## Issue Addressed

NA

## Proposed Changes

Adds the ability to verify batches of aggregated/unaggregated attestations from the network.

When the `BeaconProcessor` finds there are messages in the aggregated or unaggregated attestation queues, it will first check the length of the queue:

- `== 1` verify the attestation individually.

- `>= 2` take up to 64 of those attestations and verify them in a batch.

Notably, we only perform batch verification if the queue has a backlog. We don't apply any artificial delays to attestations to try and force them into batches.

### Batching Details

To assist with implementing batches we modify `beacon_chain::attestation_verification` to have two distinct categories for attestations:

- *Indexed* attestations: those which have passed initial validation and were valid enough for us to derive an `IndexedAttestation`.

- *Verified* attestations: those attestations which were indexed *and also* passed signature verification. These are well-formed, interesting messages which were signed by validators.

The batching functions accept `n` attestations and then return `n` attestation verification `Result`s, where those `Result`s can be any combination of `Ok` or `Err`. In other words, we attempt to verify as many attestations as possible and return specific per-attestation results so peer scores can be updated, if required.

When we batch verify attestations, we first try to map all those attestations to *indexed* attestations. If any of those attestations were able to be indexed, we then perform batch BLS verification on those indexed attestations. If the batch verification succeeds, we convert them into *verified* attestations, disabling individual signature checking. If the batch fails, we convert to verified attestations with individual signature checking enabled.

Ultimately, we optimistically try to do a batch verification of attestation signatures and fall-back to individual verification if it fails. This opens an attach vector for "poisoning" the attestations and causing us to waste a batch verification. I argue that peer scoring should do a good-enough job of defending against this and the typical-case gains massively outweigh the worst-case losses.

## Additional Info

Before this PR, attestation verification took the attestations by value (instead of by reference). It turns out that this was unnecessary and, in my opinion, resulted in some undesirable ergonomics (e.g., we had to pass the attestation back in the `Err` variant to avoid clones). In this PR I've modified attestation verification so that it now takes a reference.

I refactored the `beacon_chain/tests/attestation_verification.rs` tests so they use a builder-esque "tester" struct instead of a weird macro. It made it easier for me to test individual/batch with the same set of tests and I think it was a nice tidy-up. Notably, I did this last to try and make sure my new refactors to *actual* production code would pass under the existing test suite.

## Issue Addressed

Closes#1891Closes#1784

## Proposed Changes

Implement checkpoint sync for Lighthouse, enabling it to start from a weak subjectivity checkpoint.

## Additional Info

- [x] Return unavailable status for out-of-range blocks requested by peers (#2561)

- [x] Implement sync daemon for fetching historical blocks (#2561)

- [x] Verify chain hashes (either in `historical_blocks.rs` or the calling module)

- [x] Consistency check for initial block + state

- [x] Fetch the initial state and block from a beacon node HTTP endpoint

- [x] Don't crash fetching beacon states by slot from the API

- [x] Background service for state reconstruction, triggered by CLI flag or API call.

Considered out of scope for this PR:

- Drop the requirement to provide the `--checkpoint-block` (this would require some pretty heavy refactoring of block verification)

Co-authored-by: Diva M <divma@protonmail.com>

In previous network updates we have made our libp2p connections more lean by limiting the maximum number of connections a lighthouse node will accept before libp2p rejects new connections.

However, we still maintain the logic that at maximum connections, we try to dial extra peers if they are needed by a validator client to publish messages on a specific subnet. The dials typically result in failures due the libp2p connection limits.

This PR adds an extra factor, `PRIORITY_PEER_EXCESS` which sets aside a new allocation of peers we are able to dial in case we need these peers for the VC client. This allocation sits along side the excess peer (which allows extra incoming peers on top of our target peer limit).

The drawback here, is that libp2p now allows extra peers to connect to us (beyond the standard peer limit) which the peer manager should subsequently reject.

## Issue Addressed

N/A

## Proposed Changes

Add a note to the Doppelganger Protection docs about how it is not interoperable until an endpoint facilitating it is standardized (https://github.com/ethereum/beacon-APIs/pull/131).

## Additional Info

N/A

Co-authored-by: realbigsean <seananderson33@gmail.com>

This PR in general improves the handling around peer banning.

Specifically there were issues when multiple peers under a single IP connected to us after we banned the IP for poor behaviour.

This PR should now handle these peers gracefully as well as make some improvements around how we previously disconnected and banned peers.

The logic now goes as follows:

- Once a peer gets banned, its gets registered with its known IP addresses

- Once enough banned peers exist under a single IP that IP is banned

- We retain connections with existing peers under this IP

- Any new connections under this IP are rejected

## Issue Addressed

N/A

## Proposed Changes

Add a fork_digest to `ForkContext` only if it is set in the config.

Reject gossip messages on post fork topics before the fork happens.

Edit: Instead of rejecting gossip messages on post fork topics, we now subscribe to post fork topics 2 slots before the fork.

Co-authored-by: Age Manning <Age@AgeManning.com>

Sigma Prime is transitioning our mainnet bootnodes and this PR represents the transition of our bootnodes.

After a few releases, old boot-nodes will be deprecated.

# Description

A few changes have been made to discovery. In particular a custom re-write of an LRU cache which previously was read/write O(N) for all our sessions ~5k, to a more reasonable hashmap-style O(1).

Further there has been reported issues in the current discv5, so added error handling to help identify the issue has been added.

[EIP-3030]: https://eips.ethereum.org/EIPS/eip-3030

[Web3Signer]: https://consensys.github.io/web3signer/web3signer-eth2.html

## Issue Addressed

Resolves#2498

## Proposed Changes

Allows the VC to call out to a [Web3Signer] remote signer to obtain signatures.

## Additional Info

### Making Signing Functions `async`

To allow remote signing, I needed to make all the signing functions `async`. This caused a bit of noise where I had to convert iterators into `for` loops.

In `duties_service.rs` there was a particularly tricky case where we couldn't hold a write-lock across an `await`, so I had to first take a read-lock, then grab a write-lock.

### Move Signing from Core Executor

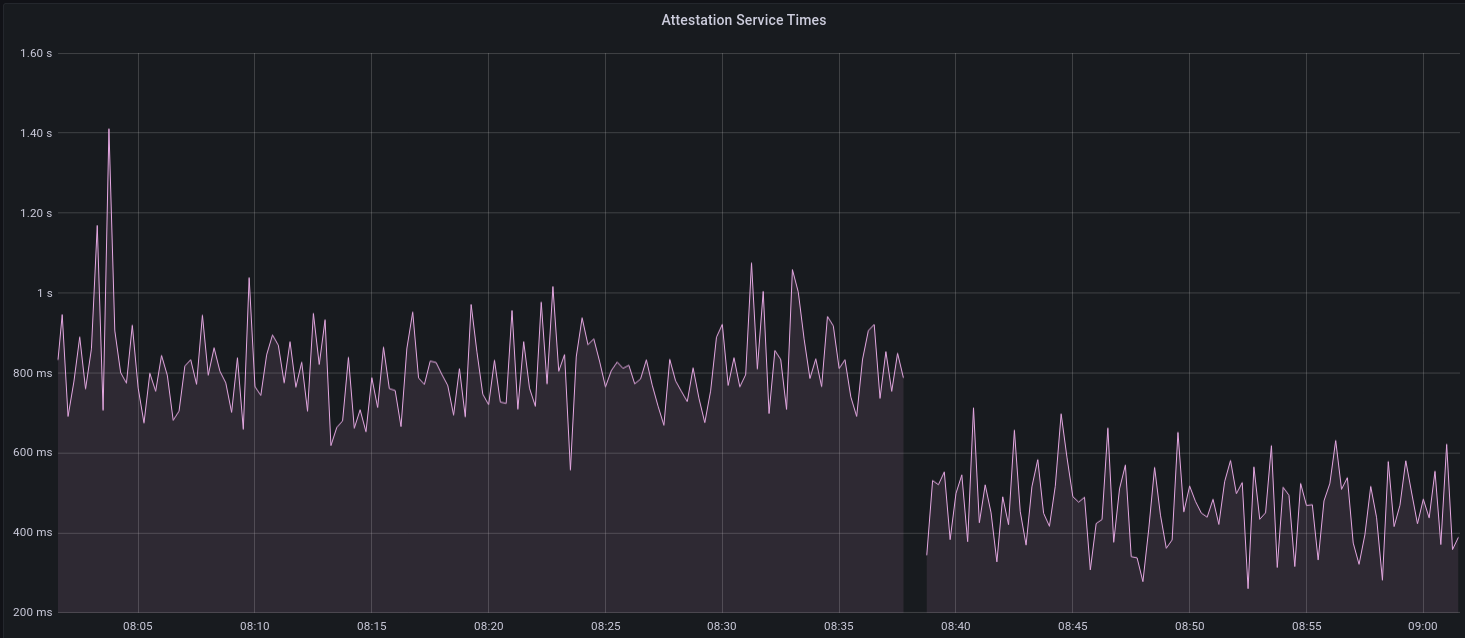

Whilst implementing this feature, I noticed that we signing was happening on the core tokio executor. I suspect this was causing the executor to temporarily lock and occasionally trigger some HTTP timeouts (and potentially SQL pool timeouts, but I can't verify this). Since moving all signing into blocking tokio tasks, I noticed a distinct drop in the "atttestations_http_get" metric on a Prater node:

I think this graph indicates that freeing the core executor allows the VC to operate more smoothly.

### Refactor TaskExecutor

I noticed that the `TaskExecutor::spawn_blocking_handle` function would fail to spawn tasks if it were unable to obtain handles to some metrics (this can happen if the same metric is defined twice). It seemed that a more sensible approach would be to keep spawning tasks, but without metrics. To that end, I refactored the function so that it would still function without metrics. There are no other changes made.

## TODO

- [x] Restructure to support multiple signing methods.

- [x] Add calls to remote signer from VC.

- [x] Documentation

- [x] Test all endpoints

- [x] Test HTTPS certificate

- [x] Allow adding remote signer validators via the API

- [x] Add Altair support via [21.8.1-rc1](https://github.com/ConsenSys/web3signer/releases/tag/21.8.1-rc1)

- [x] Create issue to start using latest version of web3signer. (See #2570)

## Notes

- ~~Web3Signer doesn't yet support the Altair fork for Prater. See https://github.com/ConsenSys/web3signer/issues/423.~~

- ~~There is not yet a release of Web3Signer which supports Altair blocks. See https://github.com/ConsenSys/web3signer/issues/391.~~

## Proposed Changes

* Modify the `TaskExecutor` so that it spawns a "monitor" future for each future spawned by `spawn` or `spawn_blocking`. This monitor future joins the handle of the child future and shuts down the executor if it detects a panic.

* Enable backtraces by default by setting the environment variable `RUST_BACKTRACE`.

* Spawn the `ProductionBeaconNode` on the `TaskExecutor` so that if a panic occurs during start-up it will take down the whole process. Previously we were using a raw Tokio `spawn`, but I can't see any reason not to use the executor (perhaps someone else can).

## Additional Info

I considered using [`std::panic::set_hook`](https://doc.rust-lang.org/std/panic/fn.set_hook.html) to instantiate a custom panic handler, however this doesn't allow us to send a shutdown signal because `Fn` functions can't move variables (i.e. the shutdown sender) out of their environment. This also prevents it from receiving a `Logger`. Hence I decided to leave the panic handler untouched, but with backtraces turned on by default.

I did a run through the code base with all the raw Tokio spawn functions disallowed by Clippy, and found only two instances where we bypass the `TaskExecutor`: the HTTP API and `InitializedValidators` in the VC. In both places we use `spawn_blocking` and handle the return value, so I figured that was OK for now.

In terms of performance I think the overhead should be minimal. The monitor tasks will just get parked by the executor until their child resolves.

I've checked that this covers Discv5, as the `TaskExecutor` gets injected into Discv5 here: f9bba92db3/beacon_node/src/lib.rs (L125-L126)

The identify network debug logs can get quite noisy and are unnecessary to print on every request/response.

This PR reduces debug noise by only printing messages for identify messages that offer some new information.

## Issue Addressed

Resolves#2582

## Proposed Changes

Use `quoted_u64` and `quoted_u64_vec` custom serde deserializers from `eth2_serde_utils` to support the proper Eth2.0 API spec for `/eth/v1/validator/sync_committee_subscriptions`

## Additional Info

N/A

Move the contents of book/src/local-testnets.md into book/src/setup.md

to make it more discoverable.

Also, the link to scripts/local_testnet was missing `/local_testnet`.

## Proposed Changes

Cache the total active balance for the current epoch in the `BeaconState`. Computing this value takes around 1ms, and this was negatively impacting block processing times on Prater, particularly when reconstructing states.

With a large number of attestations in each block, I saw the `process_attestations` function taking 150ms, which means that reconstructing hot states can take up to 4.65s (31 * 150ms), and reconstructing freezer states can take up to 307s (2047 * 150ms).

I opted to add the cache to the beacon state rather than computing the total active balance at the start of state processing and threading it through. Although this would be simpler in a way, it would waste time, particularly during block replay, as the total active balance doesn't change for the duration of an epoch. So we save ~32ms for hot states, and up to 8.1s for freezer states (using `--slots-per-restore-point 8192`).

## Proposed Changes

This PR deletes all `remote_signer` code from Lighthouse, for the following reasons:

* The `remote_signer` code is unused, and we have no plans to use it now that we're moving to supporting the Web3Signer APIs: #2522

* It represents a significant maintenance burden. The HTTP API tests have been prone to platform-specific failures, and breakages due to dependency upgrades, e.g. #2400.

Although the code is deleted it remains in the Git history should we ever want to recover it. For ease of reference:

- The last commit containing remote signer code: 5a3bcd2904

- The last Lighthouse version: v1.5.1

## Proposed Changes

Remove the SIGPIPE handler added in #2486.

We saw some of the testnet nodes running under `systemd` being stopped due to `journald` restarts. The systemd docs state:

> If systemd-journald.service is stopped, the stream connections associated with all services are terminated. Further writes to those streams by the service will result in EPIPE errors. In order to react gracefully in this case it is recommended that programs logging to standard output/error ignore such errors. If the SIGPIPE UNIX signal handler is not blocked or turned off, such write attempts will also result in such process signals being generated, see signal(7).

From https://www.freedesktop.org/software/systemd/man/systemd-journald.service.html

## Additional Info

It turned out that the issue described in #2114 was due to `tee`'s behaviour rather than Lighthouse's, so the SIGPIPE handler isn't required for any current use case. An alternative to disabling it all together would be to exit with a non-zero code so that systemd knows to restart the process, but it seems more desirable to tolerate journald glitches than to restart frequently.

## Issue Addressed

N/A

## Proposed Changes

Set a valid fork epoch in the simulator and run checks on

1. If all nodes transitioned at the fork

2. If all altair block sync aggregates are full

## Issue Addressed

Resolves#2438Resolves#2437

## Proposed Changes

Changes the permissions for validator client http server api token file and secret key to 600 from 644. Also changes the permission for logfiles generated using the `--logfile` cli option to 600.

Logs the path to the api token instead of the actual api token. Updates docs to reflect the change.

## Issue Addressed

Related to: #2259

Made an attempt at all the necessary updates here to publish the crates to crates.io. I incremented the minor versions on all the crates that have been previously published. We still might run into some issues as we try to publish because I'm not able to test this out but I think it's a good starting point.

## Proposed Changes

- Add description and license to `ssz_types` and `serde_util`

- rename `serde_util` to `eth2_serde_util`

- increment minor versions

- remove path dependencies

- remove patch dependencies

## Additional Info

Crates published:

- [x] `tree_hash` -- need to publish `tree_hash_derive` and `eth2_hashing` first

- [x] `eth2_ssz_types` -- need to publish `eth2_serde_util` first

- [x] `tree_hash_derive`

- [x] `eth2_ssz`

- [x] `eth2_ssz_derive`

- [x] `eth2_serde_util`

- [x] `eth2_hashing`

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

N/A

## Proposed Changes

Add functionality in the validator monitor to provide sync committee related metrics for monitored validators.

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed

NA

## Proposed Changes

Missed head votes on attestations is a well-known issue. The primary cause is a block getting set as the head *after* the attestation deadline.

This PR aims to shorten the overall time between "block received" and "block set as head" by:

1. Persisting the head and fork choice *after* setting the canonical head

- Informal measurements show this takes ~200ms

1. Pruning the op pool *after* setting the canonical head.

1. No longer persisting the op pool to disk during `BeaconChain::fork_choice`

- Informal measurements show this can take up to 1.2s.

I also add some metrics to help measure the effect of these changes.

Persistence changes like this run the risk of breaking assumptions downstream. However, I have considered these risks and I think we're fine here. I will describe my reasoning for each change.

## Reasoning

### Change 1: Persisting the head and fork choice *after* setting the canonical head

For (1), although the function is called `persist_head_and_fork_choice`, it only persists:

- Fork choice

- Head tracker

- Genesis block root

Since `BeaconChain::fork_choice_internal` does not modify these values between the original time we were persisting it and the current time, I assert that the change I've made is non-substantial in terms of what ends up on-disk. There's the possibility that some *other* thread has modified fork choice in the extra time we've given it, but that's totally fine.

Since the only time we *read* those values from disk is during startup, I assert that this has no impact during runtime.

### Change 2: Pruning the op pool after setting the canonical head

Similar to the argument above, we don't modify the op pool during `BeaconChain::fork_choice_internal` so it shouldn't matter when we prune. This change should be non-substantial.

### Change 3: No longer persisting the op pool to disk during `BeaconChain::fork_choice`

This change *is* substantial. With the proposed changes, we'll only be persisting the op pool to disk when we shut down cleanly (i.e., the `BeaconChain` gets dropped). This means we'll save disk IO and time during usual operation, but a `kill -9` or similar "crash" will probably result in an out-of-date op pool when we reboot. An out-of-date op pool can only have an impact when producing blocks or aggregate attestations/sync committees.

I think it's pretty reasonable that a crash might result in an out-of-date op pool, since:

- Crashes are fairly rare. Practically the only time I see LH suffer a full crash is when the OOM killer shows up, and that's a very serious event.

- It's generally quite rare to produce a block/aggregate immediately after a reboot. Just a few slots of runtime is probably enough to have a decent-enough op pool again.

## Additional Info

Credits to @macladson for the timings referenced here.

## Issue Addressed

NA

## Proposed Changes

This PR adds some more fancy macro magic to make it easier to add a new built-in (aka "baked-in") testnet config to the `lighthouse` binary.

Previously, a user needed to modify several files and repeat themselves several times. Now, they only need to add a single definition in the `eth2_config` crate. No repetition 🎉

## Issue Addressed

Resolves#2033

## Proposed Changes

Adds a flag to enable shutting down beacon node right after sync is completed.

## Additional Info

Will need modification after weak subjectivity sync is enabled to change definition of a fully synced node.

## Issue Addressed

Closes#2526

## Proposed Changes

If the head block fails to decode on start up, do two things:

1. Revert all blocks between the head and the most recent hard fork (to `fork_slot - 1`).

2. Reset fork choice so that it contains the new head, and all blocks back to the new head's finalized checkpoint.

## Additional Info

I tweaked some of the beacon chain test harness stuff in order to make it generic enough to test with a non-zero slot clock on start-up. In the process I consolidated all the various `new_` methods into a single generic one which will hopefully serve all future uses 🤞

## Issue Addressed

Resolves#2114

Swapped out the ctrlc crate for tokio signals to hook register handlers for SIGPIPE and SIGHUP along with SIGTERM and SIGINT.

## Proposed Changes

- Swap out the ctrlc crate for tokio signals for unix signal handing

- Register signals for SIGPIPE and SHIGUP that trigger the same shutdown procedure as SIGTERM and SIGINT

## Additional Info

I tested these changes against the examples in the original issue and noticed some interesting behavior on my machine. When running `lighthouse bn --network pyrmont |& tee -a pyrmont_bn.log` or `lighthouse bn --network pyrmont 2>&1 | tee -a pyrmont_bn.log` none of the above signals are sent to the lighthouse program in a way I was able to observe.

The only time it seems that the signal gets sent to the lighthouse program is if there is no redirection of stderr to stdout. I'm not as familiar with the details of how unix signals work in linux with a redirect like that so I'm not sure if this is a bug in the program or expected behavior.

Signals are correctly received without the redirection and if the above signals are sent directly to the program with something like `kill`.

## Issue Addressed

Resolves#2487

## Proposed Changes

Logs a message once in every invocation of `Eth1Service::update` method if the primary endpoint is unavailable for some reason.

e.g.

```log

Aug 03 00:09:53.517 WARN Error connecting to eth1 node endpoint action: trying fallbacks, endpoint: http://localhost:8545/, service: eth1_rpc

Aug 03 00:09:56.959 INFO Fetched data from fallback fallback_number: 1, service: eth1_rpc

```

The main aim of this PR is to have an accompanying message to the "action: trying fallbacks" error message that is returned when checking the endpoint for liveness. This is mainly to indicate to the user that the fallback was live and reachable.

## Additional info

This PR is not meant to be a catch all for all cases where the primary endpoint failed. For instance, this won't log anything if the primary node was working fine during endpoint liveness checking and failed during deposit/block fetching. This is done intentionally to reduce number of logs while initial deposit/block sync and to avoid more complicated logic.

## Issue Addressed

NA

## Proposed Changes

This PR expands the time that entries exist in the gossip-sub duplicate cache. Recent investigations found that this cache is one slot (12s) shorter than the period for which an attestation is permitted to propagate on the gossip network.

Before #2540, this was causing peers to be unnecessarily down-scored for sending old attestations. Although that issue has been fixed, the duplicate cache time is increased here to avoid such messages from getting any further up the networking stack then required.

## Additional Info

NA

## Issue Addressed

https://github.com/eth2-clients/eth2-networks/pull/58

## Proposed Changes

Add the fork epoch for Altair on Prater: 36660

## Additional Info

This `config.yaml` is copied exactly from upstream. Large parts already matched due to our preemptive move to the new config style.

## Issue Addressed

NA

## Proposed Changes

A Discord user presented logs which indicated a drop in their peer count caused by a variety of peers sending attestations where we'd already seen an attestation for that validator. It's presently unclear how this case came about, but during our investigation I noticed that we are down-voting peers for sending such attestations.

There are three scenarios where we may receive duplicate unagg. attestations from the same validator:

1. The validator is committing a slashable offense.

2. The gossipsub message-deduping functionality is not working as expected.

3. We received the message via the HTTP prior to seeing it via gossip.

Scenario (1) would be so costly for an attacker that I don't think we need to add DoS protection for it.

Scenario (2) seems feasible. Our "seen message" caches in gossipsub might fill up/expire and let through these duplicates. There are also cases involving message ID mismatches with the other peers. In both these cases, I don't think we should be doing 1 attestation == -1 point down-voting.

Scenario (3) is not necessarily a fault of the peer and we shouldn't down-score them for it.

## Additional Info

NA

Due to the altair fork, in principle we can now subscribe to up to 148 topics. This bypasses our original limit and we can end up rejecting subscriptions.

This PR increases the limit to account for the fork.

## Issue Addressed

N/A

## Proposed Changes

Adds a cli option to disable packet filter in `lighthouse bootnode`. This is useful in running local testnets as the bootnode bans requests from the same ip(localhost) if the packet filter is enabled.

## Issue Addressed

Resolves#2524

## Proposed Changes

- Return all known forks in the `/config/fork_schedule`, previously returned only the head of the chain's fork.

- Deleted the `StateId::head` method because it was only previously used in this endpoint.

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

Resolves#2406

## Proposed Changes

Add windows release binaries to our CI

## Additional Info

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

N/A

## Proposed Changes

Refactor discovery queries such that only `QueryType::Subnet` queries are queued for grouping. `QueryType::FindPeers` is always made regardless of the number of active `Subnet` queries (max = 2). This prevents `FindPeers` queries from getting starved if `Subnet` queries start queuing up.

Also removes `GroupedQueryType` struct and use `QueryType` for all queuing and processing of discovery requests.

## Additional Info

Currently, no distinction is made between subnet discovery queries for attestation and sync committee subnets when grouping queries. Potentially we could prioritise attestation queries over sync committee queries in the future.

## Issue Addressed

NA

## Proposed Changes

- Version bump

- Increase queue sizes for aggregated attestations and re-queued attestations.

## Additional Info

NA

## Issue Addressed

Which issue # does this PR address?

## Proposed Changes

- Add a counter metric to log when a block is received late from gossip.

- Also push a `DEBG` log for the above condition.

- Use Debug (`?`) instead of Display (`%`) for a bunch of logs in the beacon processor, so we don't have to deal with concatenated block roots.

- Add new ERRO and CRIT to HTTP API to alert users when they're publishing late blocks.

## Additional Info

NA