## Issue Addressed

#3853

## Proposed Changes

Added `INFO` level logs for requesting and receiving the unsigned block.

## Additional Info

Logging for successfully publishing the signed block is already there. And seemingly there is a log for when "We realize we are going to produce a block" in the `start_update_service`: `info!(log, "Block production service started");

`. Is there anywhere else you'd like to see logging around this event?

Co-authored-by: GeemoCandama <104614073+GeemoCandama@users.noreply.github.com>

## Issue Addressed

#3780

## Proposed Changes

Add error reporting that notifies the node operator that the `voting_keystore_path` in their `validator_definitions.yml` file may be incorrect.

## Additional Info

There is more info in issue #3780

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Proposed Changes

With proposer boosting implemented (#2822) we have an opportunity to re-org out late blocks.

This PR adds three flags to the BN to control this behaviour:

* `--disable-proposer-reorgs`: turn aggressive re-orging off (it's on by default).

* `--proposer-reorg-threshold N`: attempt to orphan blocks with less than N% of the committee vote. If this parameter isn't set then N defaults to 20% when the feature is enabled.

* `--proposer-reorg-epochs-since-finalization N`: only attempt to re-org late blocks when the number of epochs since finalization is less than or equal to N. The default is 2 epochs, meaning re-orgs will only be attempted when the chain is finalizing optimally.

For safety Lighthouse will only attempt a re-org under very specific conditions:

1. The block being proposed is 1 slot after the canonical head, and the canonical head is 1 slot after its parent. i.e. at slot `n + 1` rather than building on the block from slot `n` we build on the block from slot `n - 1`.

2. The current canonical head received less than N% of the committee vote. N should be set depending on the proposer boost fraction itself, the fraction of the network that is believed to be applying it, and the size of the largest entity that could be hoarding votes.

3. The current canonical head arrived after the attestation deadline from our perspective. This condition was only added to support suppression of forkchoiceUpdated messages, but makes intuitive sense.

4. The block is being proposed in the first 2 seconds of the slot. This gives it time to propagate and receive the proposer boost.

## Additional Info

For the initial idea and background, see: https://github.com/ethereum/consensus-specs/pull/2353#issuecomment-950238004

There is also a specification for this feature here: https://github.com/ethereum/consensus-specs/pull/3034

Co-authored-by: Michael Sproul <micsproul@gmail.com>

Co-authored-by: pawan <pawandhananjay@gmail.com>

## Issue Addressed

#3766

## Proposed Changes

Adds an endpoint to get the graffiti that will be used for the next block proposal for each validator.

## Usage

```bash

curl -H "Authorization: Bearer api-token" http://localhost:9095/lighthouse/ui/graffiti | jq

```

```json

{

"data": {

"0x81283b7a20e1ca460ebd9bbd77005d557370cabb1f9a44f530c4c4c66230f675f8df8b4c2818851aa7d77a80ca5a4a5e": "mr f was here",

"0xa3a32b0f8b4ddb83f1a0a853d81dd725dfe577d4f4c3db8ece52ce2b026eca84815c1a7e8e92a4de3d755733bf7e4a9b": "mr v was here",

"0x872c61b4a7f8510ec809e5b023f5fdda2105d024c470ddbbeca4bc74e8280af0d178d749853e8f6a841083ac1b4db98f": null

}

}

```

## Additional Info

This will only return graffiti that the validator client knows about.

That is from these 3 sources:

1. Graffiti File

2. validator_definitions.yml

3. The `--graffiti` flag on the VC

If the graffiti is set on the BN, it will not be returned. This may warrant an additional endpoint on the BN side which can be used in the event the endpoint returns `null`.

This PR adds some health endpoints for the beacon node and the validator client.

Specifically it adds the endpoint:

`/lighthouse/ui/health`

These are not entirely stable yet. But provide a base for modification for our UI.

These also may have issues with various platforms and may need modification.

## Issue Addressed

Closes#3612

## Proposed Changes

- Iterates through BNs until it finds a non-optimistic head.

A slight change in error behavior:

- Previously: `spawn_contribution_tasks` did not return an error for a non-optimistic block head. It returned `Ok(())` logged a warning.

- Now: `spawn_contribution_tasks` returns an error if it cannot find a non-optimistic block head. The caller of `spawn_contribution_tasks` then logs the error as a critical error.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

New lints for rust 1.65

## Proposed Changes

Notable change is the identification or parameters that are only used in recursion

## Additional Info

na

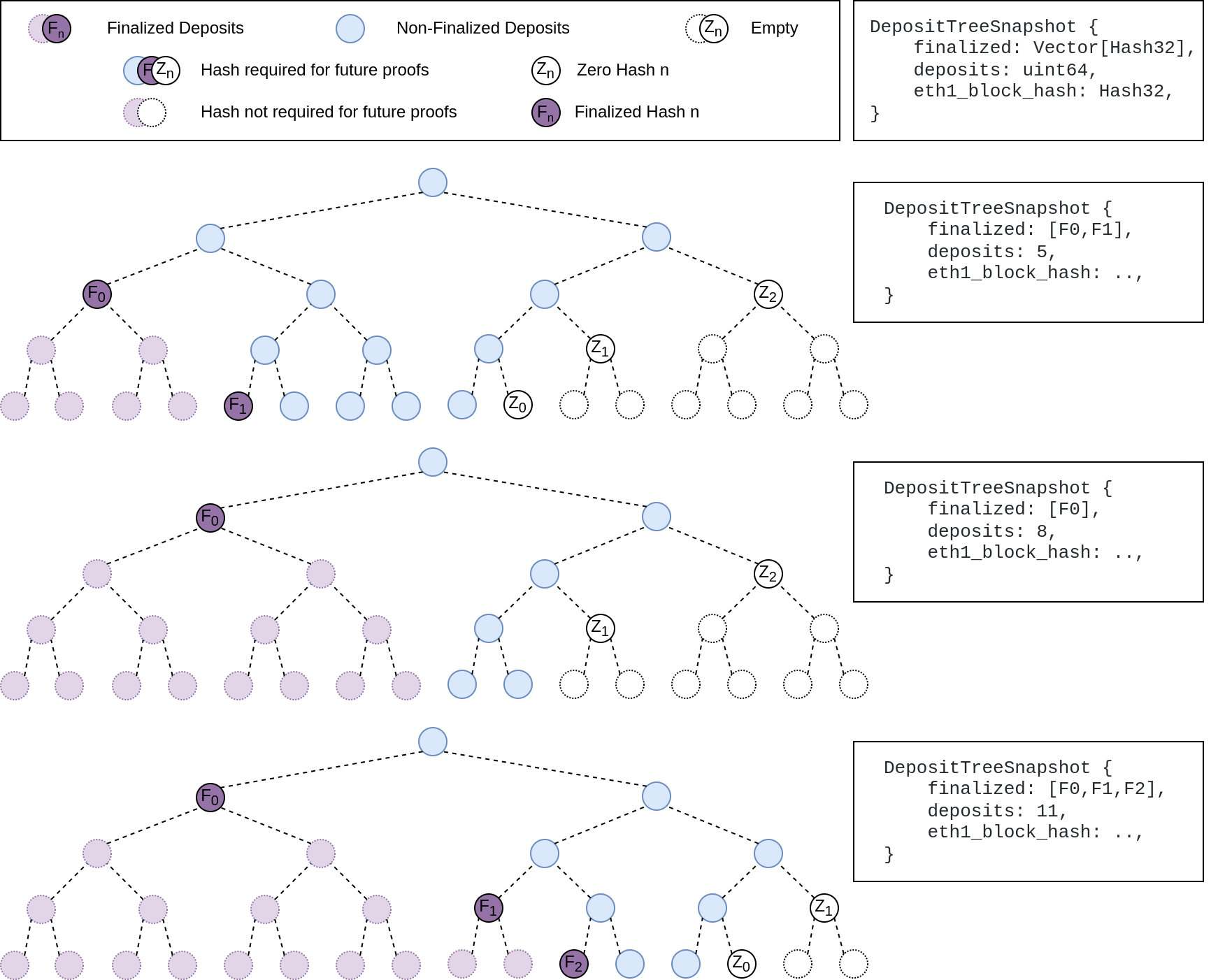

## Summary

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.

2. Significantly faster weak subjectivity sync as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.

3. Future proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444) as execution nodes will no longer be required to store logs permanently so we won't always have all historical logs available to us.

## More Details

Image to illustrate how the deposit contract merkle tree evolves and finalizes along with the resulting `DepositTreeSnapshot`

## Other Considerations

I've changed the structure of the `SszDepositCache` so once you load & save your database from this version of lighthouse, you will no longer be able to load it from older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

## Issue Addressed

Resolves#3516

## Proposed Changes

Adds a beacon fallback function for running a beacon node http query on all available fallbacks instead of returning on a first successful result. Uses the new `run_on_all` method for attestation and sync committee subscriptions.

## Additional Info

Please provide any additional information. For example, future considerations

or information useful for reviewers.

## Issue Addressed

fixes lints from the last rust release

## Proposed Changes

Fix the lints, most of the lints by `clippy::question-mark` are false positives in the form of https://github.com/rust-lang/rust-clippy/issues/9518 so it's allowed for now

## Additional Info

## Issue Addressed

Resolves: #3550

Remove the `--strict-fee-recipient` flag. It will cause missed proposals prior to the bellatrix transition.

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Closes#3514

## Proposed Changes

- Change default monitoring endpoint frequency to 120 seconds to fit with 30k requests/month limit.

- Allow configuration of the monitoring endpoint frequency using `--monitoring-endpoint-frequency N` where `N` is a value in seconds.

## Issue Addressed

[Have --checkpoint-sync-url timeout](https://github.com/sigp/lighthouse/issues/3478)

## Proposed Changes

I added a parameter for `get_bytes_opt_accept_header<U: IntoUrl>` which accept a timeout duration, and modified the body of `get_beacon_blocks_ssz` and `get_debug_beacon_states_ssz` to pass corresponding timeout durations.

## Issue Addressed

Resolves#3524

## Proposed Changes

Log fee recipient in the `Validator exists in beacon chain` log. Logging in the BN already happens here 18c61a5e8b/beacon_node/beacon_chain/src/beacon_chain.rs (L3858-L3865)

I also think it's good practice to encourage users to set the fee recipient in the VC rather than the BN because of issues mentioned here https://github.com/sigp/lighthouse/issues/3432

Some example logs from prater:

```

Aug 30 03:47:09.922 INFO Validator exists in beacon chain fee_recipient: 0xab97_ad88, validator_index: 213615, pubkey: 0xb542b69ba14ddbaf717ca1762ece63a4804c08d38a1aadf156ae718d1545942e86763a1604f5065d4faa550b7259d651, service: duties

Aug 30 03:48:05.505 INFO Validator exists in beacon chain fee_recipient: Fee recipient for validator not set in validator_definitions.yml or provided with the `--suggested-fee-recipient flag`, validator_index: 210710, pubkey: 0xad5d67cc7f990590c7b3fa41d593c4cf12d9ead894be2311fbb3e5c733d8c1b909e9d47af60ea3480fb6b37946c35390, service: duties

```

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

Relates to https://github.com/sigp/lighthouse/issues/3416

## Proposed Changes

- Add an `OfflineOnFailure` enum to the `first_success` method for querying beacon nodes so that a val registration request failure from the BN -> builder does not result in the BN being marked offline. This seems important because these failures could be coming directly from a connected relay and actually have no bearing on BN health. Other messages that are sent to a relay have a local fallback so shouldn't result in errors

- Downgrade the following log to a `WARN`

```

ERRO Unable to publish validator registrations to the builder network, error: All endpoints failed https://BN_B => RequestFailed(ServerMessage(ErrorMessage { code: 500, message: "UNHANDLED_ERROR: BuilderMissing", stacktraces: [] })), https://XXXX/ => Unavailable(Offline), [omitted]

```

## Additional Info

I think this change at least improves the UX of having a VC connected to some builder and some non-builder beacon nodes. I think we need to balance potentially alerting users that there is a BN <> VC misconfiguration and also allowing this type of fallback to work.

If we want to fully support this type of configuration we may want to consider adding a flag `--builder-beacon-nodes` and track whether a VC should be making builder queries on a per-beacon node basis. But I think the changes in this PR are independent of that type of extension.

PS: Sorry for the big diff here, it's mostly formatting changes after I added a new arg to a bunch of methods calls.

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

NA

## Proposed Changes

Indicate that local keystores are `readonly: Some(false)` rather than `None` via the `/eth/v1/keystores` method on the VC API.

I'll mark this as backwards-incompat so we remember to mention it in the release notes. There aren't any type-level incompatibilities here, just a change in how Lighthouse responds to responses.

## Additional Info

- Blocked on #3464

## Proposed Changes

Update the merge migration docs to encourage updating mainnet configs _now_!

The docs are also updated to recommend _against_ `--suggested-fee-recipient` on the beacon node (https://github.com/sigp/lighthouse/issues/3432).

Additionally the `--help` for the CLI is updated to match with a few small semantic changes:

- `--execution-jwt` is no longer allowed without `--execution-endpoint`. We've ended up without a default for `--execution-endpoint`, so I think that's fine.

- The flags related to the JWT are only allowed if `--execution-jwt` is provided.

## Issue Addressed

Resolves#3388Resolves#2638

## Proposed Changes

- Return the `BellatrixPreset` on `/eth/v1/config/spec` by default.

- Allow users to opt out of this by providing `--http-spec-fork=altair` (unless there's a Bellatrix fork epoch set).

- Add the Altair constants from #2638 and make serving the constants non-optional (the `http-disable-legacy-spec` flag is deprecated).

- Modify the VC to only read the `Config` and not to log extra fields. This prevents it from having to muck around parsing the `ConfigAndPreset` fields it doesn't need.

## Additional Info

This change is backwards-compatible for the VC and the BN, but is marked as a breaking change for the removal of `--http-disable-legacy-spec`.

I tried making `Config` a `superstruct` too, but getting the automatic decoding to work was a huge pain and was going to require a lot of hacks, so I gave up in favour of keeping the default-based approach we have now.

## Issue Addressed

Fixes#2967

## Proposed Changes

Collect validator indices alongside attestations when creating signed

attestations (and aggregates) for inclusion in the logs.

## Additional Info

This is my first time looking at Lighthouse source code and using Rust, so newbie feedback appreciated!

## Issue Addressed

https://github.com/sigp/lighthouse/issues/3091

Extends https://github.com/sigp/lighthouse/pull/3062, adding pre-bellatrix block support on blinded endpoints and allowing the normal proposal flow (local payload construction) on blinded endpoints. This resulted in better fallback logic because the VC will not have to switch endpoints on failure in the BN <> Builder API, the BN can just fallback immediately and without repeating block processing that it shouldn't need to. We can also keep VC fallback from the VC<>BN API's blinded endpoint to full endpoint.

## Proposed Changes

- Pre-bellatrix blocks on blinded endpoints

- Add a new `PayloadCache` to the execution layer

- Better fallback-from-builder logic

## Todos

- [x] Remove VC transition logic

- [x] Add logic to only enable builder flow after Merge transition finalization

- [x] Tests

- [x] Fix metrics

- [x] Rustdocs

Co-authored-by: Mac L <mjladson@pm.me>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Resolves#3151

## Proposed Changes

When fetching duties for sync committee contributions, check the value of `execution_optimistic` of the head block from the BN and refuse to sign any sync committee messages `if execution_optimistic == true`.

## Additional Info

- Is backwards compatible with older BNs

- Finding a way to add test coverage for this would be prudent. Open to suggestions.

## Issue Addressed

Resolves#3267Resolves#3156

## Proposed Changes

- Move the log for fee recipient checks from proposer cache insertion into block proposal so we are directly checking what we get from the EE

- Only log when there is a discrepancy with the local EE, not when using the builder API. In the `builder-api` branch there is an `info` log when there is a discrepancy, I think it is more likely there will be a difference in fee recipient with the builder api because proposer payments might be made via a transaction in the block. Not really sure what patterns will become commong.

- Upgrade the log from a `warn` to an `error` - not actually sure which we want, but I think this is worth an error because the local EE with default transaction ordering I think should pretty much always use the provided fee recipient

- add a `strict-fee-recipient` flag to the VC so we only sign blocks with matching fee recipients. Falls back from the builder API to the local API if there is a discrepancy .

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

#3302

## Proposed Changes

Move the `reqwest::Client` from being initialized per-validator, to being initialized per distinct Web3Signer.

This is done by placing the `Client` into a `HashMap` keyed by the definition of the Web3Signer as specified by the `ValidatorDefintion`. This will allow multiple Web3Signers to be used with a single VC and also maintains backwards compatibility.

## Additional Info

This was done to reduce the memory used by the VC when connecting to a Web3Signer.

I set up a local testnet using [a custom script](https://github.com/macladson/lighthouse/tree/web3signer-local-test/scripts/local_testnet_web3signer) and ran a VC with 200 validator keys:

VC with Web3Signer:

- `unstable`: ~200MB

- With fix: ~50MB

VC with Local Signer:

- `unstable`: ~35MB

- With fix: ~35MB

> I'm seeing some fragmentation with the VC using the Web3Signer, but not when using a local signer (this is most likely due to making lots of http requests and dealing with lots of JSON objects). I tested the above using `MALLOC_ARENA_MAX=1` to try to reduce the fragmentation. Without it, the values are around +50MB for both `unstable` and the fix.

## Issue Addressed

Web3signer validators can't produce post-Bellatrix blocks.

## Proposed Changes

Add support for Bellatrix to web3signer validators.

## Additional Info

I am running validators with this code on Ropsten, but it may be a while for them to get a proposal.

## Issue Addressed

Resolves#3276.

## Proposed Changes

Add a timeout for the sync committee contributions at 1/4 the slot length such that we may be able to try backup beacon nodes in the case of contribution post failure.

## Additional Info

1/4 slot length seemed standard for the timeouts, but may want to decrease this to 1/2.

I did not find any timeout related / sync committee related tests, so there are no tests. Happy to write some with a bit of guidance.

## Issue Addressed

* #3173

## Proposed Changes

Moved all `fee_recipient_file` related logic inside the `ValidatorStore` as it makes more sense to have this all together there. I tested this with the validators I have on `mainnet-shadow-fork-5` and everything appeared to work well. Only technicality is that I can't get the method to return `401` when the authorization header is not specified (it returns `400` instead). Fixing this is probably quite difficult given that none of `warp`'s rejections have code `401`.. I don't really think this matters too much though as long as it fails.

## Issue Addressed

Lays the groundwork for builder API changes by implementing the beacon-API's new `register_validator` endpoint

## Proposed Changes

- Add a routine in the VC that runs on startup (re-try until success), once per epoch or whenever `suggested_fee_recipient` is updated, signing `ValidatorRegistrationData` and sending it to the BN.

- TODO: `gas_limit` config options https://github.com/ethereum/builder-specs/issues/17

- BN only sends VC registration data to builders on demand, but VC registration data *does update* the BN's prepare proposer cache and send an updated fcU to a local EE. This is necessary for fee recipient consistency between the blinded and full block flow in the event of fallback. Having the BN only send registration data to builders on demand gives feedback directly to the VC about relay status. Also, since the BN has no ability to sign these messages anyways (so couldn't refresh them if it wanted), and validator registration is independent of the BN head, I think this approach makes sense.

- Adds upcoming consensus spec changes for this PR https://github.com/ethereum/consensus-specs/pull/2884

- I initially applied the bit mask based on a configured application domain.. but I ended up just hard coding it here instead because that's how it's spec'd in the builder repo.

- Should application mask appear in the api?

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Following up from https://github.com/sigp/lighthouse/pull/3223#issuecomment-1158718102, it has been observed that the validator client uses vastly more memory in some compilation configurations than others. Compiling with Cross and then putting the binary into an Ubuntu 22.04 image seems to use 3x more memory than compiling with Cargo directly on Debian bullseye.

## Proposed Changes

Enable malloc metrics for the validator client. This will hopefully allow us to see the difference between the two compilation configs and compare heap fragmentation. This PR doesn't enable malloc tuning for the VC because it was found to perform significantly worse. The `--disable-malloc-tuning` flag is repurposed to just disable the metrics.

## Issue Addressed

#3154

## Proposed Changes

Add three new metrics for the VC:

1. `vc_beacon_nodes_synced_count`

2. `vc_beacon_nodes_available_count`

3. `vc_beacon_nodes_total_count`

Their values mirror the values present in the following log line:

```

Apr 08 17:25:17.000 INFO Connected to beacon node(s) synced: 4, available: 4, total: 4, service: notifier

```

## Issue Addressed

This fixes the low-hanging Clippy lints introduced in Rust 1.61 (due any hour now). It _ignores_ one lint, because fixing it requires a structural refactor of the validator client that needs to be done delicately. I've started on that refactor and will create another PR that can be reviewed in more depth in the coming days. I think we should merge this PR in the meantime to unblock CI.

## Issue Addressed

Web3Signer validators do not support client authentication. This means the `--tls-known-clients-file` option on Web3Signer can't be used with Lighthouse.

## Proposed Changes

Add two new fields to Web3Signer validators, `client_identity_path` and `client_identity_password`, which specify the path and password for a PKCS12 file containing a certificate and private key. If `client_identity_path` is present, use the certificate for SSL client authentication.

## Additional Info

I am successfully validating on Prater using client authentication with Web3Signer and client authentication.

## Issue Addressed

#3141

## Proposed Changes

Changes the algorithm for proposing blocks from

```

For each BN (first success):

- Produce a block

- Sign the block and store its root in the slashing protection DB

- Publish the block

```

to

```

For each BN (first success):

- Produce a block

Sign the block and store its root in the slashing protection DB

For each BN (first success):

- Publish the block

```

Separating the producing from the publishing makes sure that we only add a signed block once to the slashing DB.

# Description

Since the `TaskExecutor` currently requires a `Weak<Runtime>`, it's impossible to use it in an async test where the `Runtime` is created outside our scope. Whilst we *could* create a new `Runtime` instance inside the async test, dropping that `Runtime` would cause a panic (you can't drop a `Runtime` in an async context).

To address this issue, this PR creates the `enum Handle`, which supports either:

- A `Weak<Runtime>` (for use in our production code)

- A `Handle` to a runtime (for use in testing)

In theory, there should be no change to the behaviour of our production code (beyond some slightly different descriptions in HTTP 500 errors), or even our tests. If there is no change, you might ask *"why bother?"*. There are two PRs (#3070 and #3175) that are waiting on these fixes to introduce some new tests. Since we've added the EL to the `BeaconChain` (for the merge), we are now doing more async stuff in tests.

I've also added a `RuntimeExecutor` to the `BeaconChainTestHarness`. Whilst that's not immediately useful, it will become useful in the near future with all the new async testing.

Code simplifications using `Option`/`Result` combinators to make pattern-matches a tad simpler.

Opinions on these loosely held, happy to adjust in review.

Tool-aided by [comby-rust](https://github.com/huitseeker/comby-rust).

## Issue Addressed

#3068

## Proposed Changes

Adds support for remote key API.

## Additional Info

Needed to add `is_local_keystore` argument to `delete_definition_and_keystore` to know if we want to delete local or remote key. Previously this wasn't necessary because remotekeys(web3signers) could be deleted.

## Proposed Changes

I did some gardening 🌳 in our dependency tree:

- Remove duplicate versions of `warp` (git vs patch)

- Remove duplicate versions of lots of small deps: `cpufeatures`, `ethabi`, `ethereum-types`, `bitvec`, `nix`, `libsecp256k1`.

- Update MDBX (should resolve#3028). I tested and Lighthouse compiles on Windows 11 now.

- Restore `psutil` back to upstream

- Make some progress updating everything to rand 0.8. There are a few crates stuck on 0.7.

Hopefully this puts us on a better footing for future `cargo audit` issues, and improves compile times slightly.

## Additional Info

Some crates are held back by issues with `zeroize`. libp2p-noise depends on [`chacha20poly1305`](https://crates.io/crates/chacha20poly1305) which depends on zeroize < v1.5, and we can only have one version of zeroize because it's post 1.0 (see https://github.com/rust-lang/cargo/issues/6584). The latest version of `zeroize` is v1.5.4, which is used by the new versions of many other crates (e.g. `num-bigint-dig`). Once a new version of chacha20poly1305 is released we can update libp2p-noise and upgrade everything to the latest `zeroize` version.

I've also opened a PR to `blst` related to zeroize: https://github.com/supranational/blst/pull/111

## Issue Addressed

MEV boost compatibility

## Proposed Changes

See #2987

## Additional Info

This is blocked on the stabilization of a couple specs, [here](https://github.com/ethereum/beacon-APIs/pull/194) and [here](https://github.com/flashbots/mev-boost/pull/20).

Additional TODO's and outstanding questions

- [ ] MEV boost JWT Auth

- [ ] Will `builder_proposeBlindedBlock` return the revealed payload for the BN to propogate

- [ ] Should we remove `private-tx-proposals` flag and communicate BN <> VC with blinded blocks by default once these endpoints enter the beacon-API's repo? This simplifies merge transition logic.

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

#3103

## Proposed Changes

Parse `http-address` and `metrics-address` as `IpAddr` for both the beacon node and validator client to support IPv6 addresses.

Also adjusts parsing of CORS origins to allow for IPv6 addresses.

## Usage

You can now set `http-address` and/or `metrics-address` flags to IPv6 addresses.

For example, the following:

`lighthouse bn --http --http-address :: --metrics --metrics-address ::1`

will expose the beacon node HTTP server on `[::]` (equivalent of `0.0.0.0` in IPv4) and the metrics HTTP server on `localhost` (the equivalent of `127.0.0.1` in IPv4)

The beacon node API can then be accessed by:

`curl "http://[server-ipv6-address]:5052/eth/v1/some_endpoint"`

And the metrics server api can be accessed by:

`curl "http://localhost:5054/metrics"` or by `curl "http://[::1]:5054/metrics"`

## Additional Info

On most Linux distributions the `v6only` flag is set to `false` by default (see the section for the `IPV6_V6ONLY` flag in https://www.man7.org/linux/man-pages/man7/ipv6.7.html) which means IPv4 connections will continue to function on a IPv6 address (providing it is appropriately mapped). This means that even if the Lighthouse API is running on `::` it is also possible to accept IPv4 connections.

However on Windows, this is not the case. The `v6only` flag is set to `true` so binding to `::` will only allow IPv6 connections.

## Issue Addressed

Presently if the VC is configured with a fee recipient it will error out when sending fee-recipient preparations to a beacon node that doesn't yet support the API:

```

Mar 08 22:23:36.236 ERRO Unable to publish proposer preparation error: All endpoints failed https://eth2-beacon-prater.infura.io/ => RequestFailed(StatusCode(404)), service: preparation

```

This doesn't affect other VC duties, but could be a source of anxiety for users trying to do the right thing and configure their fee recipients in advance.

## Proposed Changes

Change the preparation service to only send preparations if the current slot is later than 2 epochs before the Bellatrix hard fork epoch.

## Additional Info

I've tagged this v2.1.4 as I think it's a small change that's worth having for the next release

## Proposed Changes

Lots of lint updates related to `flat_map`, `unwrap_or_else` and string patterns. I did a little more creative refactoring in the op pool, but otherwise followed Clippy's suggestions.

## Additional Info

We need this PR to unblock CI.

## Issue Addressed

#3020

## Proposed Changes

- Alias the `validators-dir` arg to `validator-dir` in the `validator_client` subcommand.

- Alias the `validator-dir` arg to `validators-dir` in the `account_manager validator` subcommand.

- Add test for the validator_client alias.

## Issue Addressed

Addresses https://github.com/sigp/lighthouse/issues/2926

## Proposed Changes

Appropriated from https://github.com/sigp/lighthouse/issues/2926#issuecomment-1039676768:

When a node returns *any* error we call [`CandidateBeaconNode::set_offline`](c3a793fd73/validator_client/src/beacon_node_fallback.rs (L424)) which sets it's `status` to `CandidateError::Offline`. That node will then be ignored until the routine [`fallback_updater_service`](c3a793fd73/validator_client/src/beacon_node_fallback.rs (L44)) manages to reconnect to it.

However, I believe there was an issue in the [`CanidateBeaconNode::refesh_status`](c3a793fd73/validator_client/src/beacon_node_fallback.rs (L157-L178)) method, which is used by the updater service to see if the node has come good again. It was holding a [write lock on the `status` field](c3a793fd73/validator_client/src/beacon_node_fallback.rs (L165)) whilst it polled the node status. This means a long timeout would hog the write lock and starve other processes.

When a VC is trying to access a beacon node for whatever purpose (getting duties, posting blocks, etc), it performs [three passes](c3a793fd73/validator_client/src/beacon_node_fallback.rs (L432-L482)) through the lists of nodes, trying to run some generic `function` (closure, lambda, etc) on each node:

- 1st pass: only try running `function` on all nodes which are both synced and online.

- 2nd pass: try running `function` on all nodes that are online, but not necessarily synced.

- 3rd pass: for each offline node, try refreshing its status and then running `function` on it.

So, it turns out that if the `CanidateBeaconNode::refesh_status` function from the routine update service is hogging the write-lock, the 1st pass gets blocked whilst trying to read the status of the first node. So, nodes that should be left until the 3rd pass are blocking the process of the 1st and 2nd passes, hence the behaviour described in #2926.

## Additional Info

NA