## Issue Addressed

Closes#4354Closes#3987

Replaces #4305, #4283

## Proposed Changes

This switches the default slasher backend _back_ to LMDB.

If an MDBX database exists and the MDBX backend is enabled then MDBX will continue to be used. Our release binaries and Docker images will continue to include MDBX for as long as it is practical, so users of these should not notice any difference.

The main benefit is to users compiling from source and devs running tests. These users no longer have to struggle to compile MDBX and deal with the compatibility issues that arises. Similarly, devs don't need to worry about toggling feature flags in tests or risk forgetting to run the slasher tests due to backend issues.

This PR adds the ability to read the Lighthouse logs from the HTTP API for both the BN and the VC.

This is done in such a way to as minimize any kind of performance hit by adding this feature.

The current design creates a tokio broadcast channel and mixes is into a form of slog drain that combines with our main global logger drain, only if the http api is enabled.

The drain gets the logs, checks the log level and drops them if they are below INFO. If they are INFO or higher, it sends them via a broadcast channel only if there are users subscribed to the HTTP API channel. If not, it drops the logs.

If there are more than one subscriber, the channel clones the log records and converts them to json in their independent HTTP API tasks.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Limit Backfill Sync

This PR transitions Lighthouse from syncing all the way back to genesis to only syncing back to the weak subjectivity point (~ 5 months) when syncing via a checkpoint sync.

There are a number of important points to note with this PR:

- Firstly and most importantly, this PR fundamentally shifts the default security guarantees of checkpoint syncing in Lighthouse. Prior to this PR, Lighthouse could verify the checkpoint of any given chain by ensuring the chain eventually terminates at the corresponding genesis. This guarantee can still be employed via the new CLI flag --genesis-backfill which will prompt lighthouse to the old behaviour of downloading all blocks back to genesis. The new behaviour only checks the proposer signatures for the last 5 months of blocks but cannot guarantee the chain matches the genesis chain.

- I have not modified any of the peer scoring or RPC responses. Clients syncing from gensis, will downscore new Lighthouse peers that do not possess blocks prior to the WSP. This is by design, as Lighthouse nodes of this form, need a mechanism to sort through peers in order to find useful peers in order to complete their genesis sync. We therefore do not discriminate between empty/error responses for blocks prior or post the local WSP. If we request a block that a peer does not posses, then fundamentally that peer is less useful to us than other peers.

- This will make a radical shift in that the majority of nodes will no longer store the full history of the chain. In the future we could add a pruning mechanism to remove old blocks from the db also.

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

Resolves#4061

## Proposed Changes

Adds a message to tell users to check their EE.

## Additional Info

I really struggled to come up with something succinct and complete, so I'm totally open to feedback.

## Issue Addressed

Add support for ipv6 and dual stack in lighthouse.

## Proposed Changes

From an user perspective, now setting an ipv6 address, optionally configuring the ports should feel exactly the same as using an ipv4 address. If listening over both ipv4 and ipv6 then the user needs to:

- use the `--listen-address` two times (ipv4 and ipv6 addresses)

- `--port6` becomes then required

- `--discovery-port6` can now be used to additionally configure the ipv6 udp port

### Rough list of code changes

- Discovery:

- Table filter and ip mode set to match the listening config.

- Ipv6 address, tcp port and udp port set in the ENR builder

- Reported addresses now check which tcp port to give to libp2p

- LH Network Service:

- Can listen over Ipv6, Ipv4, or both. This uses two sockets. Using mapped addresses is disabled from libp2p and it's the most compatible option.

- NetworkGlobals:

- No longer stores udp port since was not used at all. Instead, stores the Ipv4 and Ipv6 TCP ports.

- NetworkConfig:

- Update names to make it clear that previous udp and tcp ports in ENR were Ipv4

- Add fields to configure Ipv6 udp and tcp ports in the ENR

- Include advertised enr Ipv6 address.

- Add type to model Listening address that's either Ipv4, Ipv6 or both. A listening address includes the ip, udp port and tcp port.

- UPnP:

- Kept only for ipv4

- Cli flags:

- `--listen-addresses` now can take up to two values

- `--port` will apply to ipv4 or ipv6 if only one listening address is given. If two listening addresses are given it will apply only to Ipv4.

- `--port6` New flag required when listening over ipv4 and ipv6 that applies exclusively to Ipv6.

- `--discovery-port` will now apply to ipv4 and ipv6 if only one listening address is given.

- `--discovery-port6` New flag to configure the individual udp port of ipv6 if listening over both ipv4 and ipv6.

- `--enr-udp-port` Updated docs to specify that it only applies to ipv4. This is an old behaviour.

- `--enr-udp6-port` Added to configure the enr udp6 field.

- `--enr-tcp-port` Updated docs to specify that it only applies to ipv4. This is an old behaviour.

- `--enr-tcp6-port` Added to configure the enr tcp6 field.

- `--enr-addresses` now can take two values.

- `--enr-match` updated behaviour.

- Common:

- rename `unused_port` functions to specify that they are over ipv4.

- add functions to get unused ports over ipv6.

- Testing binaries

- Updated code to reflect network config changes and unused_port changes.

## Additional Info

TODOs:

- use two sockets in discovery. I'll get back to this and it's on https://github.com/sigp/discv5/pull/160

- lcli allow listening over two sockets in generate_bootnodes_enr

- add at least one smoke flag for ipv6 (I have tested this and works for me)

- update the book

## Issue Addressed

#4040

## Proposed Changes

- Add the `always_prefer_builder_payload` field to `Config` in `beacon_node/client/src/config.rs`.

- Add that same field to `Inner` in `beacon_node/execution_layer/src/lib.rs`

- Modify the logic for picking the payload in `beacon_node/execution_layer/src/lib.rs`

- Add the `always-prefer-builder-payload` flag to the beacon node CLI

- Test the new flags in `lighthouse/tests/beacon_node.rs`

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Proposed Changes

Allowing compiling without MDBX by running:

```bash

CARGO_INSTALL_EXTRA_FLAGS="--no-default-features" make

```

The reasons to do this are several:

- Save compilation time if the slasher won't be used

- Work around compilation errors in slasher backend dependencies (our pinned version of MDBX is currently not compiling on FreeBSD with certain compiler versions).

## Additional Info

When I opened this PR we were using resolver v1 which [doesn't disable default features in dependencies](https://doc.rust-lang.org/cargo/reference/features.html#resolver-version-2-command-line-flags), and `mdbx` is default for the `slasher` crate. Even after the resolver got changed to v2 in #3697 compiling with `--no-default-features` _still_ wasn't turning off the slasher crate's default features, so I added `default-features = false` in all the places we depend on it.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

* Remove CapellaReadiness::NotSynced

Some EEs have a habit of flipping between synced/not-synced, which causes some

spurious "Not read for the merge" messages back before the merge. For the

merge, if the EE wasn't synced the CE simple wouldn't go through the transition

(due to optimistic sync stuff). However, we don't have that hard requirement

for Capella; the CE will go through the fork and just wait for the EE to catch

up. I think that removing `NotSynced` here will avoid false-positives on the

"Not ready logs..". We'll be creating other WARN/ERRO logs if the EE isn't

synced, anyway.

* Change some Capella readiness logging

There's two changes here:

1. Shorten the log messages, for readability.

2. Change the hints.

Connecting a Capella-ready LH to a non-Capella-ready EE gives this log:

```

WARN Not ready for Capella info: The execution endpoint does not appear to support the required engine api methods for Capella: Required Methods Unsupported: engine_getPayloadV2 engine_forkchoiceUpdatedV2 engine_newPayloadV2, service: slot_notifier

```

This variant of error doesn't get a "try updating" style hint, when it's the

one that needs it. This is because we detect the method-not-found reponse from

the EE and return default capabilities, rather than indicating that the request

fails. I think it's fair to say that an EE upgrade is required whenever it

doesn't provide the required methods.

I changed the `ExchangeCapabilitiesFailed` message since that can only happen

when the EE fails to respond with anything other than success or not-found.

* Add first efforts at broadcast

* Tidy

* Move broadcast code to client

* Progress with broadcast impl

* Rename to address change

* Fix compile errors

* Use `while` loop

* Tidy

* Flip broadcast condition

* Switch to forgetting individual indices

* Always broadcast when the node starts

* Refactor into two functions

* Add testing

* Add another test

* Tidy, add more testing

* Tidy

* Add test, rename enum

* Rename enum again

* Tidy

* Break loop early

* Add V15 schema migration

* Bump schema version

* Progress with migration

* Update beacon_node/client/src/address_change_broadcast.rs

Co-authored-by: Michael Sproul <micsproul@gmail.com>

* Fix typo in function name

---------

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

NA

## Proposed Changes

Myself and others (#3678) have observed that when running with lots of validators (e.g., 1000s) the cardinality is too much for Prometheus. I've seen Prometheus instances just grind to a halt when we turn the validator monitor on for our testnet validators (we have 10,000s of Goerli validators). Additionally, the debug log volume can get very high with one log per validator, per attestation.

To address this, the `bn --validator-monitor-individual-tracking-threshold <INTEGER>` flag has been added to *disable* per-validator (i.e., non-aggregated) metrics/logging once the validator monitor exceeds the threshold of validators. The default value is `64`, which is a finger-to-the-wind value. I don't actually know the value at which Prometheus starts to become overwhelmed, but I've seen it work with ~64 validators and I've seen it *not* work with 1000s of validators. A default of `64` seems like it will result in a breaking change to users who are running millions of dollars worth of validators whilst resulting in a no-op for low-validator-count users. I'm open to changing this number, though.

Additionally, this PR starts collecting aggregated Prometheus metrics (e.g., total count of head hits across all validators), so that high-validator-count validators still have some interesting metrics. We already had logging for aggregated values, so nothing has been added there.

I've opted to make this a breaking change since it can be rather damaging to your Prometheus instance to accidentally enable the validator monitor with large numbers of validators. I've crashed a Prometheus instance myself and had a report from another user who's done the same thing.

## Additional Info

NA

## Breaking Changes Note

A new label has been added to the validator monitor Prometheus metrics: `total`. This label tracks the aggregated metrics of all validators in the validator monitor (as opposed to each validator being tracking individually using its pubkey as the label).

Additionally, a new flag has been added to the Beacon Node: `--validator-monitor-individual-tracking-threshold`. The default value is `64`, which means that when the validator monitor is tracking more than 64 validators then it will stop tracking per-validator metrics and only track the `all_validators` metric. It will also stop logging per-validator logs and only emit aggregated logs (the exception being that exit and slashing logs are always emitted).

These changes were introduced in #3728 to address issues with untenable Prometheus cardinality and log volume when using the validator monitor with high validator counts (e.g., 1000s of validators). Users with less than 65 validators will see no change in behavior (apart from the added `all_validators` metric). Users with more than 65 validators who wish to maintain the previous behavior can set something like `--validator-monitor-individual-tracking-threshold 999999`.

## Proposed Changes

With proposer boosting implemented (#2822) we have an opportunity to re-org out late blocks.

This PR adds three flags to the BN to control this behaviour:

* `--disable-proposer-reorgs`: turn aggressive re-orging off (it's on by default).

* `--proposer-reorg-threshold N`: attempt to orphan blocks with less than N% of the committee vote. If this parameter isn't set then N defaults to 20% when the feature is enabled.

* `--proposer-reorg-epochs-since-finalization N`: only attempt to re-org late blocks when the number of epochs since finalization is less than or equal to N. The default is 2 epochs, meaning re-orgs will only be attempted when the chain is finalizing optimally.

For safety Lighthouse will only attempt a re-org under very specific conditions:

1. The block being proposed is 1 slot after the canonical head, and the canonical head is 1 slot after its parent. i.e. at slot `n + 1` rather than building on the block from slot `n` we build on the block from slot `n - 1`.

2. The current canonical head received less than N% of the committee vote. N should be set depending on the proposer boost fraction itself, the fraction of the network that is believed to be applying it, and the size of the largest entity that could be hoarding votes.

3. The current canonical head arrived after the attestation deadline from our perspective. This condition was only added to support suppression of forkchoiceUpdated messages, but makes intuitive sense.

4. The block is being proposed in the first 2 seconds of the slot. This gives it time to propagate and receive the proposer boost.

## Additional Info

For the initial idea and background, see: https://github.com/ethereum/consensus-specs/pull/2353#issuecomment-950238004

There is also a specification for this feature here: https://github.com/ethereum/consensus-specs/pull/3034

Co-authored-by: Michael Sproul <micsproul@gmail.com>

Co-authored-by: pawan <pawandhananjay@gmail.com>

This PR adds some health endpoints for the beacon node and the validator client.

Specifically it adds the endpoint:

`/lighthouse/ui/health`

These are not entirely stable yet. But provide a base for modification for our UI.

These also may have issues with various platforms and may need modification.

## Issue Addressed

#3702

Which issue # does this PR address?

#3702

## Proposed Changes

Added checkpoint-sync-url-timeout flag to cli. Added timeout field to ClientGenesis::CheckpointSyncUrl to utilize timeout set

## Additional Info

Please provide any additional information. For example, future considerations

or information useful for reviewers.

Co-authored-by: GeemoCandama <104614073+GeemoCandama@users.noreply.github.com>

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

New lints for rust 1.65

## Proposed Changes

Notable change is the identification or parameters that are only used in recursion

## Additional Info

na

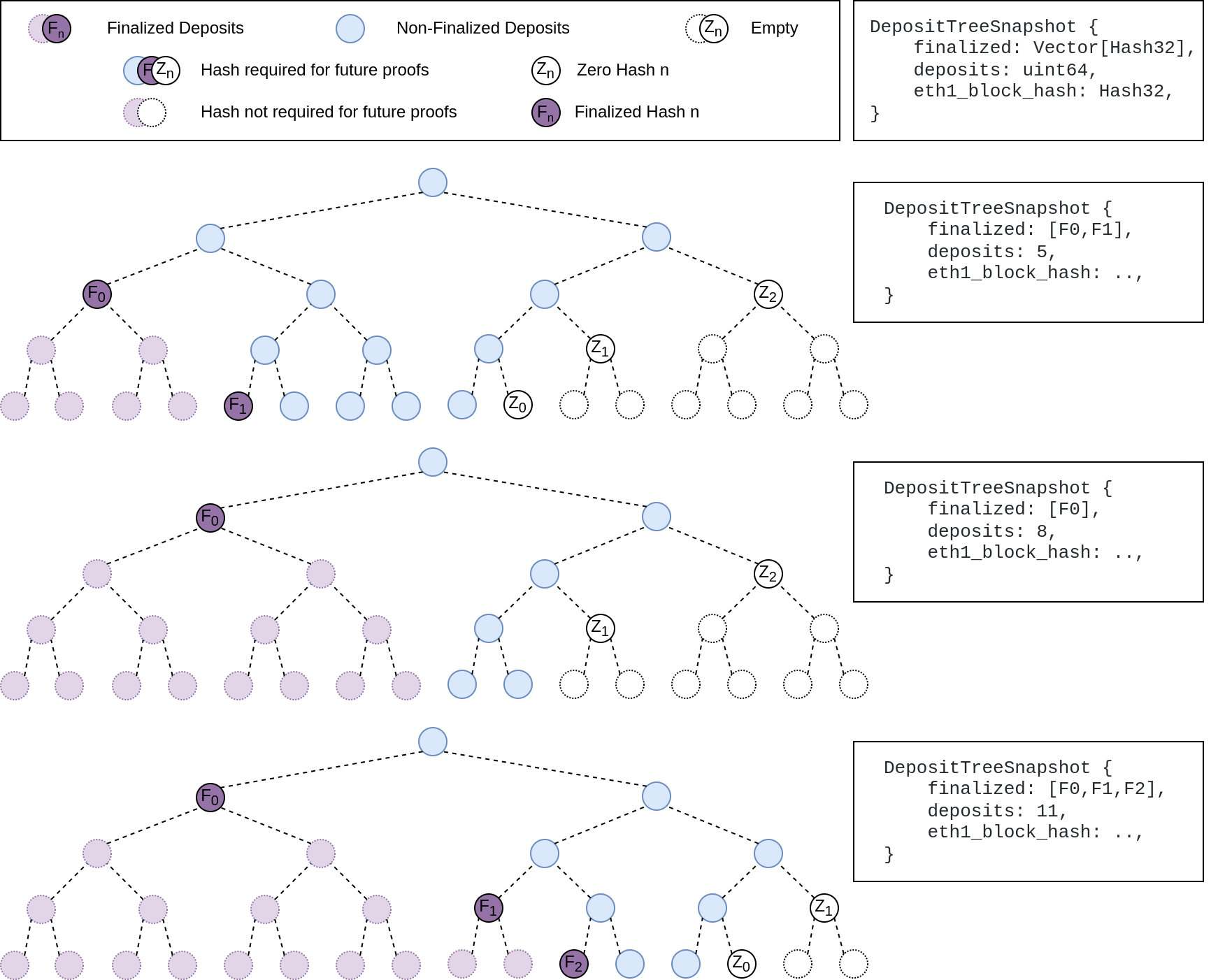

## Summary

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.

2. Significantly faster weak subjectivity sync as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.

3. Future proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444) as execution nodes will no longer be required to store logs permanently so we won't always have all historical logs available to us.

## More Details

Image to illustrate how the deposit contract merkle tree evolves and finalizes along with the resulting `DepositTreeSnapshot`

## Other Considerations

I've changed the structure of the `SszDepositCache` so once you load & save your database from this version of lighthouse, you will no longer be able to load it from older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

Overrides any previous option that enables the eth1 service.

Useful for operating a `light` beacon node.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

Adding CLI tests for logging flags: log-color and disable-log-timestamp

Which issue # does this PR address?

#3588

## Proposed Changes

Add CLI tests for logging flags as described in #3588

Please list or describe the changes introduced by this PR.

Added logger_config to client::Config as suggested. Implemented Default for LoggerConfig based on what was being done elsewhere in the repo. Created 2 tests for each flag addressed.

## Additional Info

Please provide any additional information. For example, future considerations

or information useful for reviewers.

## Issue Addressed

N/A

## Proposed Changes

With https://github.com/sigp/lighthouse/pull/3214 we made it such that you can either have 1 auth endpoint or multiple non auth endpoints. Now that we are post merge on all networks (testnets and mainnet), we cannot progress a chain without a dedicated auth execution layer connection so there is no point in having a non-auth eth1-endpoint for syncing deposit cache.

This code removes all fallback related code in the eth1 service. We still keep the single non-auth endpoint since it's useful for testing.

## Additional Info

This removes all eth1 fallback related metrics that were relevant for the monitoring service, so we might need to change the api upstream.

## Issue Addressed

NA

## Proposed Changes

As we've seen on Prater, there seems to be a correlation between these messages

```

WARN Not enough time for a discovery search subnet_id: ExactSubnet { subnet_id: SubnetId(19), slot: Slot(3742336) }, service: attestation_service

```

... and nodes falling 20-30 slots behind the head for short periods. These nodes are running ~20k Prater validators.

After running some metrics, I can see that the `network_recv` channel is processing ~250k `AttestationSubscribe` messages per minute. It occurred to me that perhaps the `AttestationSubscribe` messages are "washing out" the `SendRequest` and `SendResponse` messages. In this PR I separate the `AttestationSubscribe` and `SyncCommitteeSubscribe` messages into their own queue so the `tokio::select!` in the `NetworkService` can still process the other messages in the `network_recv` channel without necessarily having to clear all the subscription messages first.

~~I've also added filter to the HTTP API to prevent duplicate subscriptions going to the network service.~~

## Additional Info

- Currently being tested on Prater

## Issue Addressed

Partly resolves#3518

## Proposed Changes

Change the slot notifier to use `duration_to_next_slot` rather than an interval timer. This makes it robust against underlying clock changes.

## Issue Addressed

Fixes an issue whereby syncing a post-merge network without an execution endpoint would silently stall. Sync swallows the errors from block verification so previously there was no indication in the logs for why the node couldn't sync.

## Proposed Changes

Add an error log to the merge-readiness notifier for the case where the merge has already completed but no execution endpoint is configured.

## Issue Addressed

NA

## Proposed Changes

Start issuing merge-readiness logs 2 weeks before the Bellatrix fork epoch. Additionally, if the Bellatrix epoch is specified and the use has configured an EL, always log merge readiness logs, this should benefit pro-active users.

### Lookahead Reasoning

- Bellatrix fork is:

- epoch 144896

- slot 4636672

- Unix timestamp: `1606824023 + (4636672 * 12) = 1662464087`

- GMT: Tue Sep 06 2022 11:34:47 GMT+0000

- Warning start time is:

- Unix timestamp: `1662464087 - 604800 * 2 = 1661254487`

- GMT: Tue Aug 23 2022 11:34:47 GMT+0000

The [current expectation](https://discord.com/channels/595666850260713488/745077610685661265/1007445305198911569) is that EL and CL clients will releases out by Aug 22nd at the latest, then an EF announcement will go out on the 23rd. If all goes well, LH will start alerting users about merge-readiness just after the announcement.

## Additional Info

NA

## Issue Addressed

Fixes an issue identified by @remyroy whereby we were logging a recommendation to use `--eth1-endpoints` on merge-ready setups (when the execution layer was out of sync).

## Proposed Changes

I took the opportunity to clean up the other eth1-related logs, replacing "eth1" by "deposit contract" or "execution" as appropriate.

I've downgraded the severity of the `CRIT` log to `ERRO` and removed most of the recommendation text. The reason being that users lacking an execution endpoint will be informed by the new `WARN Not merge ready` log pre-Bellatrix, or the regular errors from block verification post-Bellatrix.

## Issue Addressed

Resolves#3249

## Proposed Changes

Log merge related parameters and EE status in the beacon notifier before the merge.

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

N/A

## Proposed Changes

Since Rust 1.62, we can use `#[derive(Default)]` on enums. ✨https://blog.rust-lang.org/2022/06/30/Rust-1.62.0.html#default-enum-variants

There are no changes to functionality in this PR, just replaced the `Default` trait implementation with `#[derive(Default)]`.

## Overview

This rather extensive PR achieves two primary goals:

1. Uses the finalized/justified checkpoints of fork choice (FC), rather than that of the head state.

2. Refactors fork choice, block production and block processing to `async` functions.

Additionally, it achieves:

- Concurrent forkchoice updates to the EL and cache pruning after a new head is selected.

- Concurrent "block packing" (attestations, etc) and execution payload retrieval during block production.

- Concurrent per-block-processing and execution payload verification during block processing.

- The `Arc`-ification of `SignedBeaconBlock` during block processing (it's never mutated, so why not?):

- I had to do this to deal with sending blocks into spawned tasks.

- Previously we were cloning the beacon block at least 2 times during each block processing, these clones are either removed or turned into cheaper `Arc` clones.

- We were also `Box`-ing and un-`Box`-ing beacon blocks as they moved throughout the networking crate. This is not a big deal, but it's nice to avoid shifting things between the stack and heap.

- Avoids cloning *all the blocks* in *every chain segment* during sync.

- It also has the potential to clean up our code where we need to pass an *owned* block around so we can send it back in the case of an error (I didn't do much of this, my PR is already big enough 😅)

- The `BeaconChain::HeadSafetyStatus` struct was removed. It was an old relic from prior merge specs.

For motivation for this change, see https://github.com/sigp/lighthouse/pull/3244#issuecomment-1160963273

## Changes to `canonical_head` and `fork_choice`

Previously, the `BeaconChain` had two separate fields:

```

canonical_head: RwLock<Snapshot>,

fork_choice: RwLock<BeaconForkChoice>

```

Now, we have grouped these values under a single struct:

```

canonical_head: CanonicalHead {

cached_head: RwLock<Arc<Snapshot>>,

fork_choice: RwLock<BeaconForkChoice>

}

```

Apart from ergonomics, the only *actual* change here is wrapping the canonical head snapshot in an `Arc`. This means that we no longer need to hold the `cached_head` (`canonical_head`, in old terms) lock when we want to pull some values from it. This was done to avoid deadlock risks by preventing functions from acquiring (and holding) the `cached_head` and `fork_choice` locks simultaneously.

## Breaking Changes

### The `state` (root) field in the `finalized_checkpoint` SSE event

Consider the scenario where epoch `n` is just finalized, but `start_slot(n)` is skipped. There are two state roots we might in the `finalized_checkpoint` SSE event:

1. The state root of the finalized block, which is `get_block(finalized_checkpoint.root).state_root`.

4. The state root at slot of `start_slot(n)`, which would be the state from (1), but "skipped forward" through any skip slots.

Previously, Lighthouse would choose (2). However, we can see that when [Teku generates that event](de2b2801c8/data/beaconrestapi/src/main/java/tech/pegasys/teku/beaconrestapi/handlers/v1/events/EventSubscriptionManager.java (L171-L182)) it uses [`getStateRootFromBlockRoot`](de2b2801c8/data/provider/src/main/java/tech/pegasys/teku/api/ChainDataProvider.java (L336-L341)) which uses (1).

I have switched Lighthouse from (2) to (1). I think it's a somewhat arbitrary choice between the two, where (1) is easier to compute and is consistent with Teku.

## Notes for Reviewers

I've renamed `BeaconChain::fork_choice` to `BeaconChain::recompute_head`. Doing this helped ensure I broke all previous uses of fork choice and I also find it more descriptive. It describes an action and can't be confused with trying to get a reference to the `ForkChoice` struct.

I've changed the ordering of SSE events when a block is received. It used to be `[block, finalized, head]` and now it's `[block, head, finalized]`. It was easier this way and I don't think we were making any promises about SSE event ordering so it's not "breaking".

I've made it so fork choice will run when it's first constructed. I did this because I wanted to have a cached version of the last call to `get_head`. Ensuring `get_head` has been run *at least once* means that the cached values doesn't need to wrapped in an `Option`. This was fairly simple, it just involved passing a `slot` to the constructor so it knows *when* it's being run. When loading a fork choice from the store and a slot clock isn't handy I've just used the `slot` that was saved in the `fork_choice_store`. That seems like it would be a faithful representation of the slot when we saved it.

I added the `genesis_time: u64` to the `BeaconChain`. It's small, constant and nice to have around.

Since we're using FC for the fin/just checkpoints, we no longer get the `0x00..00` roots at genesis. You can see I had to remove a work-around in `ef-tests` here: b56be3bc2. I can't find any reason why this would be an issue, if anything I think it'll be better since the genesis-alias has caught us out a few times (0x00..00 isn't actually a real root). Edit: I did find a case where the `network` expected the 0x00..00 alias and patched it here: 3f26ac3e2.

You'll notice a lot of changes in tests. Generally, tests should be functionally equivalent. Here are the things creating the most diff-noise in tests:

- Changing tests to be `tokio::async` tests.

- Adding `.await` to fork choice, block processing and block production functions.

- Refactor of the `canonical_head` "API" provided by the `BeaconChain`. E.g., `chain.canonical_head.cached_head()` instead of `chain.canonical_head.read()`.

- Wrapping `SignedBeaconBlock` in an `Arc`.

- In the `beacon_chain/tests/block_verification`, we can't use the `lazy_static` `CHAIN_SEGMENT` variable anymore since it's generated with an async function. We just generate it in each test, not so efficient but hopefully insignificant.

I had to disable `rayon` concurrent tests in the `fork_choice` tests. This is because the use of `rayon` and `block_on` was causing a panic.

Co-authored-by: Mac L <mjladson@pm.me>

## Issue Addressed

This PR is a subset of the changes in #3134. Unstable will still not function correctly with the new builder spec once this is merged, #3134 should be used on testnets

## Proposed Changes

- Removes redundancy in "builders" (servers implementing the builder spec)

- Renames `payload-builder` flag to `builder`

- Moves from old builder RPC API to new HTTP API, but does not implement the validator registration API (implemented in https://github.com/sigp/lighthouse/pull/3194)

Co-authored-by: sean <seananderson33@gmail.com>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Resolves#3069

## Proposed Changes

Unify the `eth1-endpoints` and `execution-endpoints` flags in a backwards compatible way as described in https://github.com/sigp/lighthouse/issues/3069#issuecomment-1134219221

Users have 2 options:

1. Use multiple non auth execution endpoints for deposit processing pre-merge

2. Use a single jwt authenticated execution endpoint for both execution layer and deposit processing post merge

Related https://github.com/sigp/lighthouse/issues/3118

To enable jwt authenticated deposit processing, this PR removes the calls to `net_version` as the `net` namespace is not exposed in the auth server in execution clients.

Moving away from using `networkId` is a good step in my opinion as it doesn't provide us with any added guarantees over `chainId`. See https://github.com/ethereum/consensus-specs/issues/2163 and https://github.com/sigp/lighthouse/issues/2115

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

NA

## Proposed Changes

I used these logs when debugging a spurious failure with Infura and thought they might be nice to have around permanently.

There's no changes to functionality in this PR, just some additional `debug!` logs.

## Additional Info

NA

## Issue Addressed

Fixes a timing issue that results in spurious fork choice notifier failures:

```

WARN Error signalling fork choice waiter slot: 3962270, error: ForkChoiceSignalOutOfOrder { current: Slot(3962271), latest: Slot(3962270) }, service: beacon

```

There’s a fork choice run that is scheduled to run at the start of every slot by the `timer`, which creates a 12s interval timer when the beacon node starts up. The problem is that if there’s a bit of clock drift that gets corrected via NTP (or a leap second for that matter) then these 12s intervals will cease to line up with the start of the slot. This then creates the mismatch in slot number that we see above.

Lighthouse also runs fork choice 500ms before the slot begins, and these runs are what is conflicting with the start-of-slot runs. This means that the warning in current versions of Lighthouse is mostly cosmetic because fork choice is up to date with all but the most recent 500ms of attestations (which usually isn’t many).

## Proposed Changes

Fix the per-slot timer so that it continually re-calculates the duration to the start of the next slot and waits for that.

A side-effect of this change is that we may skip slots if the per-slot task takes >12s to run, but I think this is an unlikely scenario and an acceptable compromise.

# Description

Since the `TaskExecutor` currently requires a `Weak<Runtime>`, it's impossible to use it in an async test where the `Runtime` is created outside our scope. Whilst we *could* create a new `Runtime` instance inside the async test, dropping that `Runtime` would cause a panic (you can't drop a `Runtime` in an async context).

To address this issue, this PR creates the `enum Handle`, which supports either:

- A `Weak<Runtime>` (for use in our production code)

- A `Handle` to a runtime (for use in testing)

In theory, there should be no change to the behaviour of our production code (beyond some slightly different descriptions in HTTP 500 errors), or even our tests. If there is no change, you might ask *"why bother?"*. There are two PRs (#3070 and #3175) that are waiting on these fixes to introduce some new tests. Since we've added the EL to the `BeaconChain` (for the merge), we are now doing more async stuff in tests.

I've also added a `RuntimeExecutor` to the `BeaconChainTestHarness`. Whilst that's not immediately useful, it will become useful in the near future with all the new async testing.

Code simplifications using `Option`/`Result` combinators to make pattern-matches a tad simpler.

Opinions on these loosely held, happy to adjust in review.

Tool-aided by [comby-rust](https://github.com/huitseeker/comby-rust).

## Proposed Changes

I did some gardening 🌳 in our dependency tree:

- Remove duplicate versions of `warp` (git vs patch)

- Remove duplicate versions of lots of small deps: `cpufeatures`, `ethabi`, `ethereum-types`, `bitvec`, `nix`, `libsecp256k1`.

- Update MDBX (should resolve#3028). I tested and Lighthouse compiles on Windows 11 now.

- Restore `psutil` back to upstream

- Make some progress updating everything to rand 0.8. There are a few crates stuck on 0.7.

Hopefully this puts us on a better footing for future `cargo audit` issues, and improves compile times slightly.

## Additional Info

Some crates are held back by issues with `zeroize`. libp2p-noise depends on [`chacha20poly1305`](https://crates.io/crates/chacha20poly1305) which depends on zeroize < v1.5, and we can only have one version of zeroize because it's post 1.0 (see https://github.com/rust-lang/cargo/issues/6584). The latest version of `zeroize` is v1.5.4, which is used by the new versions of many other crates (e.g. `num-bigint-dig`). Once a new version of chacha20poly1305 is released we can update libp2p-noise and upgrade everything to the latest `zeroize` version.

I've also opened a PR to `blst` related to zeroize: https://github.com/supranational/blst/pull/111

## Proposed Changes

Add a `lighthouse db` command with three initial subcommands:

- `lighthouse db version`: print the database schema version.

- `lighthouse db migrate --to N`: manually upgrade (or downgrade!) the database to a different version.

- `lighthouse db inspect --column C`: log the key and size in bytes of every value in a given `DBColumn`.

This PR lays the groundwork for other changes, namely:

- Mark's fast-deposit sync (https://github.com/sigp/lighthouse/pull/2915), for which I think we should implement a database downgrade (from v9 to v8).

- My `tree-states` work, which already implements a downgrade (v10 to v8).

- Standalone purge commands like `lighthouse db purge-dht` per https://github.com/sigp/lighthouse/issues/2824.

## Additional Info

I updated the `strum` crate to 0.24.0, which necessitated some changes in the network code to remove calls to deprecated methods.

Thanks to @winksaville for the motivation, and implementation work that I used as a source of inspiration (https://github.com/sigp/lighthouse/pull/2685).

## Issue Addressed

Don't send an fcU message at startup if it's pre-genesis. The startup fcU message is not critical, not required by the spec, so it's fine to avoid it for networks that start post-Bellatrix fork.

## Issue Addressed

Resolves#2936

## Proposed Changes

Adds functionality for calling [`validator/prepare_beacon_proposer`](https://ethereum.github.io/beacon-APIs/?urls.primaryName=dev#/Validator/prepareBeaconProposer) in advance.

There is a `BeaconChain::prepare_beacon_proposer` method which, which called, computes the proposer for the next slot. If that proposer has been registered via the `validator/prepare_beacon_proposer` API method, then the `beacon_chain.execution_layer` will be provided the `PayloadAttributes` for us in all future forkchoiceUpdated calls. An artificial forkchoiceUpdated call will be created 4s before each slot, when the head updates and when a validator updates their information.

Additionally, I added strict ordering for calls from the `BeaconChain` to the `ExecutionLayer`. I'm not certain the `ExecutionLayer` will always maintain this ordering, but it's a good start to have consistency from the `BeaconChain`. There are some deadlock opportunities introduced, they are documented in the code.

## Additional Info

- ~~Blocked on #2837~~

Co-authored-by: realbigsean <seananderson33@GMAIL.com>

## Issue Addressed

Resolves#3015

## Proposed Changes

Add JWT token based authentication to engine api requests. The jwt secret key is read from the provided file and is used to sign tokens that are used for authenticated communication with the EL node.

- [x] Interop with geth (synced `merge-devnet-4` with the `merge-kiln-v2` branch on geth)

- [x] Interop with other EL clients (nethermind on `merge-devnet-4`)

- [x] ~Implement `zeroize` for jwt secrets~

- [x] Add auth server tests with `mock_execution_layer`

- [x] Get auth working with the `execution_engine_integration` tests

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

NA

## Proposed Changes

Adds the functionality to allow blocks to be validated/invalidated after their import as per the [optimistic sync spec](https://github.com/ethereum/consensus-specs/blob/dev/sync/optimistic.md#how-to-optimistically-import-blocks). This means:

- Updating `ProtoArray` to allow flipping the `execution_status` of ancestors/descendants based on payload validity updates.

- Creating separation between `execution_layer` and the `beacon_chain` by creating a `PayloadStatus` struct.

- Refactoring how the `execution_layer` selects a `PayloadStatus` from the multiple statuses returned from multiple EEs.

- Adding testing framework for optimistic imports.

- Add `ExecutionBlockHash(Hash256)` new-type struct to avoid confusion between *beacon block roots* and *execution payload hashes*.

- Add `merge` to [`FORKS`](c3a793fd73/Makefile (L17)) in the `Makefile` to ensure we test the beacon chain with merge settings.

- Fix some tests here that were failing due to a missing execution layer.

## TODO

- [ ] Balance tests

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

## Proposed Changes

Lots of lint updates related to `flat_map`, `unwrap_or_else` and string patterns. I did a little more creative refactoring in the op pool, but otherwise followed Clippy's suggestions.

## Additional Info

We need this PR to unblock CI.

## Issue Addressed

NA

## Proposed Changes

This PR extends #3018 to address my review comments there and add automated integration tests with Geth (and other implementations, in the future).

I've also de-duplicated the "unused port" logic by creating an `common/unused_port` crate.

## Additional Info

I'm not sure if we want to merge this PR, or update #3018 and merge that. I don't mind, I'm primarily opening this PR to make sure CI works.

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

## Issue Addressed

Lighthouse gossiping late messages

## Proposed Changes

Point LH to our fork using tokio interval, which 1) works as expected 2) is more performant than the previous version that actually worked as expected

Upgrade libp2p

## Additional Info

https://github.com/libp2p/rust-libp2p/issues/2497