## Proposed Changes

Mitigate the fork choice attacks described in [_Three Attacks on Proof-of-Stake Ethereum_](https://arxiv.org/abs/2110.10086) by enabling proposer boost @ 70% on mainnet.

Proposer boost has been running with stability on Prater for a few months now, and is safe to roll out gradually on mainnet. I'll argue that the financial impact of rolling out gradually is also minimal.

Consider how a proposer-boosted validator handles two types of re-orgs:

## Ex ante re-org (from the paper)

In the mitigated attack, a malicious proposer releases their block at slot `n + 1` late so that it re-orgs the block at the slot _after_ them (at slot `n + 2`). Non-boosting validators will follow this re-org and vote for block `n + 1` in slot `n + 2`. Boosted validators will vote for `n + 2`. If the boosting validators are outnumbered, there'll be a re-org to the malicious block from `n + 1` and validators applying the boost will have their slot `n + 2` attestations miss head (and target on an epoch boundary). Note that all the attesters from slot `n + 1` are doomed to lose their head vote rewards, but this is the same regardless of boosting.

Therefore, Lighthouse nodes stand to miss slightly more head votes than other nodes if they are in the minority while applying the proposer boost. Once the proposer boost nodes gain a majority, this trend reverses.

## Ex post re-org (using the boost)

The other type of re-org is an ex post re-org using the strategy described here: https://github.com/sigp/lighthouse/pull/2860. With this strategy, boosted nodes will follow the attempted re-org and again lose a head vote if the re-org is unsuccessful. Once boosting is widely adopted, the re-orgs will succeed and the non-boosting validators will lose out.

I don't think there are (m)any validators applying this strategy, because it is irrational to attempt it before boosting is widely adopted. Therefore I think we can safely ignore this possibility.

## Risk Assessment

From observing re-orgs on mainnet I don't think ex ante re-orgs are very common. I've observed around 1 per day for the last month on my node (see: https://gist.github.com/michaelsproul/3b2142fa8fe0ff767c16553f96959e8c), compared to 2.5 ex post re-orgs per day.

Given one extra slot per day where attesting will cause a missed head vote, each individual validator has a 1/32 chance of being assigned to that slot. So we have an increase of 1/32 missed head votes per validator per day in expectation. Given that we currently see ~7 head vote misses per validator per day due to late/missing blocks (and re-orgs), this represents only a (1/32)/7 = 0.45% increase in missed head votes in expectation. I believe this is so small that we shouldn't worry about it. Particularly as getting proposer boost deployed is good for network health and may enable us to drive down the number of late blocks over time (which will decrease head vote misses).

## TL;DR

Enable proposer boost now and release ASAP, as financial downside is a 0.45% increase in missed head votes until widespread adoption.

## Issue Addressed

MEV boost compatibility

## Proposed Changes

See #2987

## Additional Info

This is blocked on the stabilization of a couple specs, [here](https://github.com/ethereum/beacon-APIs/pull/194) and [here](https://github.com/flashbots/mev-boost/pull/20).

Additional TODO's and outstanding questions

- [ ] MEV boost JWT Auth

- [ ] Will `builder_proposeBlindedBlock` return the revealed payload for the BN to propogate

- [ ] Should we remove `private-tx-proposals` flag and communicate BN <> VC with blinded blocks by default once these endpoints enter the beacon-API's repo? This simplifies merge transition logic.

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

#3073

## Proposed Changes

Add around `SAFE_SLOTS_TO_IMPORT_OPTIMISTICALLY` in the API

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

This address an issue which was preventing checkpoint-sync.

When the node starts from checkpoint sync, the head block and the finalized block are the same value. We did not respect this when sending a `forkchoiceUpdated` (fcU) call to the EL and were expecting fork choice to hold the *finalized ancestor of the head* and returning an error when it didn't.

This PR uses *only fork choice* for sending fcU updates. This is actually quite nice and avoids some atomicity issues between `chain.canonical_head` and `chain.fork_choice`. Now, whenever `chain.fork_choice.get_head` returns a value we also cache the values required for the next fcU call.

## TODO

- [x] ~~Blocked on #3043~~

- [x] Ensure there isn't a warn message at startup.

## Issue Addressed

As discussed on last-night's consensus call, the testnets next week will target the [Kiln Spec v2](https://hackmd.io/@n0ble/kiln-spec).

Presently, we support Kiln V1. V2 is backwards compatible, except for renaming `random` to `prev_randao` in:

- https://github.com/ethereum/execution-apis/pull/180

- https://github.com/ethereum/consensus-specs/pull/2835

With this PR we'll no longer be compatible with the existing Kintsugi and Kiln testnets, however we'll be ready for the testnets next week. I raised this breaking change in the call last night, we are all keen to move forward and break things.

We now target the [`merge-kiln-v2`](https://github.com/MariusVanDerWijden/go-ethereum/tree/merge-kiln-v2) branch for interop with Geth. This required adding the `--http.aauthport` to the tester to avoid a port conflict at startup.

### Changes to exec integration tests

There's some change in the `merge-kiln-v2` version of Geth that means it can't compile on a vanilla Github runner. Bumping the `go` version on the runner solved this issue.

Whilst addressing this, I refactored the `testing/execution_integration` crate to be a *binary* rather than a *library* with tests. This means that we don't need to run the `build.rs` and build Geth whenever someone runs `make lint` or `make test-release`. This is nice for everyday users, but it's also nice for CI so that we can have a specific runner for these tests and we don't need to ensure *all* runners support everything required to build all execution clients.

## More Info

- [x] ~~EF tests are failing since the rename has broken some tests that reference the old field name. I have been told there will be new tests released in the coming days (25/02/22 or 26/02/22).~~

## Description

This PR adds a single, trivial commit (f5d2b27d78349d5a675a2615eba42cc9ae708094) atop #2986 to resolve a tests compile error. The original author (@ethDreamer) is AFK so I'm getting this one merged ☺️

Please see #2986 for more information about the other, significant changes in this PR.

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

## Issue Addressed

NA

## Proposed Changes

Adds the functionality to allow blocks to be validated/invalidated after their import as per the [optimistic sync spec](https://github.com/ethereum/consensus-specs/blob/dev/sync/optimistic.md#how-to-optimistically-import-blocks). This means:

- Updating `ProtoArray` to allow flipping the `execution_status` of ancestors/descendants based on payload validity updates.

- Creating separation between `execution_layer` and the `beacon_chain` by creating a `PayloadStatus` struct.

- Refactoring how the `execution_layer` selects a `PayloadStatus` from the multiple statuses returned from multiple EEs.

- Adding testing framework for optimistic imports.

- Add `ExecutionBlockHash(Hash256)` new-type struct to avoid confusion between *beacon block roots* and *execution payload hashes*.

- Add `merge` to [`FORKS`](c3a793fd73/Makefile (L17)) in the `Makefile` to ensure we test the beacon chain with merge settings.

- Fix some tests here that were failing due to a missing execution layer.

## TODO

- [ ] Balance tests

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

## Proposed Changes

Lots of lint updates related to `flat_map`, `unwrap_or_else` and string patterns. I did a little more creative refactoring in the op pool, but otherwise followed Clippy's suggestions.

## Additional Info

We need this PR to unblock CI.

## Issue Addressed

Alternative to #2935

## Proposed Changes

Replace the `Vec<u8>` inside `Bitfield` with a `SmallVec<[u8; 32>`. This eliminates heap allocations for attestation bitfields until we reach 500K validators, at which point we can consider increasing `SMALLVEC_LEN` to 40 or 48.

While running Lighthouse under `heaptrack` I found that SSZ encoding and decoding of bitfields corresponded to 22% of all allocations by count. I've confirmed that with this change applied those allocations disappear entirely.

## Additional Info

We can win another 8 bytes of space by using `smallvec`'s [`union` feature](https://docs.rs/smallvec/1.8.0/smallvec/#union), although I might leave that for a future PR because I don't know how experimental that feature is and whether it uses some spicy `unsafe` blocks.

## Issue Addressed

Closes#3014

## Proposed Changes

- Rename `receipt_root` to `receipts_root`

- Rename `execute_payload` to `notify_new_payload`

- This is slightly weird since we modify everything except the actual HTTP call to the engine API. That change is expected to be implemented in #2985 (cc @ethDreamer)

- Enable "random" tests for Bellatrix.

## Notes

This will break *partially* compatibility with Kintusgi testnets in order to gain compatibility with [Kiln](https://hackmd.io/@n0ble/kiln-spec) testnets. I think it will only break the BN APIs due to the `receipts_root` change, however it might have some other effects too.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

#2883

## Proposed Changes

* Added `suggested-fee-recipient` & `suggested-fee-recipient-file` flags to validator client (similar to graffiti / graffiti-file implementation).

* Added proposer preparation service to VC, which sends the fee-recipient of all known validators to the BN via [/eth/v1/validator/prepare_beacon_proposer](https://github.com/ethereum/beacon-APIs/pull/178) api once per slot

* Added [/eth/v1/validator/prepare_beacon_proposer](https://github.com/ethereum/beacon-APIs/pull/178) api endpoint and preparation data caching

* Added cleanup routine to remove cached proposer preparations when not updated for 2 epochs

## Additional Info

Changed the Implementation following the discussion in #2883.

Co-authored-by: pk910 <philipp@pk910.de>

Co-authored-by: Paul Hauner <paul@paulhauner.com>

Co-authored-by: Philipp K <philipp@pk910.de>

## Issue Addressed

N/A

## Proposed Changes

https://github.com/sigp/lighthouse/pull/2940 introduced a bug where we parsed the uint256 terminal total difficulty as a hex string instead of a decimal string. Fixes the bug and adds tests.

## Issue Addressed

Implements the standard key manager API from https://ethereum.github.io/keymanager-APIs/, formerly https://github.com/ethereum/beacon-APIs/pull/151

Related to https://github.com/sigp/lighthouse/issues/2557

## Proposed Changes

- [x] Add all of the new endpoints from the standard API: GET, POST and DELETE.

- [x] Add a `validators.enabled` column to the slashing protection database to support atomic disable + export.

- [x] Add tests for all the common sequential accesses of the API

- [x] Add tests for interactions with remote signer validators

- [x] Add end-to-end tests for migration of validators from one VC to another

- [x] Implement the authentication scheme from the standard (token bearer auth)

## Additional Info

The `enabled` column in the validators SQL database is necessary to prevent a race condition when exporting slashing protection data. Without the slashing protection database having a way of knowing that a key has been disabled, a concurrent request to sign a message could insert a new record into the database. The `delete_concurrent_with_signing` test exercises this code path, and was indeed failing before the `enabled` column was added.

The validator client authentication has been modified from basic auth to bearer auth, with basic auth preserved for backwards compatibility.

## Proposed Changes

Add a new hardcoded spec for the Gnosis Beacon Chain.

Ideally, official Lighthouse executables will be able to connect to the gnosis beacon chain from now on, using `--network gnosis` CLI option.

## Issue Addressed

Continuation to #2934

## Proposed Changes

Currently, we have the transition fields in the config (`TERMINAL_TOTAL_DIFFICULTY`, `TERMINAL_BLOCK_HASH` and `TERMINAL_BLOCK_HASH_ACTIVATION_EPOCH`) as mandatory fields.

This is causing compatibility issues with other client BN's (nimbus and teku v22.1.0) which don't return these fields on a `eth/v1/config/spec` api call. Since we don't use this values until the merge, I think it's okay to have default values set for these fields as well to ensure compatibility.

## Issue Addressed

#2900

## Proposed Changes

Change type of extra_fields in ConfigAndPreset so it can contain non string values (inside serde_json::Value)

## Proposed Changes

Restore compatibility with beacon nodes using the `MERGE` naming by:

1. Adding defaults for the Bellatrix `Config` fields

2. Not attempting to read (or serve) the Bellatrix preset on `/config/spec`.

I've confirmed that this works with Infura, and just logs a warning:

```

Jan 20 10:51:31.078 INFO Connected to beacon node endpoint: https://eth2-beacon-mainnet.infura.io/, version: teku/v22.1.0/linux-x86_64/-eclipseadoptium-openjdk64bitservervm-java-17

Jan 20 10:51:31.344 WARN Beacon node config does not match exactly, advice: check that the BN is updated and configured for any upcoming forks, endpoint: https://eth2-beacon-mainnet.infura.io/

Jan 20 10:51:31.344 INFO Initialized beacon node connections available: 1, total: 1

```

## Proposed Changes

Change the canonical fork name for the merge to Bellatrix. Keep other merge naming the same to avoid churn.

I've also fixed and enabled the `fork` and `transition` tests for Bellatrix, and the v1.1.7 fork choice tests.

Additionally, the `BellatrixPreset` has been added with tests. It gets served via the `/config/spec` API endpoint along with the other presets.

## Proposed Changes

Allocate less memory in sync by hashing the `SignedBeaconBlock`s in a batch directly, rather than going via SSZ bytes.

Credit to @paulhauner for finding this source of temporary allocations.

## Issue Addressed

Restore compatibility between Lighthouse v2.0.1 VC and `unstable` BN in preparation for the next release.

## Proposed Changes

* Don't serialize the `PROPOSER_SCORE_BOOST` as `null` because it breaks the `extra_fields: HashMap<String, String>` used by the v2.0.1 VC.

## Proposed Changes

Update `superstruct` to bring in @realbigsean's fixes necessary for MEV-compatible private beacon block types (a la #2795).

The refactoring is due to another change in superstruct that allows partial getters to be auto-generated.

## Issue Addressed

Successor to #2431

## Proposed Changes

* Add a `BlockReplayer` struct to abstract over the intricacies of calling `per_slot_processing` and `per_block_processing` while avoiding unnecessary tree hashing.

* Add a variant of the forwards state root iterator that does not require an `end_state`.

* Use the `BlockReplayer` when reconstructing states in the database. Use the efficient forwards iterator for frozen states.

* Refactor the iterators to remove `Arc<HotColdDB>` (this seems to be neater than making _everything_ an `Arc<HotColdDB>` as I did in #2431).

Supplying the state roots allow us to avoid building a tree hash cache at all when reconstructing historic states, which saves around 1 second flat (regardless of `slots-per-restore-point`). This is a small percentage of worst-case state load times with 200K validators and SPRP=2048 (~15s vs ~16s) but a significant speed-up for more frequent restore points: state loads with SPRP=32 should be now consistently <500ms instead of 1.5s (a ~3x speedup).

## Additional Info

Required by https://github.com/sigp/lighthouse/pull/2628

## Issue Addressed

Resolves: https://github.com/sigp/lighthouse/issues/2741

Includes: https://github.com/sigp/lighthouse/pull/2853 so that we can get ssz static tests passing here on v1.1.6. If we want to merge that first, we can make this diff slightly smaller

## Proposed Changes

- Changes the `justified_epoch` and `finalized_epoch` in the `ProtoArrayNode` each to an `Option<Checkpoint>`. The `Option` is necessary only for the migration, so not ideal. But does allow us to add a default logic to `None` on these fields during the database migration.

- Adds a database migration from a legacy fork choice struct to the new one, search for all necessary block roots in fork choice by iterating through blocks in the db.

- updates related to https://github.com/ethereum/consensus-specs/pull/2727

- We will have to update the persisted forkchoice to make sure the justified checkpoint stored is correct according to the updated fork choice logic. This boils down to setting the forkchoice store's justified checkpoint to the justified checkpoint of the block that advanced the finalized checkpoint to the current one.

- AFAICT there's no migration steps necessary for the update to allow applying attestations from prior blocks, but would appreciate confirmation on that

- I updated the consensus spec tests to v1.1.6 here, but they will fail until we also implement the proposer score boost updates. I confirmed that the previously failing scenario `new_finalized_slot_is_justified_checkpoint_ancestor` will now pass after the boost updates, but haven't confirmed _all_ tests will pass because I just quickly stubbed out the proposer boost test scenario formatting.

- This PR now also includes proposer boosting https://github.com/ethereum/consensus-specs/pull/2730

## Additional Info

I realized checking justified and finalized roots in fork choice makes it more likely that we trigger this bug: https://github.com/ethereum/consensus-specs/pull/2727

It's possible the combination of justified checkpoint and finalized checkpoint in the forkchoice store is different from in any block in fork choice. So when trying to startup our store's justified checkpoint seems invalid to the rest of fork choice (but it should be valid). When this happens we get an `InvalidBestNode` error and fail to start up. So I'm including that bugfix in this branch.

Todo:

- [x] Fix fork choice tests

- [x] Self review

- [x] Add fix for https://github.com/ethereum/consensus-specs/pull/2727

- [x] Rebase onto Kintusgi

- [x] Fix `num_active_validators` calculation as @michaelsproul pointed out

- [x] Clean up db migrations

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

N/A

## Proposed Changes

Changes required for the `merge-devnet-3`. Added some more non substantive renames on top of @realbigsean 's commit.

Note: this doesn't include the proposer boosting changes in kintsugi v3.

This devnet isn't running with the proposer boosting fork choice changes so if we are looking to merge https://github.com/sigp/lighthouse/pull/2822 into `unstable`, then I think we should just maintain this branch for the devnet temporarily.

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

New rust lints

## Proposed Changes

- Boxing some enum variants

- removing some unused fields (is the validator lockfile unused? seemed so to me)

## Additional Info

- some error fields were marked as dead code but are logged out in areas

- left some dead fields in our ef test code because I assume they are useful for debugging?

Co-authored-by: realbigsean <seananderson33@gmail.com>

Fix max packet sizes

Fix max_payload_size function

Add merge block test

Fix max size calculation; fix up test

Clear comments

Add a payload_size_function

Use safe arith for payload calculation

Return an error if block too big in block production

Separate test to check if block is over limit

* Fix fork choice after rebase

* Remove paulhauner warp dep

* Fix fork choice test compile errors

* Assume fork choice payloads are valid

* Add comment

* Ignore new tests

* Fix error in test skipping

* Change base_fee_per_gas to Uint256

* Add custom (de)serialization to ExecutionPayload

* Fix errors

* Add a quoted_u256 module

* Remove unused function

* lint

* Add test

* Remove extra line

Co-authored-by: Paul Hauner <paul@paulhauner.com>

* Fix arbitrary check kintsugi

* Add merge chain spec fields, and a function to determine which constant to use based on the state variant

* increment spec test version

* Remove `Transaction` enum wrapper

* Remove Transaction new-type

* Remove gas validations

* Add `--terminal-block-hash-epoch-override` flag

* Increment spec tests version to 1.1.5

* Remove extraneous gossip verification https://github.com/ethereum/consensus-specs/pull/2687

* - Remove unused Error variants

- Require both "terminal-block-hash-epoch-override" and "terminal-block-hash-override" when either flag is used

* - Remove a couple more unused Error variants

Co-authored-by: Paul Hauner <paul@paulhauner.com>

* update initializing from eth1 for merge genesis

* read execution payload header from file lcli

* add `create-payload-header` command to `lcli`

* fix base fee parsing

* Apply suggestions from code review

* default `execution_payload_header` bool to false when deserializing `meta.yml` in EF tests

Co-authored-by: Paul Hauner <paul@paulhauner.com>

* Add payload verification status to fork choice

* Pass payload verification status to import_block

* Add valid back-propagation

* Add head safety status latch to API

* Remove ExecutionLayerStatus

* Add execution info to client notifier

* Update notifier logs

* Change use of "hash" to refer to beacon block

* Shutdown on invalid finalized block

* Tidy, add comments

* Fix failing FC tests

* Allow blocks with unsafe head

* Fix forkchoiceUpdate call on startup

* Thread eth1_block_hash into interop genesis state

* Add merge-fork-epoch flag

* Build LH with minimal spec by default

* Add verbose logs to execution_layer

* Add --http-allow-sync-stalled flag

* Update lcli new-testnet to create genesis state

* Fix http test

* Fix compile errors in tests

* Start adding merge tests

* Expose MockExecutionLayer

* Add mock_execution_layer to BeaconChainHarness

* Progress with merge test

* Return more detailed errors with gas limit issues

* Use a better gas limit in block gen

* Ensure TTD is met in block gen

* Fix basic_merge tests

* Start geth testing

* Fix conflicts after rebase

* Remove geth tests

* Improve merge test

* Address clippy lints

* Make pow block gen a pure function

* Add working new test, breaking existing test

* Fix test names

* Add should_panic

* Don't run merge tests in debug

* Detect a tokio runtime when starting MockServer

* Fix clippy lint, include merge tests

* - Update the fork choice `ProtoNode` to include `is_merge_complete`

- Add database migration for the persisted fork choice

* update tests

* Small cleanup

* lints

* store execution block hash in fork choice rather than bool

Added Execution Payload from Rayonism Fork

Updated new Containers to match Merge Spec

Updated BeaconBlockBody for Merge Spec

Completed updating BeaconState and BeaconBlockBody

Modified ExecutionPayload<T> to use Transaction<T>

Mostly Finished Changes for beacon-chain.md

Added some things for fork-choice.md

Update to match new fork-choice.md/fork.md changes

ran cargo fmt

Added Missing Pieces in eth2_libp2p for Merge

fix ef test

Various Changes to Conform Closer to Merge Spec

## Issue Addressed

Resolves#2545

## Proposed Changes

Adds the long-overdue EF tests for fork choice. Although we had pretty good coverage via other implementations that closely followed our approach, it is nonetheless important for us to implement these tests too.

During testing I found that we were using a hard-coded `SAFE_SLOTS_TO_UPDATE_JUSTIFIED` value rather than one from the `ChainSpec`. This caused a failure during a minimal preset test. This doesn't represent a risk to mainnet or testnets, since the hard-coded value matched the mainnet preset.

## Failing Cases

There is one failing case which is presently marked as `SkippedKnownFailure`:

```

case 4 ("new_finalized_slot_is_justified_checkpoint_ancestor") from /home/paul/development/lighthouse/testing/ef_tests/consensus-spec-tests/tests/minimal/phase0/fork_choice/on_block/pyspec_tests/new_finalized_slot_is_justified_checkpoint_ancestor failed with NotEqual:

head check failed: Got Head { slot: Slot(40), root: 0x9183dbaed4191a862bd307d476e687277fc08469fc38618699863333487703e7 } | Expected Head { slot: Slot(24), root: 0x105b49b51bf7103c182aa58860b039550a89c05a4675992e2af703bd02c84570 }

```

This failure is due to #2741. It's not a particularly high priority issue at the moment, so we fix it after merging this PR.

## Issue Addressed

Resolves#2612

## Proposed Changes

Implements both the checks mentioned in the original issue.

1. Verifies the `randao_reveal` in the beacon node

2. Cross checks the proposer index after getting back the block from the beacon node.

## Additional info

The block production time increases by ~10x because of the signature verification on the beacon node (based on the `beacon_block_production_process_seconds` metric) when running on a local testnet.

## Proposed Changes

* Add the `Eth-Consensus-Version` header to the HTTP API for the block and state endpoints. This is part of the v2.1.0 API that was recently released: https://github.com/ethereum/beacon-APIs/pull/170

* Add tests for the above. I refactored the `eth2` crate's helper functions to make this more straight-forward, and introduced some new mixin traits that I think greatly improve readability and flexibility.

* Add a new `map_with_fork!` macro which is useful for decoding a superstruct type without naming all its variants. It is now used for SSZ-decoding `BeaconBlock` and `BeaconState`, and for JSON-decoding `SignedBeaconBlock` in the API.

## Additional Info

The `map_with_fork!` changes will conflict with the Merge changes, but when resolving the conflict the changes from this branch should be preferred (it is no longer necessary to enumerate every fork). The merge fork _will_ need to be added to `map_fork_name_with`.

## Issue Addressed

Continuation of #2728, fix the fork choice tests for Rust 1.56.0 so that `unstable` is free of warnings.

CI will be broken until this PR merges, because we strictly enforce the absence of warnings (even for tests)

## Issue Addressed

Resolves#2689

## Proposed Changes

Copy of #2709 so I can appease CI and merge without waiting for @realbigsean to come online. See #2709 for more information.

## Additional Info

NA

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

NA

## Proposed Changes

This is a wholesale rip-off of #2708, see that PR for more of a description.

I've made this PR since @realbigsean is offline and I can't merge his PR due to Github's frustrating `target-branch-check` bug. I also changed the branch to `unstable`, since I'm trying to minimize the diff between `merge-f2f`/`unstable`. I'll just rebase `merge-f2f` onto `unstable` after this PR merges.

When running `make lint` I noticed the following warning:

```

warning: patch for `fixed-hash` uses the features mechanism. default-features and features will not take effect because the patch dependency does not support this mechanism

```

So, I removed the `features` section from the patch.

## Additional Info

NA

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

NA

## Proposed Changes

This PR is near-identical to https://github.com/sigp/lighthouse/pull/2652, however it is to be merged into `unstable` instead of `merge-f2f`. Please see that PR for reasoning.

I'm making this duplicate PR to merge to `unstable` in an effort to shrink the diff between `unstable` and `merge-f2f` by doing smaller, lead-up PRs.

## Additional Info

NA

## Issue Addressed

NA

## Proposed Changes

Implements the "union" type from the SSZ spec for `ssz`, `ssz_derive`, `tree_hash` and `tree_hash_derive` so it may be derived for `enums`:

https://github.com/ethereum/consensus-specs/blob/v1.1.0-beta.3/ssz/simple-serialize.md#union

The union type is required for the merge, since the `Transaction` type is defined as a single-variant union `Union[OpaqueTransaction]`.

### Crate Updates

This PR will (hopefully) cause CI to publish new versions for the following crates:

- `eth2_ssz_derive`: `0.2.1` -> `0.3.0`

- `eth2_ssz`: `0.3.0` -> `0.4.0`

- `eth2_ssz_types`: `0.2.0` -> `0.2.1`

- `tree_hash`: `0.3.0` -> `0.4.0`

- `tree_hash_derive`: `0.3.0` -> `0.4.0`

These these crates depend on each other, I've had to add a workspace-level `[patch]` for these crates. A follow-up PR will need to remove this patch, ones the new versions are published.

### Union Behaviors

We already had SSZ `Encode` and `TreeHash` derive for enums, however it just did a "transparent" pass-through of the inner value. Since the "union" decoding from the spec is in conflict with the transparent method, I've required that all `enum` have exactly one of the following enum-level attributes:

#### SSZ

- `#[ssz(enum_behaviour = "union")]`

- matches the spec used for the merge

- `#[ssz(enum_behaviour = "transparent")]`

- maintains existing functionality

- not supported for `Decode` (never was)

#### TreeHash

- `#[tree_hash(enum_behaviour = "union")]`

- matches the spec used for the merge

- `#[tree_hash(enum_behaviour = "transparent")]`

- maintains existing functionality

This means that we can maintain the existing transparent behaviour, but all existing users will get a compile-time error until they explicitly opt-in to being transparent.

### Legacy Option Encoding

Before this PR, we already had a union-esque encoding for `Option<T>`. However, this was with the *old* SSZ spec where the union selector was 4 bytes. During merge specification, the spec was changed to use 1 byte for the selector.

Whilst the 4-byte `Option` encoding was never used in the spec, we used it in our database. Writing a migrate script for all occurrences of `Option` in the database would be painful, especially since it's used in the `CommitteeCache`. To avoid the migrate script, I added a serde-esque `#[ssz(with = "module")]` field-level attribute to `ssz_derive` so that we can opt into the 4-byte encoding on a field-by-field basis.

The `ssz::legacy::four_byte_impl!` macro allows a one-liner to define the module required for the `#[ssz(with = "module")]` for some `Option<T> where T: Encode + Decode`.

Notably, **I have removed `Encode` and `Decode` impls for `Option`**. I've done this to force a break on downstream users. Like I mentioned, `Option` isn't used in the spec so I don't think it'll be *that* annoying. I think it's nicer than quietly having two different union implementations or quietly breaking the existing `Option` impl.

### Crate Publish Ordering

I've modified the order in which CI publishes crates to ensure that we don't publish a crate without ensuring we already published a crate that it depends upon.

## TODO

- [ ] Queue a follow-up `[patch]`-removing PR.

## Issue Addressed

NA

## Proposed Changes

Adds the ability to verify batches of aggregated/unaggregated attestations from the network.

When the `BeaconProcessor` finds there are messages in the aggregated or unaggregated attestation queues, it will first check the length of the queue:

- `== 1` verify the attestation individually.

- `>= 2` take up to 64 of those attestations and verify them in a batch.

Notably, we only perform batch verification if the queue has a backlog. We don't apply any artificial delays to attestations to try and force them into batches.

### Batching Details

To assist with implementing batches we modify `beacon_chain::attestation_verification` to have two distinct categories for attestations:

- *Indexed* attestations: those which have passed initial validation and were valid enough for us to derive an `IndexedAttestation`.

- *Verified* attestations: those attestations which were indexed *and also* passed signature verification. These are well-formed, interesting messages which were signed by validators.

The batching functions accept `n` attestations and then return `n` attestation verification `Result`s, where those `Result`s can be any combination of `Ok` or `Err`. In other words, we attempt to verify as many attestations as possible and return specific per-attestation results so peer scores can be updated, if required.

When we batch verify attestations, we first try to map all those attestations to *indexed* attestations. If any of those attestations were able to be indexed, we then perform batch BLS verification on those indexed attestations. If the batch verification succeeds, we convert them into *verified* attestations, disabling individual signature checking. If the batch fails, we convert to verified attestations with individual signature checking enabled.

Ultimately, we optimistically try to do a batch verification of attestation signatures and fall-back to individual verification if it fails. This opens an attach vector for "poisoning" the attestations and causing us to waste a batch verification. I argue that peer scoring should do a good-enough job of defending against this and the typical-case gains massively outweigh the worst-case losses.

## Additional Info

Before this PR, attestation verification took the attestations by value (instead of by reference). It turns out that this was unnecessary and, in my opinion, resulted in some undesirable ergonomics (e.g., we had to pass the attestation back in the `Err` variant to avoid clones). In this PR I've modified attestation verification so that it now takes a reference.

I refactored the `beacon_chain/tests/attestation_verification.rs` tests so they use a builder-esque "tester" struct instead of a weird macro. It made it easier for me to test individual/batch with the same set of tests and I think it was a nice tidy-up. Notably, I did this last to try and make sure my new refactors to *actual* production code would pass under the existing test suite.

## Issue Addressed

Closes#1891Closes#1784

## Proposed Changes

Implement checkpoint sync for Lighthouse, enabling it to start from a weak subjectivity checkpoint.

## Additional Info

- [x] Return unavailable status for out-of-range blocks requested by peers (#2561)

- [x] Implement sync daemon for fetching historical blocks (#2561)

- [x] Verify chain hashes (either in `historical_blocks.rs` or the calling module)

- [x] Consistency check for initial block + state

- [x] Fetch the initial state and block from a beacon node HTTP endpoint

- [x] Don't crash fetching beacon states by slot from the API

- [x] Background service for state reconstruction, triggered by CLI flag or API call.

Considered out of scope for this PR:

- Drop the requirement to provide the `--checkpoint-block` (this would require some pretty heavy refactoring of block verification)

Co-authored-by: Diva M <divma@protonmail.com>

## Issue Addressed

N/A

## Proposed Changes

Add a fork_digest to `ForkContext` only if it is set in the config.

Reject gossip messages on post fork topics before the fork happens.

Edit: Instead of rejecting gossip messages on post fork topics, we now subscribe to post fork topics 2 slots before the fork.

Co-authored-by: Age Manning <Age@AgeManning.com>

[EIP-3030]: https://eips.ethereum.org/EIPS/eip-3030

[Web3Signer]: https://consensys.github.io/web3signer/web3signer-eth2.html

## Issue Addressed

Resolves#2498

## Proposed Changes

Allows the VC to call out to a [Web3Signer] remote signer to obtain signatures.

## Additional Info

### Making Signing Functions `async`

To allow remote signing, I needed to make all the signing functions `async`. This caused a bit of noise where I had to convert iterators into `for` loops.

In `duties_service.rs` there was a particularly tricky case where we couldn't hold a write-lock across an `await`, so I had to first take a read-lock, then grab a write-lock.

### Move Signing from Core Executor

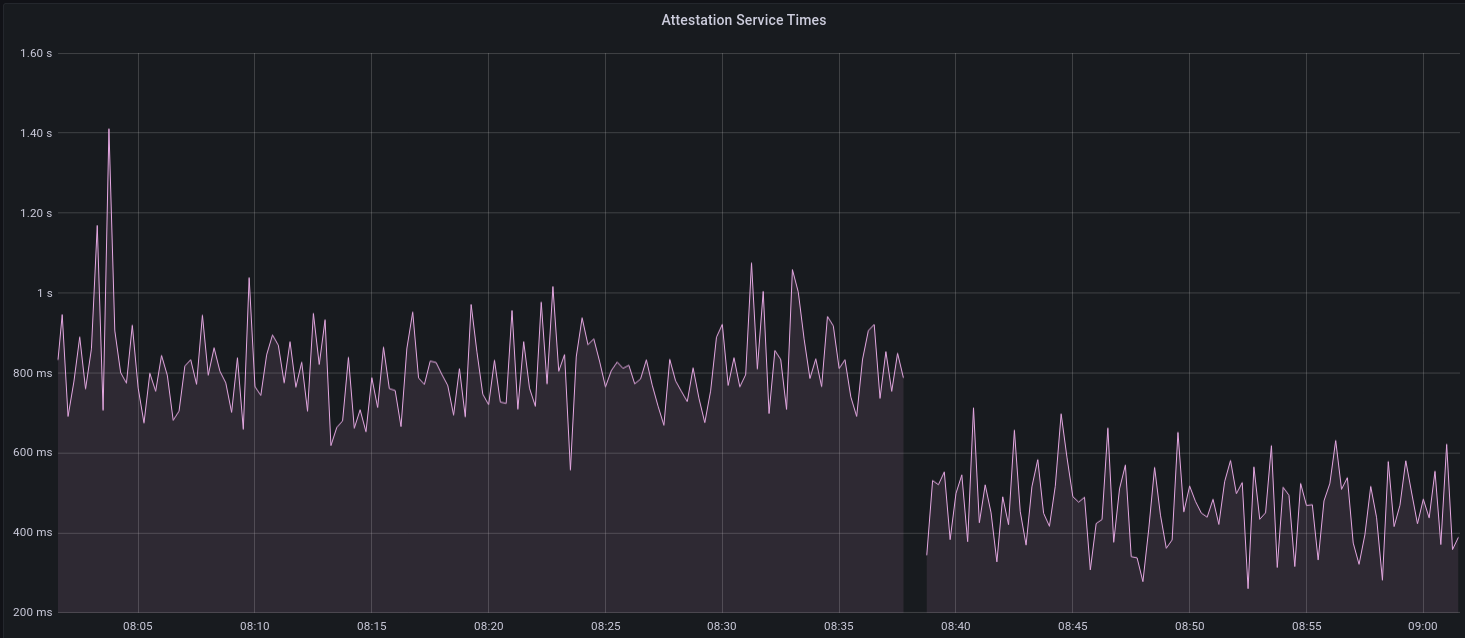

Whilst implementing this feature, I noticed that we signing was happening on the core tokio executor. I suspect this was causing the executor to temporarily lock and occasionally trigger some HTTP timeouts (and potentially SQL pool timeouts, but I can't verify this). Since moving all signing into blocking tokio tasks, I noticed a distinct drop in the "atttestations_http_get" metric on a Prater node:

I think this graph indicates that freeing the core executor allows the VC to operate more smoothly.

### Refactor TaskExecutor

I noticed that the `TaskExecutor::spawn_blocking_handle` function would fail to spawn tasks if it were unable to obtain handles to some metrics (this can happen if the same metric is defined twice). It seemed that a more sensible approach would be to keep spawning tasks, but without metrics. To that end, I refactored the function so that it would still function without metrics. There are no other changes made.

## TODO

- [x] Restructure to support multiple signing methods.

- [x] Add calls to remote signer from VC.

- [x] Documentation

- [x] Test all endpoints

- [x] Test HTTPS certificate

- [x] Allow adding remote signer validators via the API

- [x] Add Altair support via [21.8.1-rc1](https://github.com/ConsenSys/web3signer/releases/tag/21.8.1-rc1)

- [x] Create issue to start using latest version of web3signer. (See #2570)

## Notes

- ~~Web3Signer doesn't yet support the Altair fork for Prater. See https://github.com/ConsenSys/web3signer/issues/423.~~

- ~~There is not yet a release of Web3Signer which supports Altair blocks. See https://github.com/ConsenSys/web3signer/issues/391.~~

## Issue Addressed

Resolves#2582

## Proposed Changes

Use `quoted_u64` and `quoted_u64_vec` custom serde deserializers from `eth2_serde_utils` to support the proper Eth2.0 API spec for `/eth/v1/validator/sync_committee_subscriptions`

## Additional Info

N/A

## Proposed Changes

Cache the total active balance for the current epoch in the `BeaconState`. Computing this value takes around 1ms, and this was negatively impacting block processing times on Prater, particularly when reconstructing states.

With a large number of attestations in each block, I saw the `process_attestations` function taking 150ms, which means that reconstructing hot states can take up to 4.65s (31 * 150ms), and reconstructing freezer states can take up to 307s (2047 * 150ms).

I opted to add the cache to the beacon state rather than computing the total active balance at the start of state processing and threading it through. Although this would be simpler in a way, it would waste time, particularly during block replay, as the total active balance doesn't change for the duration of an epoch. So we save ~32ms for hot states, and up to 8.1s for freezer states (using `--slots-per-restore-point 8192`).

## Issue Addressed

Related to: #2259

Made an attempt at all the necessary updates here to publish the crates to crates.io. I incremented the minor versions on all the crates that have been previously published. We still might run into some issues as we try to publish because I'm not able to test this out but I think it's a good starting point.

## Proposed Changes

- Add description and license to `ssz_types` and `serde_util`

- rename `serde_util` to `eth2_serde_util`

- increment minor versions

- remove path dependencies

- remove patch dependencies

## Additional Info

Crates published:

- [x] `tree_hash` -- need to publish `tree_hash_derive` and `eth2_hashing` first

- [x] `eth2_ssz_types` -- need to publish `eth2_serde_util` first

- [x] `tree_hash_derive`

- [x] `eth2_ssz`

- [x] `eth2_ssz_derive`

- [x] `eth2_serde_util`

- [x] `eth2_hashing`

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

N/A

## Proposed Changes

Add functionality in the validator monitor to provide sync committee related metrics for monitored validators.

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed

Closes#2526

## Proposed Changes

If the head block fails to decode on start up, do two things:

1. Revert all blocks between the head and the most recent hard fork (to `fork_slot - 1`).

2. Reset fork choice so that it contains the new head, and all blocks back to the new head's finalized checkpoint.

## Additional Info

I tweaked some of the beacon chain test harness stuff in order to make it generic enough to test with a non-zero slot clock on start-up. In the process I consolidated all the various `new_` methods into a single generic one which will hopefully serve all future uses 🤞

## Issue Addressed

Resolves#2524

## Proposed Changes

- Return all known forks in the `/config/fork_schedule`, previously returned only the head of the chain's fork.

- Deleted the `StateId::head` method because it was only previously used in this endpoint.

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Proposed Changes

* Consolidate Tokio versions: everything now uses the latest v1.10.0, no more `tokio-compat`.

* Many semver-compatible changes via `cargo update`. Notably this upgrades from the yanked v0.8.0 version of crossbeam-deque which is present in v1.5.0-rc.0

* Many semver incompatible upgrades via `cargo upgrades` and `cargo upgrade --workspace pkg_name`. Notable ommissions:

- Prometheus, to be handled separately: https://github.com/sigp/lighthouse/issues/2485

- `rand`, `rand_xorshift`: the libsecp256k1 package requires 0.7.x, so we'll stick with that for now

- `ethereum-types` is pinned at 0.11.0 because that's what `web3` is using and it seems nice to have just a single version

## Additional Info

We still have two versions of `libp2p-core` due to `discv5` depending on the v0.29.0 release rather than `master`. AFAIK it should be OK to release in this state (cc @AgeManning )

## Issue Addressed

- Resolves#2502

## Proposed Changes

Adds tree-hash caching (THC 🍁) for `state.inactivity_scores`, as per #2502.

Since the `inactivity_scores` field is introduced during Altair, the cache must be optional (i.e., not present pre-Altair). The mechanism for optional caches was already implemented via the `ParticipationTreeHashCache`, albeit not quite generically enough. To this end, I made the `ParticipationTreeHashCache` more generic and renamed it to `OptionalTreeHashCache`. This made the code a little more verbose around the previous/current epoch participation fields, but overall less verbose when the needs of `inactivity_scores` are considered.

All changes to `ParticipationTreeHashCache` should be *non-substantial*.

## Additional Info

NA

## Proposed Changes

* Implement the validator client and HTTP API changes necessary to support Altair

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed

N/A

## Proposed Changes

- Removing a bunch of unnecessary references

- Updated `Error::VariantError` to `Error::Variant`

- There were additional enum variant lints that I ignored, because I thought our variant names were fine

- removed `MonitoredValidator`'s `pubkey` field, because I couldn't find it used anywhere. It looks like we just use the string version of the pubkey (the `id` field) if there is no index

## Additional Info

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

- Resolves#2169

## Proposed Changes

Adds the `AttesterCache` to allow validators to produce attestations for older slots. Presently, some arbitrary restrictions can force validators to receive an error when attesting to a slot earlier than the present one. This can cause attestation misses when there is excessive load on the validator client or time sync issues between the VC and BN.

## Additional Info

NA

## Proposed Changes

Add the `sync_aggregate` from `BeaconBlock` to the bulk signature verifier for blocks. This necessitates a new signature set constructor for the sync aggregate, which is different from the others due to the use of [`eth2_fast_aggregate_verify`](https://github.com/ethereum/eth2.0-specs/blob/v1.1.0-alpha.7/specs/altair/bls.md#eth2_fast_aggregate_verify) for sync aggregates, per [`process_sync_aggregate`](https://github.com/ethereum/eth2.0-specs/blob/v1.1.0-alpha.7/specs/altair/beacon-chain.md#sync-aggregate-processing). I made the choice to return an optional signature set, with `None` representing the case where the signature is valid on account of being the point at infinity (requires no further checking).

To "dogfood" the changes and prevent duplication, the consensus logic now uses the signature set approach as well whenever it is required to verify signatures (which should only be in testing AFAIK). The EF tests pass with the code as it exists currently, but failed before I adapted the `eth2_fast_aggregate_verify` changes (which is good).

As a result of this change Altair block processing should be a little faster, and importantly, we will no longer accidentally verify signatures when replaying blocks, e.g. when replaying blocks from the database.

## Issue Addressed

NA

## Proposed Changes

This PR addresses two things:

1. Allows the `ValidatorMonitor` to work with Altair states.

1. Optimizes `altair::process_epoch` (see [code](https://github.com/paulhauner/lighthouse/blob/participation-cache/consensus/state_processing/src/per_epoch_processing/altair/participation_cache.rs) for description)

## Breaking Changes

The breaking changes in this PR revolve around one premise:

*After the Altair fork, it's not longer possible (given only a `BeaconState`) to identify if a validator had *any* attestation included during some epoch. The best we can do is see if that validator made the "timely" source/target/head flags.*

Whilst this seems annoying, it's not actually too bad. Finalization is based upon "timely target" attestations, so that's really the most important thing. Although there's *some* value in knowing if a validator had *any* attestation included, it's far more important to know about "timely target" participation, since this is what affects finality and justification.

For simplicity and consistency, I've also removed the ability to determine if *any* attestation was included from metrics and API endpoints. Now, all Altair and non-Altair states will simply report on the head/target attestations.

The following section details where we've removed fields and provides replacement values.

### Breaking Changes: Prometheus Metrics

Some participation metrics have been removed and replaced. Some were removed since they are no longer relevant to Altair (e.g., total attesting balance) and others replaced with gwei values instead of pre-computed values. This provides more flexibility at display-time (e.g., Grafana).

The following metrics were added as replacements:

- `beacon_participation_prev_epoch_head_attesting_gwei_total`

- `beacon_participation_prev_epoch_target_attesting_gwei_total`

- `beacon_participation_prev_epoch_source_attesting_gwei_total`

- `beacon_participation_prev_epoch_active_gwei_total`

The following metrics were removed:

- `beacon_participation_prev_epoch_attester`

- instead use `beacon_participation_prev_epoch_source_attesting_gwei_total / beacon_participation_prev_epoch_active_gwei_total`.

- `beacon_participation_prev_epoch_target_attester`

- instead use `beacon_participation_prev_epoch_target_attesting_gwei_total / beacon_participation_prev_epoch_active_gwei_total`.

- `beacon_participation_prev_epoch_head_attester`

- instead use `beacon_participation_prev_epoch_head_attesting_gwei_total / beacon_participation_prev_epoch_active_gwei_total`.

The `beacon_participation_prev_epoch_attester` endpoint has been removed. Users should instead use the pre-existing `beacon_participation_prev_epoch_target_attester`.

### Breaking Changes: HTTP API

The `/lighthouse/validator_inclusion/{epoch}/{validator_id}` endpoint loses the following fields:

- `current_epoch_attesting_gwei` (use `current_epoch_target_attesting_gwei` instead)

- `previous_epoch_attesting_gwei` (use `previous_epoch_target_attesting_gwei` instead)

The `/lighthouse/validator_inclusion/{epoch}/{validator_id}` endpoint lose the following fields:

- `is_current_epoch_attester` (use `is_current_epoch_target_attester` instead)

- `is_previous_epoch_attester` (use `is_previous_epoch_target_attester` instead)

- `is_active_in_current_epoch` becomes `is_active_unslashed_in_current_epoch`.

- `is_active_in_previous_epoch` becomes `is_active_unslashed_in_previous_epoch`.

## Additional Info

NA

## TODO

- [x] Deal with total balances

- [x] Update validator_inclusion API

- [ ] Ensure `beacon_participation_prev_epoch_target_attester` and `beacon_participation_prev_epoch_head_attester` work before Altair

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Proposed Changes

Update to the latest version of the Altair spec, which includes new tests and a tweak to the target sync aggregators.

## Additional Info

This change is _not_ required for the imminent Altair devnet, and is waiting on the merge of #2321 to unstable.

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Proposed Changes

Remove the remaining Altair `FIXME`s from consensus land.

1. Implement tree hash caching for the participation lists. This required some light type manipulation, including removing the `TreeHash` bound from `CachedTreeHash` which was purely descriptive.

2. Plumb the proposer index through Altair attestation processing, to avoid calculating it for _every_ attestation (potentially 128ms on large networks). This duplicates some work from #2431, but with the aim of getting it in sooner, particularly for the Altair devnets.

3. Removes two FIXMEs related to `superstruct` and cloning, which are unlikely to be particularly detrimental and will be tracked here instead: https://github.com/sigp/superstruct/issues/5

## Issue Addressed

#635

## Proposed Changes

- Keep attestations that reference a block we have not seen for 30secs before being re processed

- If we do import the block before that time elapses, it is reprocessed in that moment

- The first time it fails, do nothing wrt to gossipsub propagation or peer downscoring. If after being re processed it fails, downscore with a `LowToleranceError` and ignore the message.

## Issue Addressed

NA

## Proposed Changes

Adds a metric to see how many set bits are in the sync aggregate for each beacon block being imported.

## Additional Info

NA

## Proposed Changes

Modify the SHA256 implementation in `eth2_hashing` so that it switches between `ring` and `sha2` to take advantage of [x86_64 SHA extensions](https://en.wikipedia.org/wiki/Intel_SHA_extensions). The extensions are available on modern Intel and AMD CPUs, and seem to provide a considerable speed-up: on my Ryzen 5950X it dropped state tree hashing times by about 30% from 35ms to 25ms (on Prater).

## Additional Info

The extensions became available in the `sha2` crate [last year](https://www.reddit.com/r/rust/comments/hf2vcx/ann_rustcryptos_sha1_and_sha2_now_support/), and are not available in Ring, which uses a [pure Rust implementation of sha2](https://github.com/briansmith/ring/blob/main/src/digest/sha2.rs). Ring is faster on CPUs that lack the extensions so I've implemented a runtime switch to use `sha2` only when the extensions are available. The runtime switching seems to impose a miniscule penalty (see the benchmarks linked below).

## Proposed Changes

Implement the consensus changes necessary for the upcoming Altair hard fork.

## Additional Info

This is quite a heavy refactor, with pivotal types like the `BeaconState` and `BeaconBlock` changing from structs to enums. This ripples through the whole codebase with field accesses changing to methods, e.g. `state.slot` => `state.slot()`.

Co-authored-by: realbigsean <seananderson33@gmail.com>

## Issue Addressed

`make lint` failing on rust 1.53.0.

## Proposed Changes

1.53.0 updates

## Additional Info

I haven't figure out why yet, we were now hitting the recursion limit in a few crates. So I had to add `#![recursion_limit = "256"]` in a few places

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed

NA

## Primary Change

When investigating memory usage, I noticed that retrieving a block from an early slot (e.g., slot 900) would cause a sharp increase in the memory footprint (from 400mb to 800mb+) which seemed to be ever-lasting.

After some investigation, I found that the reverse iteration from the head back to that slot was the likely culprit. To counter this, I've switched the `BeaconChain::block_root_at_slot` to use the forwards iterator, instead of the reverse one.

I also noticed that the networking stack is using `BeaconChain::root_at_slot` to check if a peer is relevant (`check_peer_relevance`). Perhaps the steep, seemingly-random-but-consistent increases in memory usage are caused by the use of this function.

Using the forwards iterator with the HTTP API alleviated the sharp increases in memory usage. It also made the response much faster (before it felt like to took 1-2s, now it feels instant).

## Additional Changes

In the process I also noticed that we have two functions for getting block roots:

- `BeaconChain::block_root_at_slot`: returns `None` for a skip slot.

- `BeaconChain::root_at_slot`: returns the previous root for a skip slot.

I unified these two functions into `block_root_at_slot` and added the `WhenSlotSkipped` enum. Now, the caller must be explicit about the skip-slot behaviour when requesting a root.

Additionally, I replaced `vec![]` with `Vec::with_capacity` in `store::chunked_vector::range_query`. I stumbled across this whilst debugging and made this modification to see what effect it would have (not much). It seems like a decent change to keep around, but I'm not concerned either way.

Also, `BeaconChain::get_ancestor_block_root` is unused, so I got rid of it 🗑️.

## Additional Info

I haven't also done the same for state roots here. Whilst it's possible and a good idea, it's more work since the fwds iterators are presently block-roots-specific.

Whilst there's a few places a reverse iteration of state roots could be triggered (e.g., attestation production, HTTP API), they're no where near as common as the `check_peer_relevance` call. As such, I think we should get this PR merged first, then come back for the state root iters. I made an issue here https://github.com/sigp/lighthouse/issues/2377.

## Issue Addressed

The latest version of Rust has new clippy rules & the codebase isn't up to date with them.

## Proposed Changes

Small formatting changes that clippy tells me are functionally equivalent

## Issue Addressed

Closes#2274

## Proposed Changes

* Modify the `YamlConfig` to collect unknown fields into an `extra_fields` map, instead of failing hard.

* Log a debug message if there are extra fields returned to the VC from one of its BNs.

This restores Lighthouse's compatibility with Teku beacon nodes (and therefore Infura)

## Issue Addressed

NA

## Proposed Changes

Whilst hacking on something I noticed that the default implementation of `FixedVector` can violate the length constraint!

E.g., `let v: FixedVector<u8; U4> = <_>::default()` would create a fixed vector with length 0, even though it promises to *always* have length 4! This causes SSZ deserialization to fail and probably other things too.

This isn't a security risk as it can't be triggered externally, however it's a foot gun for LH devs.

## Additional Info

NA