## Issue Addressed

Closes#3460

## Proposed Changes

`blocks` and `block_min_delay` are never updated in the epoch summary

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

NA

## Proposed Changes

Adds clarification to an error log when there is an error submitting a validator registration.

There seems to be a few cases where relays return errors during validator registration, including spurious timeouts and when a validator has been very recently activated/made pending.

Changing this log helps indicate that it's "just another registration error" rather than something more serious. I didn't drop this to a `WARN` since I still have hope we can eliminate these errors completely by chatting with relays and adjusting timeouts.

## Additional Info

NA

## Issue Addressed

New lints for rust 1.65

## Proposed Changes

Notable change is the identification or parameters that are only used in recursion

## Additional Info

na

## Summary

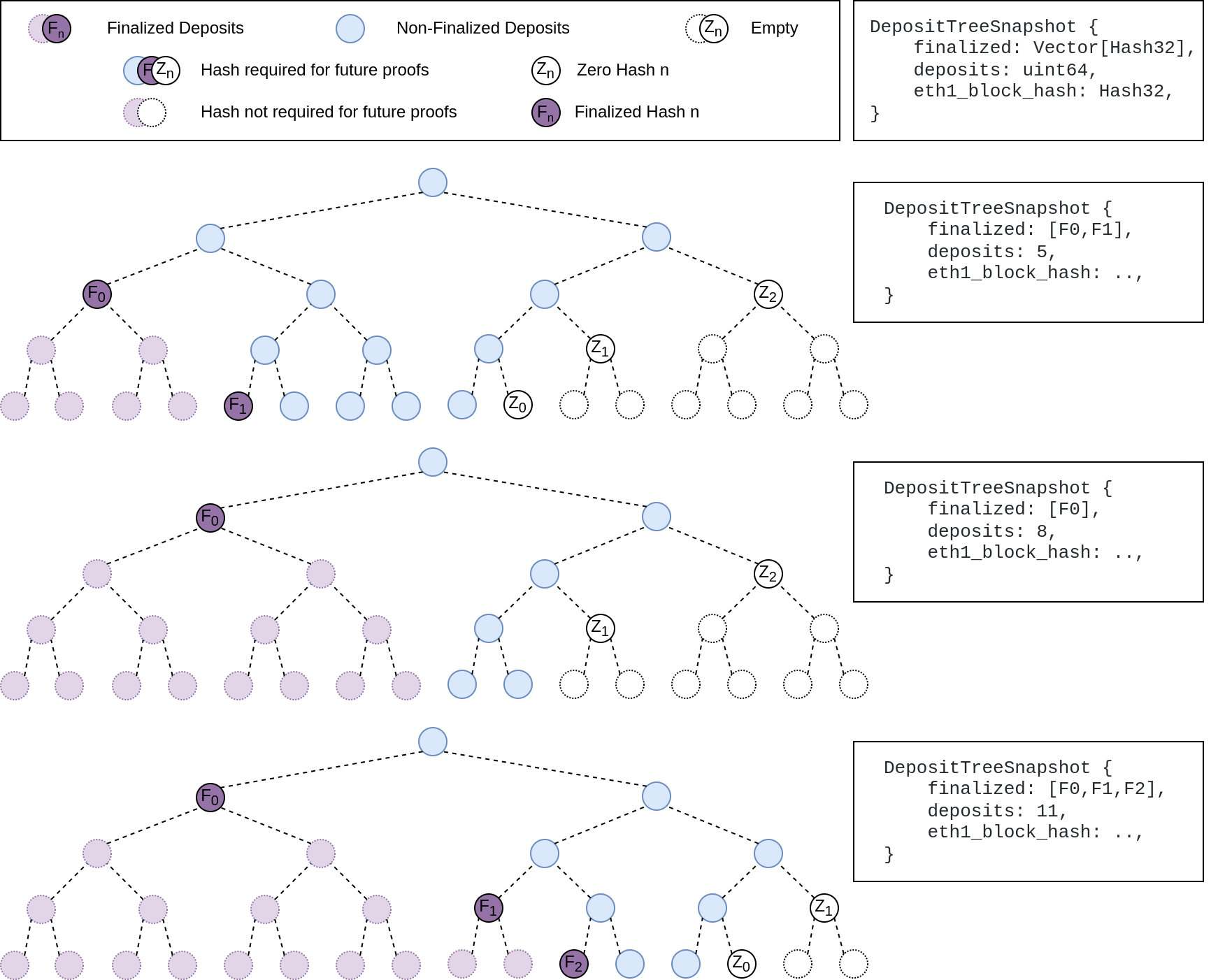

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.

2. Significantly faster weak subjectivity sync as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.

3. Future proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444) as execution nodes will no longer be required to store logs permanently so we won't always have all historical logs available to us.

## More Details

Image to illustrate how the deposit contract merkle tree evolves and finalizes along with the resulting `DepositTreeSnapshot`

## Other Considerations

I've changed the structure of the `SszDepositCache` so once you load & save your database from this version of lighthouse, you will no longer be able to load it from older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

## Issue Addressed

Updates discv5

Pending on

- [x] #3547

- [x] Alex upgrades his deps

## Proposed Changes

updates discv5 and the enr crate. The only relevant change would be some clear indications of ipv4 usage in lighthouse

## Additional Info

Functionally, this should be equivalent to the prev version.

As draft pending a discv5 release

* add capella gossip boiler plate

* get everything compiling

Co-authored-by: realbigsean <sean@sigmaprime.io

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

* small cleanup

* small cleanup

* cargo fix + some test cleanup

* improve block production

* add fixme for potential panic

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

## Issue Addressed

This reverts commit ca9dc8e094 (PR #3559) with some modifications.

## Proposed Changes

Unfortunately that PR introduced a performance regression in fork choice. The optimisation _intended_ to build the exit and pubkey caches on the head state _only if_ they were not already built. However, due to the head state always being cloned without these caches, we ended up building them every time the head changed, leading to a ~70ms+ penalty on mainnet.

fcfd02aeec/beacon_node/beacon_chain/src/canonical_head.rs (L633-L636)

I believe this is a severe enough regression to justify immediately releasing v3.2.1 with this change.

## Additional Info

I didn't fully revert #3559, because there were some unrelated deletions of dead code in that PR which I figured we may as well keep.

An alternative would be to clone the extra caches, but this likely still imposes some cost, so in the interest of applying a conservative fix quickly, I think reversion is the best approach. The optimisation from #3559 was not even optimising a particularly significant path, it was mostly for VCs running larger numbers of inactive keys. We can re-do it in the `tree-states` world where cache clones are cheap.

## Issue Addressed

NA

## Proposed Changes

Bump version to `v3.2.0`

## Additional Info

- ~~Blocked on #3597~~

- ~~Blocked on #3645~~

- ~~Blocked on #3653~~

- ~~Requires additional testing~~

## Issue Addressed

I missed this from https://github.com/sigp/lighthouse/pull/3491. peers were being banned at the behaviour level only. The identify errors are explained by this as well

## Proposed Changes

Add banning and unbanning

## Additional Info

Befor,e having tests that catch this was hard because the swarm was outside the behaviour. We could now have tests that prevent something like this in the future

Overrides any previous option that enables the eth1 service.

Useful for operating a `light` beacon node.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

Add flag to lengthen execution layer timeouts

Which issue # does this PR address?

#3607

## Proposed Changes

Added execution-timeout-multiplier flag and a cli test to ensure the execution layer config has the multiplier set correctly.

Please list or describe the changes introduced by this PR.

Add execution_timeout_multiplier to the execution layer config as Option<u32> and pass the u32 to HttpJsonRpc.

## Additional Info

Not certain that this is the best way to implement it so I'd appreciate any feedback.

Please provide any additional information. For example, future considerations

or information useful for reviewers.

## Issue Addressed

Fix a bug in block production that results in blocks with 0 attestations during the first slot of an epoch.

The bug is marked by debug logs of the form:

> DEBG Discarding attestation because of missing ancestor, block_root: 0x3cc00d9c9e0883b2d0db8606278f2b8423d4902f9a1ee619258b5b60590e64f8, pivot_slot: 4042591

It occurs when trying to look up the shuffling decision root for an attestation from a slot which is prior to fork choice's finalized block. This happens frequently when proposing in the first slot of the epoch where we have:

- `current_epoch == n`

- `attestation.data.target.epoch == n - 1`

- attestation shuffling epoch `== n - 3` (decision block being the last block of `n - 3`)

- `state.finalized_checkpoint.epoch == n - 2` (first block of `n - 2` is finalized)

Hence the shuffling decision slot is out of range of the fork choice backwards iterator _by a single slot_.

Unfortunately this bug was hidden when we weren't pruning fork choice, and then reintroduced in v2.5.1 when we fixed the pruning (https://github.com/sigp/lighthouse/releases/tag/v2.5.1). There's no way to turn that off or disable the filtering in our current release, so we need a new release to fix this issue.

Fortunately, it also does not occur on every epoch boundary because of the gradual pruning of fork choice every 256 blocks (~8 epochs):

01e84b71f5/consensus/proto_array/src/proto_array_fork_choice.rs (L16)01e84b71f5/consensus/proto_array/src/proto_array.rs (L713-L716)

So the probability of proposing a 0-attestation block given a proposal assignment is approximately `1/32 * 1/8 = 0.39%`.

## Proposed Changes

- Load the block's shuffling ID from fork choice and verify it against the expected shuffling ID of the head state. This code was initially written before we had settled on a representation of shuffling IDs, so I think it's a nice simplification to make use of them here rather than more ad-hoc logic that fundamentally does the same thing.

## Additional Info

Thanks to @moshe-blox for noticing this issue and bringing it to our attention.

## Issue Addressed

Closes https://github.com/sigp/lighthouse/issues/2371

## Proposed Changes

Backport some changes from `tree-states` that remove duplicated calculations of the `proposer_index`.

With this change the proposer index should be calculated only once for each block, and then plumbed through to every place it is required.

## Additional Info

In future I hope to add more data to the consensus context that is cached on a per-epoch basis, like the effective balances of validators and the base rewards.

There are some other changes to remove indexing in tests that were also useful for `tree-states` (the `tree-states` types don't implement `Index`).

## Issue Addressed

Implements new optimistic sync test format from https://github.com/ethereum/consensus-specs/pull/2982.

## Proposed Changes

- Add parsing and runner support for the new test format.

- Extend the mock EL with a set of canned responses keyed by block hash. Although this doubles up on some of the existing functionality I think it's really nice to use compared to the `preloaded_responses` or static responses. I think we could write novel new opt sync tests using these primtives much more easily than the previous ones. Forks are natively supported, and different responses to `forkchoiceUpdated` and `newPayload` are also straight-forward.

## Additional Info

Blocked on merge of the spec PR and release of new test vectors.

## Issue Addressed

While digging around in some logs I noticed that queries for validators by pubkey were taking 10ms+, which seemed too long. This was due to a loop through the entire validator registry for each lookup.

## Proposed Changes

Rather than using a loop through the register, this PR utilises the pubkey cache which is usually initialised at the head*. In case the cache isn't built, we fall back to the previous loop logic. In the vast majority of cases I expect the cache will be built, as the validator client queries at the `head` where all caches should be built.

## Additional Info

*I had to modify the cache build that runs after fork choice to build the pubkey cache. I think it had been optimised out, perhaps accidentally. I think it's preferable to have the exit cache and the pubkey cache built on the head state, as they are required for verifying deposits and exits respectively, and we may as well build them off the hot path of block processing. Previously they'd get built the first time a deposit or exit needed to be verified.

I've deleted the unused `map_state` function which was obsoleted by `map_state_and_execution_optimistic`.

## Proposed Changes

In this change I've added a new beacon_node cli flag `--execution-jwt-secret-key` for passing the JWT secret directly as string.

Without this flag, it was non-trivial to pass a secrets file containing a JWT secret key without compromising its contents into some management repo or fiddling around with manual file mounts for cloud-based deployments.

When used in combination with environment variables, the secret can be injected into container-based systems like docker & friends quite easily.

It's both possible to either specify the file_path to the JWT secret or pass the JWT secret directly.

I've modified the docs and attached a test as well.

## Additional Info

The logic has been adapted a bit so that either one of `--execution-jwt` or `--execution-jwt-secret-key` must be set when specifying `--execution-endpoint` so that it's still compatible with the semantics before this change and there's at least one secret provided.

## Issue Addressed

#2847

## Proposed Changes

Add under a feature flag the required changes to subscribe to long lived subnets in a deterministic way

## Additional Info

There is an additional required change that is actually searching for peers using the prefix, but I find that it's best to make this change in the future

## Issue Addressed

Adding CLI tests for logging flags: log-color and disable-log-timestamp

Which issue # does this PR address?

#3588

## Proposed Changes

Add CLI tests for logging flags as described in #3588

Please list or describe the changes introduced by this PR.

Added logger_config to client::Config as suggested. Implemented Default for LoggerConfig based on what was being done elsewhere in the repo. Created 2 tests for each flag addressed.

## Additional Info

Please provide any additional information. For example, future considerations

or information useful for reviewers.

## Issue Addressed

N/A

## Proposed Changes

With https://github.com/sigp/lighthouse/pull/3214 we made it such that you can either have 1 auth endpoint or multiple non auth endpoints. Now that we are post merge on all networks (testnets and mainnet), we cannot progress a chain without a dedicated auth execution layer connection so there is no point in having a non-auth eth1-endpoint for syncing deposit cache.

This code removes all fallback related code in the eth1 service. We still keep the single non-auth endpoint since it's useful for testing.

## Additional Info

This removes all eth1 fallback related metrics that were relevant for the monitoring service, so we might need to change the api upstream.

## Issue Addressed

fixes lints from the last rust release

## Proposed Changes

Fix the lints, most of the lints by `clippy::question-mark` are false positives in the form of https://github.com/rust-lang/rust-clippy/issues/9518 so it's allowed for now

## Additional Info

## Issue Addressed

🐞 in which we don't actually unsubscribe from a random long lived subnet when it expires

## Proposed Changes

Remove code addressing a specific case in which we are subscribed to all subnets and handle the removal of the long lived subnet. I don't think the special case code is particularly important as, if someone is running with that many validators to be subscribed to all subnets, it should use `--subscribe-all-subnets` instead

## Additional Info

Noticed on some test nodes climbing bandwidth usage periodically (around 27hours, the time of subnet expirations) I'm running this code to test this does not happen anymore, but I think it should be good now

## Issue Addressed

NA

## Proposed Changes

This PR attempts to fix the following spurious CI failure:

```

---- store_tests::garbage_collect_temp_states_from_failed_block stdout ----

thread 'store_tests::garbage_collect_temp_states_from_failed_block' panicked at 'disk store should initialize: DBError { message: "Error { message: \"IO error: lock /tmp/.tmp6DcBQ9/cold_db/LOCK: already held by process\" }" }', beacon_node/beacon_chain/tests/store_tests.rs:59:10

```

I believe that some async task is taking a clone of the store and holding it in some other thread for a short time. This creates a race-condition when we try to open a new instance of the store.

## Additional Info

NA

## Issue Addressed

NA

## Proposed Changes

This PR removes duplicated block root computation.

Computing the `SignedBeaconBlock::canonical_root` has become more expensive since the merge as we need to compute the merke root of each transaction inside an `ExecutionPayload`.

Computing the root for [a mainnet block](https://beaconcha.in/slot/4704236) is taking ~10ms on my i7-8700K CPU @ 3.70GHz (no sha extensions). Given that our median seen-to-imported time for blocks is presently 300-400ms, removing a few duplicated block roots (~30ms) could represent an easy 10% improvement. When we consider that the seen-to-imported times include operations *after* the block has been placed in the early attester cache, we could expect the 30ms to be more significant WRT our seen-to-attestable times.

## Additional Info

NA

## Issue Addressed

NA

## Proposed Changes

Fixes an issue introduced in #3574 where I erroneously assumed that a `crossbeam_channel` multiple receiver queue was a *broadcast* queue. This is incorrect, each message will be received by *only one* receiver. The effect of this mistake is these logs:

```

Sep 20 06:56:17.001 INFO Synced slot: 4736079, block: 0xaa8a…180d, epoch: 148002, finalized_epoch: 148000, finalized_root: 0x2775…47f2, exec_hash: 0x2ca5…ffde (verified), peers: 6, service: slot_notifier

Sep 20 06:56:23.237 ERRO Unable to validate attestation error: CommitteeCacheWait(RecvError), peer_id: 16Uiu2HAm2Jnnj8868tb7hCta1rmkXUf5YjqUH1YPj35DCwNyeEzs, type: "aggregated", slot: Slot(4736047), beacon_block_root: 0x88d318534b1010e0ebd79aed60b6b6da1d70357d72b271c01adf55c2b46206c1

```

## Additional Info

NA

## Proposed Changes

Improve the payload pruning feature in several ways:

- Payload pruning is now entirely optional. It is enabled by default but can be disabled with `--prune-payloads false`. The previous `--prune-payloads-on-startup` flag from #3565 is removed.

- Initial payload pruning on startup now runs in a background thread. This thread will always load the split state, which is a small fraction of its total work (up to ~300ms) and then backtrack from that state. This pruning process ran in 2m5s on one Prater node with good I/O and 16m on a node with slower I/O.

- To work with the optional payload pruning the database function `try_load_full_block` will now attempt to load execution payloads for finalized slots _if_ pruning is currently disabled. This gives users an opt-out for the extensive traffic between the CL and EL for reconstructing payloads.

## Additional Info

If the `prune-payloads` flag is toggled on and off then the on-startup check may not see any payloads to delete and fail to clean them up. In this case the `lighthouse db prune_payloads` command should be used to force a manual sweep of the database.

## Issue Addressed

https://github.com/ethereum/beacon-APIs/pull/222

## Proposed Changes

Update Lighthouse's randao verification API to match the `beacon-APIs` spec. We implemented the API before spec stabilisation, and it changed slightly in the course of review.

Rather than a flag `verify_randao` taking a boolean value, the new API uses a `skip_randao_verification` flag which takes no argument. The new spec also requires the randao reveal to be present and equal to the point-at-infinity when `skip_randao_verification` is set.

I've also updated the `POST /lighthouse/analysis/block_rewards` API to take blinded blocks as input, as the execution payload is irrelevant and we may want to assess blocks produced by builders.

## Additional Info

This is technically a breaking change, but seeing as I suspect I'm the only one using these parameters/APIs, I think we're OK to include this in a patch release.

## Issue Addressed

Closes https://github.com/sigp/lighthouse/issues/3556

## Proposed Changes

Delete finalized execution payloads from the database in two places:

1. When running the finalization migration in `migrate_database`. We delete the finalized payloads between the last split point and the new updated split point. _If_ payloads are already pruned prior to this then this is sufficient to prune _all_ payloads as non-canonical payloads are already deleted by the head pruner, and all canonical payloads prior to the previous split will already have been pruned.

2. To address the fact that users will update to this code _after_ the merge on mainnet (and testnets), we need a one-off scan to delete the finalized payloads from the canonical chain. This is implemented in `try_prune_execution_payloads` which runs on startup and scans the chain back to the Bellatrix fork or the anchor slot (if checkpoint synced after Bellatrix). In the case where payloads are already pruned this check only imposes a single state load for the split state, which shouldn't be _too slow_. Even so, a flag `--prepare-payloads-on-startup=false` is provided to turn this off after it has run the first time, which provides faster start-up times.

There is also a new `lighthouse db prune_payloads` subcommand for users who prefer to run the pruning manually.

## Additional Info

The tests have been updated to not rely on finalized payloads in the database, instead using the `MockExecutionLayer` to reconstruct them. Additionally a check was added to `check_chain_dump` which asserts the non-existence or existence of payloads on disk depending on their slot.

## Issue Addressed

NA

## Proposed Changes

I have observed scenarios on Goerli where Lighthouse was receiving attestations which reference the same, un-cached shuffling on multiple threads at the same time. Lighthouse was then loading the same state from database and determining the shuffling on multiple threads at the same time. This is unnecessary load on the disk and RAM.

This PR modifies the shuffling cache so that each entry can be either:

- A committee

- A promise for a committee (i.e., a `crossbeam_channel::Receiver`)

Now, in the scenario where we have thread A and thread B simultaneously requesting the same un-cached shuffling, we will have the following:

1. Thread A will take the write-lock on the shuffling cache, find that there's no cached committee and then create a "promise" (a `crossbeam_channel::Sender`) for a committee before dropping the write-lock.

1. Thread B will then be allowed to take the write-lock for the shuffling cache and find the promise created by thread A. It will block the current thread waiting for thread A to fulfill that promise.

1. Thread A will load the state from disk, obtain the shuffling, send it down the channel, insert the entry into the cache and then continue to verify the attestation.

1. Thread B will then receive the shuffling from the receiver, be un-blocked and then continue to verify the attestation.

In the case where thread A fails to generate the shuffling and drops the sender, the next time that specific shuffling is requested we will detect that the channel is disconnected and return a `None` entry for that shuffling. This will cause the shuffling to be re-calculated.

## Additional Info

NA

## Issue Addressed

#3285

## Proposed Changes

Adds support for specifying histogram with buckets and adds new metric buckets for metrics mentioned in issue.

## Additional Info

Need some help for the buckets.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

We were unable to update lighthouse by running `cargo update` because some of the `mev-build-rs` deps weren't pinned. But `mev-build-rs` is now pinned here and includes it's own pinned commits for `ssz-rs` and `etheruem-consensus`

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Closes#3514

## Proposed Changes

- Change default monitoring endpoint frequency to 120 seconds to fit with 30k requests/month limit.

- Allow configuration of the monitoring endpoint frequency using `--monitoring-endpoint-frequency N` where `N` is a value in seconds.

## Issue Addressed

Resolves https://github.com/sigp/lighthouse/issues/3517

## Proposed Changes

Adds a `--builder-profit-threshold <wei value>` flag to the BN. If an external payload's value field is less than this value, the local payload will be used. The value of the local payload will not be checked (it can't really be checked until the engine API is updated to support this).

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Add a flag that can increase count unrealized strictness, defaults to false

## Proposed Changes

Please list or describe the changes introduced by this PR.

## Additional Info

Please provide any additional information. For example, future considerations

or information useful for reviewers.

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: sean <seananderson33@gmail.com>

## Issue Addressed

When requesting an index which is not active during `start_epoch`, Lighthouse returns:

```

curl "http://localhost:5052/lighthouse/analysis/attestation_performance/999999999?start_epoch=100000&end_epoch=100000"

```

```json

{

"code": 500,

"message": "INTERNAL_SERVER_ERROR: ParticipationCache(InvalidValidatorIndex(999999999))",

"stacktraces": []

}

```

This error occurs even when the index in question becomes active before `end_epoch` which is undesirable as it can prevent larger queries from completing.

## Proposed Changes

In the event the index is out-of-bounds (has not yet been activated), simply return all fields as `false`:

```

-> curl "http://localhost:5052/lighthouse/analysis/attestation_performance/999999999?start_epoch=100000&end_epoch=100000"

```

```json

[

{

"index": 999999999,

"epochs": {

"100000": {

"active": false,

"head": false,

"target": false,

"source": false

}

}

}

]

```

By doing this, we cover the case where a validator becomes active sometime between `start_epoch` and `end_epoch`.

## Additional Info

Note that this error only occurs for epochs after the Altair hard fork.