## Issue Addressed

#3804

## Proposed Changes

- Add `total_balance` to the validator monitor and adjust the number of historical epochs which are cached.

- Allow certain values in the cache to be served out via the HTTP API without requiring a state read.

## Usage

```

curl -X POST "http://localhost:5052/lighthouse/ui/validator_info" -d '{"indices": [0]}' -H "Content-Type: application/json" | jq

```

```

{

"data": {

"validators": {

"0": {

"info": [

{

"epoch": 172981,

"total_balance": 36566388519

},

...

{

"epoch": 172990,

"total_balance": 36566496513

}

]

},

"1": {

"info": [

{

"epoch": 172981,

"total_balance": 36355797968

},

...

{

"epoch": 172990,

"total_balance": 36355905962

}

]

}

}

}

}

```

## Additional Info

This requires no historical states to operate which mean it will still function on the freshly checkpoint synced node, however because of this, the values will populate each epoch (up to a maximum of 10 entries).

Another benefit of this method, is that we can easily cache any other values which would normally require a state read and serve them via the same endpoint. However, we would need be cautious about not overly increasing block processing time by caching values from complex computations.

This also caches some of the validator metrics directly, rather than pulling them from the Prometheus metrics when the API is called. This means when the validator count exceeds the individual monitor threshold, the cached values will still be available.

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Proposed Changes

* Bump Go from 1.17 to 1.20. The latest Geth release v1.11.0 requires 1.18 minimum.

* Prevent a cache miss during payload building by using the right fee recipient. This prevents Geth v1.11.0 from building a block with 0 transactions. The payload building mechanism is overhauled in the new Geth to improve the payload every 2s, and the tests were failing because we were falling back on a `getPayload` call with no lookahead due to `get_payload_id` cache miss caused by the mismatched fee recipient. Alternatively we could hack the tests to send `proposer_preparation_data`, but I think the static fee recipient is simpler for now.

* Add support for optionally enabling Lighthouse logs in the integration tests. Enable using `cargo run --release --features logging/test_logger`. This was very useful for debugging.

On heavily crowded networks, we are seeing many attempted connections to our node every second.

Often these connections come from peers that have just been disconnected. This can be for a number of reasons including:

- We have deemed them to be not as useful as other peers

- They have performed poorly

- They have dropped the connection with us

- The connection was spontaneously lost

- They were randomly removed because we have too many peers

In all of these cases, if we have reached or exceeded our target peer limit, there is no desire to accept new connections immediately after the disconnect from these peers. In fact, it often costs us resources to handle the established connections and defeats some of the logic of dropping them in the first place.

This PR adds a timeout, that prevents recently disconnected peers from reconnecting to us.

Technically we implement a ban at the swarm layer to prevent immediate re connections for at least 10 minutes. I decided to keep this light, and use a time-based LRUCache which only gets updated during the peer manager heartbeat to prevent added stress of polling a delay map for what could be a large number of peers.

This cache is bounded in time. An extra space bound could be added should people consider this a risk.

Co-authored-by: Diva M <divma@protonmail.com>

## Issue Addressed

NA

## Proposed Changes

Our `ERRO` stream has been rather noisy since the merge due to some unexpected behaviours of builders and EEs. Now that we've been running post-merge for a while, I think we can drop some of these `ERRO` to `WARN` so we're not "crying wolf".

The modified logs are:

#### `ERRO Execution engine call failed`

I'm seeing this quite frequently on Geth nodes. They seem to timeout when they're busy and it rarely indicates a serious issue. We also have logging across block import, fork choice updating and payload production that raise `ERRO` or `CRIT` when the EE times out, so I think we're not at risk of silencing actual issues.

#### `ERRO "Builder failed to reveal payload"`

In #3775 we reduced this log from `CRIT` to `ERRO` since it's common for builders to fail to reveal the block to the producer directly whilst still broadcasting it to the networ. I think it's worth dropping this to `WARN` since it's rarely interesting.

I elected to stay with `WARN` since I really do wish builders would fulfill their API promises by returning the block to us. Perhaps I'm just being pedantic here, I could be convinced otherwise.

#### `ERRO "Relay error when registering validator(s)"`

It seems like builders and/or mev-boost struggle to handle heavy loads of validator registrations. I haven't observed issues with validators not actually being registered, but I see timeouts on these endpoints many times a day. It doesn't seem like this `ERRO` is worth it.

#### `ERRO Error fetching block for peer ExecutionLayerErrorPayloadReconstruction`

This means we failed to respond to a peer on the P2P network with a block they requested because of an error in the `execution_layer`. It's very common to see timeouts or incomplete responses on this endpoint whilst the EE is busy and I don't think it's important enough for an `ERRO`. As long as the peer count stays high, I don't think the user needs to be actively concerned about how we're responding to peers.

## Additional Info

NA

## Issue Addressed

Resolves the cargo-audit failure caused by https://rustsec.org/advisories/RUSTSEC-2023-0010.

I also removed the ignore for `RUSTSEC-2020-0159` as we are no longer using a vulnerable version of `chrono`. We still need the other ignore for `time 0.1` because we depend on it via `sloggers -> chrono -> time 0.1`.

* Add first efforts at broadcast

* Tidy

* Move broadcast code to client

* Progress with broadcast impl

* Rename to address change

* Fix compile errors

* Use `while` loop

* Tidy

* Flip broadcast condition

* Switch to forgetting individual indices

* Always broadcast when the node starts

* Refactor into two functions

* Add testing

* Add another test

* Tidy, add more testing

* Tidy

* Add test, rename enum

* Rename enum again

* Tidy

* Break loop early

* Add V15 schema migration

* Bump schema version

* Progress with migration

* Update beacon_node/client/src/address_change_broadcast.rs

Co-authored-by: Michael Sproul <micsproul@gmail.com>

* Fix typo in function name

---------

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Proposed Changes

Remove the `[patch]` for `fixed-hash`.

We pinned it years ago in #2710 to fix `arbitrary` support. Nowadays the 0.7 version of `fixed-hash` is only used by the `web3` crate and doesn't need `arbitrary`.

~~Blocked on #3916 but could be merged in the same Bors batch.~~

## Proposed Changes

Another `tree-states` motivated PR, this adds `jemalloc` as the default allocator, with an option to use the system allocator by compiling with `FEATURES="" make`.

- [x] Metrics

- [x] Test on Windows

- [x] Test on macOS

- [x] Test with `musl`

- [x] Metrics dashboard on `lighthouse-metrics` (https://github.com/sigp/lighthouse-metrics/pull/37)

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Proposed Changes

Another `tree-states` motivated PR, this adds `jemalloc` as the default allocator, with an option to use the system allocator by compiling with `FEATURES="" make`.

- [x] Metrics

- [x] Test on Windows

- [x] Test on macOS

- [x] Test with `musl`

- [x] Metrics dashboard on `lighthouse-metrics` (https://github.com/sigp/lighthouse-metrics/pull/37)

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Proposed Changes

Update all dependencies to new semver-compatible releases with `cargo update`. Importantly this patches a Tokio vuln: https://rustsec.org/advisories/RUSTSEC-2023-0001. I don't think we were affected by the vuln because it only applies to named pipes on Windows, but it's still good hygiene to patch.

## Issue Addressed

Recent discussions with other client devs about optimistic sync have revealed a conceptual issue with the optimisation implemented in #3738. In designing that feature I failed to consider that the execution node checks the `blockHash` of the execution payload before responding with `SYNCING`, and that omitting this check entirely results in a degradation of the full node's validation. A node omitting the `blockHash` checks could be tricked by a supermajority of validators into following an invalid chain, something which is ordinarily impossible.

## Proposed Changes

I've added verification of the `payload.block_hash` in Lighthouse. In case of failure we log a warning and fall back to verifying the payload with the execution client.

I've used our existing dependency on `ethers_core` for RLP support, and a new dependency on Parity's `triehash` crate for the Merkle patricia trie. Although the `triehash` crate is currently unmaintained it seems like our best option at the moment (it is also used by Reth, and requires vastly less boilerplate than Parity's generic `trie-root` library).

Block hash verification is pretty quick, about 500us per block on my machine (mainnet).

The optimistic finalized sync feature can be disabled using `--disable-optimistic-finalized-sync` which forces full verification with the EL.

## Additional Info

This PR also introduces a new dependency on our [`metastruct`](https://github.com/sigp/metastruct) library, which was perfectly suited to the RLP serialization method. There will likely be changes as `metastruct` grows, but I think this is a good way to start dogfooding it.

I took inspiration from some Parity and Reth code while writing this, and have preserved the relevant license headers on the files containing code that was copied and modified.

I've needed to do this work in order to do some episub testing.

This version of libp2p has not yet been released, so this is left as a draft for when we wish to update.

Co-authored-by: Diva M <divma@protonmail.com>

## Proposed Changes

With proposer boosting implemented (#2822) we have an opportunity to re-org out late blocks.

This PR adds three flags to the BN to control this behaviour:

* `--disable-proposer-reorgs`: turn aggressive re-orging off (it's on by default).

* `--proposer-reorg-threshold N`: attempt to orphan blocks with less than N% of the committee vote. If this parameter isn't set then N defaults to 20% when the feature is enabled.

* `--proposer-reorg-epochs-since-finalization N`: only attempt to re-org late blocks when the number of epochs since finalization is less than or equal to N. The default is 2 epochs, meaning re-orgs will only be attempted when the chain is finalizing optimally.

For safety Lighthouse will only attempt a re-org under very specific conditions:

1. The block being proposed is 1 slot after the canonical head, and the canonical head is 1 slot after its parent. i.e. at slot `n + 1` rather than building on the block from slot `n` we build on the block from slot `n - 1`.

2. The current canonical head received less than N% of the committee vote. N should be set depending on the proposer boost fraction itself, the fraction of the network that is believed to be applying it, and the size of the largest entity that could be hoarding votes.

3. The current canonical head arrived after the attestation deadline from our perspective. This condition was only added to support suppression of forkchoiceUpdated messages, but makes intuitive sense.

4. The block is being proposed in the first 2 seconds of the slot. This gives it time to propagate and receive the proposer boost.

## Additional Info

For the initial idea and background, see: https://github.com/ethereum/consensus-specs/pull/2353#issuecomment-950238004

There is also a specification for this feature here: https://github.com/ethereum/consensus-specs/pull/3034

Co-authored-by: Michael Sproul <micsproul@gmail.com>

Co-authored-by: pawan <pawandhananjay@gmail.com>

## Issue Addressed

NA

## Proposed Changes

- Bump versions

- Pin the `nethermind` version since our method of getting the latest tags on `master` is giving us an old version (`1.14.1`).

- Increase timeout for execution engine startup.

## Additional Info

- [x] ~Awaiting further testing~

This PR adds some health endpoints for the beacon node and the validator client.

Specifically it adds the endpoint:

`/lighthouse/ui/health`

These are not entirely stable yet. But provide a base for modification for our UI.

These also may have issues with various platforms and may need modification.

## Issue Addressed

This PR addresses partially #3651

## Proposed Changes

This PR adds the following methods:

* a new method to trait `TreeHash`, `hash_tree_leaves` which returns all the Merkle leaves of the ssz object.

* a new method to `BeaconState`: `compute_merkle_proof` which generates a specific merkle proof for given depth and index by using the `hash_tree_leaves` as leaves function.

## Additional Info

Now here is some rationale on why I decided to go down this route: adding a new function to commonly used trait is a pain but was necessary to make sure we have all merkle leaves for every object, that is why I just added `hash_tree_leaves` in the trait and not `compute_merkle_proof` as well. although it would make sense it gives us code duplication/harder review time and we just need it from one specific object in one specific usecase so not worth the effort YET. In my humble opinion.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

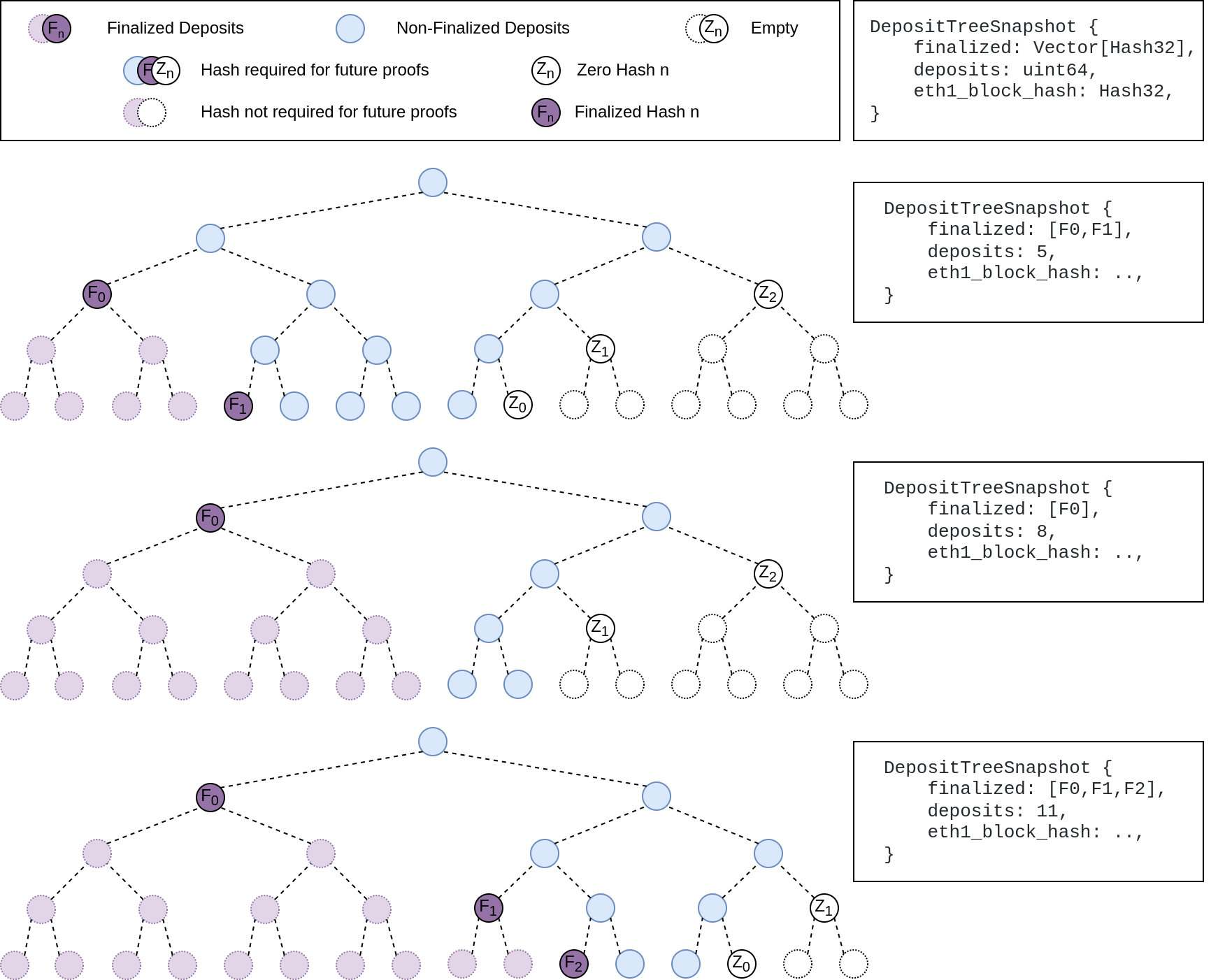

## Summary

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.

2. Significantly faster weak subjectivity sync as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.

3. Future proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444) as execution nodes will no longer be required to store logs permanently so we won't always have all historical logs available to us.

## More Details

Image to illustrate how the deposit contract merkle tree evolves and finalizes along with the resulting `DepositTreeSnapshot`

## Other Considerations

I've changed the structure of the `SszDepositCache` so once you load & save your database from this version of lighthouse, you will no longer be able to load it from older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

## Issue Addressed

Updates discv5

Pending on

- [x] #3547

- [x] Alex upgrades his deps

## Proposed Changes

updates discv5 and the enr crate. The only relevant change would be some clear indications of ipv4 usage in lighthouse

## Additional Info

Functionally, this should be equivalent to the prev version.

As draft pending a discv5 release

* add capella gossip boiler plate

* get everything compiling

Co-authored-by: realbigsean <sean@sigmaprime.io

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

* small cleanup

* small cleanup

* cargo fix + some test cleanup

* improve block production

* add fixme for potential panic

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

## Issue Addressed

NA

## Proposed Changes

Bump version to `v3.2.0`

## Additional Info

- ~~Blocked on #3597~~

- ~~Blocked on #3645~~

- ~~Blocked on #3653~~

- ~~Requires additional testing~~

## Issue Addressed

Implements new optimistic sync test format from https://github.com/ethereum/consensus-specs/pull/2982.

## Proposed Changes

- Add parsing and runner support for the new test format.

- Extend the mock EL with a set of canned responses keyed by block hash. Although this doubles up on some of the existing functionality I think it's really nice to use compared to the `preloaded_responses` or static responses. I think we could write novel new opt sync tests using these primtives much more easily than the previous ones. Forks are natively supported, and different responses to `forkchoiceUpdated` and `newPayload` are also straight-forward.

## Additional Info

Blocked on merge of the spec PR and release of new test vectors.

## Issue Addressed

#2847

## Proposed Changes

Add under a feature flag the required changes to subscribe to long lived subnets in a deterministic way

## Additional Info

There is an additional required change that is actually searching for peers using the prefix, but I find that it's best to make this change in the future

## Issue Addressed

Adding CLI tests for logging flags: log-color and disable-log-timestamp

Which issue # does this PR address?

#3588

## Proposed Changes

Add CLI tests for logging flags as described in #3588

Please list or describe the changes introduced by this PR.

Added logger_config to client::Config as suggested. Implemented Default for LoggerConfig based on what was being done elsewhere in the repo. Created 2 tests for each flag addressed.

## Additional Info

Please provide any additional information. For example, future considerations

or information useful for reviewers.

## Issue Addressed

N/A

## Proposed Changes

With https://github.com/sigp/lighthouse/pull/3214 we made it such that you can either have 1 auth endpoint or multiple non auth endpoints. Now that we are post merge on all networks (testnets and mainnet), we cannot progress a chain without a dedicated auth execution layer connection so there is no point in having a non-auth eth1-endpoint for syncing deposit cache.

This code removes all fallback related code in the eth1 service. We still keep the single non-auth endpoint since it's useful for testing.

## Additional Info

This removes all eth1 fallback related metrics that were relevant for the monitoring service, so we might need to change the api upstream.

## Issue Addressed

NA

## Proposed Changes

Fixes an issue introduced in #3574 where I erroneously assumed that a `crossbeam_channel` multiple receiver queue was a *broadcast* queue. This is incorrect, each message will be received by *only one* receiver. The effect of this mistake is these logs:

```

Sep 20 06:56:17.001 INFO Synced slot: 4736079, block: 0xaa8a…180d, epoch: 148002, finalized_epoch: 148000, finalized_root: 0x2775…47f2, exec_hash: 0x2ca5…ffde (verified), peers: 6, service: slot_notifier

Sep 20 06:56:23.237 ERRO Unable to validate attestation error: CommitteeCacheWait(RecvError), peer_id: 16Uiu2HAm2Jnnj8868tb7hCta1rmkXUf5YjqUH1YPj35DCwNyeEzs, type: "aggregated", slot: Slot(4736047), beacon_block_root: 0x88d318534b1010e0ebd79aed60b6b6da1d70357d72b271c01adf55c2b46206c1

```

## Additional Info

NA

## Issue Addressed

NA

## Proposed Changes

I have observed scenarios on Goerli where Lighthouse was receiving attestations which reference the same, un-cached shuffling on multiple threads at the same time. Lighthouse was then loading the same state from database and determining the shuffling on multiple threads at the same time. This is unnecessary load on the disk and RAM.

This PR modifies the shuffling cache so that each entry can be either:

- A committee

- A promise for a committee (i.e., a `crossbeam_channel::Receiver`)

Now, in the scenario where we have thread A and thread B simultaneously requesting the same un-cached shuffling, we will have the following:

1. Thread A will take the write-lock on the shuffling cache, find that there's no cached committee and then create a "promise" (a `crossbeam_channel::Sender`) for a committee before dropping the write-lock.

1. Thread B will then be allowed to take the write-lock for the shuffling cache and find the promise created by thread A. It will block the current thread waiting for thread A to fulfill that promise.

1. Thread A will load the state from disk, obtain the shuffling, send it down the channel, insert the entry into the cache and then continue to verify the attestation.

1. Thread B will then receive the shuffling from the receiver, be un-blocked and then continue to verify the attestation.

In the case where thread A fails to generate the shuffling and drops the sender, the next time that specific shuffling is requested we will detect that the channel is disconnected and return a `None` entry for that shuffling. This will cause the shuffling to be re-calculated.

## Additional Info

NA

## Issue Addressed

Fix a `cargo-audit` failure. We don't use `axum` for anything besides tests, but `cargo-audit` is failing due to this vulnerability in `axum-core`: https://rustsec.org/advisories/RUSTSEC-2022-0055

## Issue Addressed

We were unable to update lighthouse by running `cargo update` because some of the `mev-build-rs` deps weren't pinned. But `mev-build-rs` is now pinned here and includes it's own pinned commits for `ssz-rs` and `etheruem-consensus`

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

We currently subscribe to attestation subnets as soon as the subscription arrives (one epoch in advance), this makes it so that subscriptions for future slots are scheduled instead of done immediately.

## Proposed Changes

- Schedule subscriptions to subnets for future slots.

- Finish removing hashmap_delay, in favor of [delay_map](https://github.com/AgeManning/delay_map). This was the only remaining service to do this.

- Subscriptions for past slots are rejected, before we would subscribe for one slot.

- Add a new test for subscriptions that are not consecutive.

## Additional Info

This is also an effort in making the code easier to understand

## Issue Addressed

NA

## Proposed Changes

This PR is motivated by a recent consensus failure in Geth where it returned `INVALID` for an `VALID` block. Without this PR, the only way to recover is by re-syncing Lighthouse. Whilst ELs "shouldn't have consensus failures", in reality it's something that we can expect from time to time due to the complex nature of Ethereum. Being able to recover easily will help the network recover and EL devs to troubleshoot.

The risk introduced with this PR is that genuinely INVALID payloads get a "second chance" at being imported. I believe the DoS risk here is negligible since LH needs to be restarted in order to re-process the payload. Furthermore, there's no reason to think that a well-performing EL will accept a truly invalid payload the second-time-around.

## Additional Info

This implementation has the following intricacies:

1. Instead of just resetting *invalid* payloads to optimistic, we'll also reset *valid* payloads. This is an artifact of our existing implementation.

1. We will only reset payload statuses when we detect an invalid payload present in `proto_array`

- This helps save us from forgetting that all our blocks are valid in the "best case scenario" where there are no invalid blocks.

1. If we fail to revert the payload statuses we'll log a `CRIT` and just continue with a `proto_array` that *does not* have reverted payload statuses.

- The code to revert statuses needs to deal with balances and proposer-boost, so it's a failure point. This is a defensive measure to avoid introducing new show-stopping bugs to LH.

## Proposed Changes

This PR has two aims: to speed up attestation packing in the op pool, and to fix bugs in the verification of attester slashings, proposer slashings and voluntary exits. The changes are bundled into a single database schema upgrade (v12).

Attestation packing is sped up by removing several inefficiencies:

- No more recalculation of `attesting_indices` during packing.

- No (unnecessary) examination of the `ParticipationFlags`: a bitfield suffices. See `RewardCache`.

- No re-checking of attestation validity during packing: the `AttestationMap` provides attestations which are "correct by construction" (I have checked this using Hydra).

- No SSZ re-serialization for the clunky `AttestationId` type (it can be removed in a future release).

So far the speed-up seems to be roughly 2-10x, from 500ms down to 50-100ms.

Verification of attester slashings, proposer slashings and voluntary exits is fixed by:

- Tracking the `ForkVersion`s that were used to verify each message inside the `SigVerifiedOp`. This allows us to quickly re-verify that they match the head state's opinion of what the `ForkVersion` should be at the epoch(s) relevant to the message.

- Storing the `SigVerifiedOp` on disk rather than the raw operation. This allows us to continue track the fork versions after a reboot.

This is mostly contained in this commit 52bb1840ae5c4356a8fc3a51e5df23ed65ed2c7f.

## Additional Info

The schema upgrade uses the justified state to re-verify attestations and compute `attesting_indices` for them. It will drop any attestations that fail to verify, by the logic that attestations are most valuable in the few slots after they're observed, and are probably stale and useless by the time a node restarts. Exits and proposer slashings and similarly re-verified to obtain `SigVerifiedOp`s.

This PR contains a runtime killswitch `--paranoid-block-proposal` which opts out of all the optimisations in favour of closely verifying every included message. Although I'm quite sure that the optimisations are correct this flag could be useful in the event of an unforeseen emergency.

Finally, you might notice that the `RewardCache` appears quite useless in its current form because it is only updated on the hot-path immediately before proposal. My hope is that in future we can shift calls to `RewardCache::update` into the background, e.g. while performing the state advance. It is also forward-looking to `tree-states` compatibility, where iterating and indexing `state.{previous,current}_epoch_participation` is expensive and needs to be minimised.

## Issue Addressed

#3032

## Proposed Changes

Pause sync when ee is offline. Changes include three main parts:

- Online/offline notification system

- Pause sync

- Resume sync

#### Online/offline notification system

- The engine state is now guarded behind a new struct `State` that ensures every change is correctly notified. Notifications are only sent if the state changes. The new `State` is behind a `RwLock` (as before) as the synchronization mechanism.

- The actual notification channel is a [tokio::sync::watch](https://docs.rs/tokio/latest/tokio/sync/watch/index.html) which ensures only the last value is in the receiver channel. This way we don't need to worry about message order etc.

- Sync waits for state changes concurrently with normal messages.

#### Pause Sync

Sync has four components, pausing is done differently in each:

- **Block lookups**: Disabled while in this state. We drop current requests and don't search for new blocks. Block lookups are infrequent and I don't think it's worth the extra logic of keeping these and delaying processing. If we later see that this is required, we can add it.

- **Parent lookups**: Disabled while in this state. We drop current requests and don't search for new parents. Parent lookups are even less frequent and I don't think it's worth the extra logic of keeping these and delaying processing. If we later see that this is required, we can add it.

- **Range**: Chains don't send batches for processing to the beacon processor. This is easily done by guarding the channel to the beacon processor and giving it access only if the ee is responsive. I find this the simplest and most powerful approach since we don't need to deal with new sync states and chain segments that are added while the ee is offline will follow the same logic without needing to synchronize a shared state among those. Another advantage of passive pause vs active pause is that we can still keep track of active advertised chain segments so that on resume we don't need to re-evaluate all our peers.

- **Backfill**: Not affected by ee states, we don't pause.

#### Resume Sync

- **Block lookups**: Enabled again.

- **Parent lookups**: Enabled again.

- **Range**: Active resume. Since the only real pause range does is not sending batches for processing, resume makes all chains that are holding read-for-processing batches send them.

- **Backfill**: Not affected by ee states, no need to resume.

## Additional Info

**QUESTION**: Originally I made this to notify and change on synced state, but @pawanjay176 on talks with @paulhauner concluded we only need to check online/offline states. The upcheck function mentions extra checks to have a very up to date sync status to aid the networking stack. However, the only need the networking stack would have is this one. I added a TODO to review if the extra check can be removed

Next gen of #3094

Will work best with #3439

Co-authored-by: Pawan Dhananjay <pawandhananjay@gmail.com>

## Issue Addressed

NA

## Proposed Changes

Bump versions to v3.0.0

## Additional Info

- ~~Blocked on #3439~~

- ~~Blocked on #3459~~

- ~~Blocked on #3463~~

- ~~Blocked on #3462~~

- ~~Requires further testing~~

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed

NA

## Proposed Changes

Adds some metrics so we can track payload status responses from the EE. I think this will be useful for troubleshooting and alerting.

I also bumped the `BecaonChain::per_slot_task` to `debug` since it doesn't seem too noisy and would have helped us with some things we were debugging in the past.

## Additional Info

NA

## Proposed Changes

Enable multiple database backends for the slasher, either MDBX (default) or LMDB. The backend can be selected using `--slasher-backend={lmdb,mdbx}`.

## Additional Info

In order to abstract over the two library's different handling of database lifetimes I've used `Box::leak` to give the `Environment` type a `'static` lifetime. This was the only way I could think of using 100% safe code to construct a self-referential struct `SlasherDB`, where the `OpenDatabases` refers to the `Environment`. I think this is OK, as the `Environment` is expected to live for the life of the program, and both database engines leave the database in a consistent state after each write. The memory claimed for memory-mapping will be freed by the OS and appropriately flushed regardless of whether the `Environment` is actually dropped.

We are depending on two `sigp` forks of `libmdbx-rs` and `lmdb-rs`, to give us greater control over MDBX OS support and LMDB's version.

## Issue Addressed

Resolves#3388Resolves#2638

## Proposed Changes

- Return the `BellatrixPreset` on `/eth/v1/config/spec` by default.

- Allow users to opt out of this by providing `--http-spec-fork=altair` (unless there's a Bellatrix fork epoch set).

- Add the Altair constants from #2638 and make serving the constants non-optional (the `http-disable-legacy-spec` flag is deprecated).

- Modify the VC to only read the `Config` and not to log extra fields. This prevents it from having to muck around parsing the `ConfigAndPreset` fields it doesn't need.

## Additional Info

This change is backwards-compatible for the VC and the BN, but is marked as a breaking change for the removal of `--http-disable-legacy-spec`.

I tried making `Config` a `superstruct` too, but getting the automatic decoding to work was a huge pain and was going to require a lot of hacks, so I gave up in favour of keeping the default-based approach we have now.

## Issue Addressed

NA

## Proposed Changes

Modifies `lcli skip-slots` and `lcli transition-blocks` allow them to source blocks/states from a beaconAPI and also gives them some more features to assist with benchmarking.

## Additional Info

Breaks the current `lcli skip-slots` and `lcli transition-blocks` APIs by changing some flag names. It should be simple enough to figure out the changes via `--help`.

Currently blocked on #3263.

## Issue Addressed

https://github.com/sigp/lighthouse/issues/3091

Extends https://github.com/sigp/lighthouse/pull/3062, adding pre-bellatrix block support on blinded endpoints and allowing the normal proposal flow (local payload construction) on blinded endpoints. This resulted in better fallback logic because the VC will not have to switch endpoints on failure in the BN <> Builder API, the BN can just fallback immediately and without repeating block processing that it shouldn't need to. We can also keep VC fallback from the VC<>BN API's blinded endpoint to full endpoint.

## Proposed Changes

- Pre-bellatrix blocks on blinded endpoints

- Add a new `PayloadCache` to the execution layer

- Better fallback-from-builder logic

## Todos

- [x] Remove VC transition logic

- [x] Add logic to only enable builder flow after Merge transition finalization

- [x] Tests

- [x] Fix metrics

- [x] Rustdocs

Co-authored-by: Mac L <mjladson@pm.me>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Closes https://github.com/sigp/lighthouse/issues/3241

Closes https://github.com/sigp/lighthouse/issues/3242

## Proposed Changes

* [x] Implement logic to remove equivocating validators from fork choice per https://github.com/ethereum/consensus-specs/pull/2845

* [x] Update tests to v1.2.0-rc.1. The new test which exercises `equivocating_indices` is passing.

* [x] Pull in some SSZ abstractions from the `tree-states` branch that make implementing Vec-compatible encoding for types like `BTreeSet` and `BTreeMap`.

* [x] Implement schema upgrades and downgrades for the database (new schema version is V11).

* [x] Apply attester slashings from blocks to fork choice

## Additional Info

* This PR doesn't need the `BTreeMap` impl, but `tree-states` does, and I don't think there's any harm in keeping it. But I could also be convinced to drop it.

Blocked on #3322.

## Issue Addressed

Add a flag that optionally enables unrealized vote tracking. Would like to test out on testnets and benchmark differences in methods of vote tracking. This PR includes a DB schema upgrade to enable to new vote tracking style.

Co-authored-by: realbigsean <sean@sigmaprime.io>

Co-authored-by: Paul Hauner <paul@paulhauner.com>

Co-authored-by: sean <seananderson33@gmail.com>

Co-authored-by: Mac L <mjladson@pm.me>

## Issue Addressed

#3031

## Proposed Changes

Updates the following API endpoints to conform with https://github.com/ethereum/beacon-APIs/pull/190 and https://github.com/ethereum/beacon-APIs/pull/196

- [x] `beacon/states/{state_id}/root`

- [x] `beacon/states/{state_id}/fork`

- [x] `beacon/states/{state_id}/finality_checkpoints`

- [x] `beacon/states/{state_id}/validators`

- [x] `beacon/states/{state_id}/validators/{validator_id}`

- [x] `beacon/states/{state_id}/validator_balances`

- [x] `beacon/states/{state_id}/committees`

- [x] `beacon/states/{state_id}/sync_committees`

- [x] `beacon/headers`

- [x] `beacon/headers/{block_id}`

- [x] `beacon/blocks/{block_id}`

- [x] `beacon/blocks/{block_id}/root`

- [x] `beacon/blocks/{block_id}/attestations`

- [x] `debug/beacon/states/{state_id}`

- [x] `debug/beacon/heads`

- [x] `validator/duties/attester/{epoch}`

- [x] `validator/duties/proposer/{epoch}`

- [x] `validator/duties/sync/{epoch}`

Updates the following Server-Sent Events:

- [x] `events?topics=head`

- [x] `events?topics=block`

- [x] `events?topics=finalized_checkpoint`

- [x] `events?topics=chain_reorg`

## Backwards Incompatible

There is a very minor breaking change with the way the API now handles requests to `beacon/blocks/{block_id}/root` and `beacon/states/{state_id}/root` when `block_id` or `state_id` is the `Root` variant of `BlockId` and `StateId` respectively.

Previously a request to a non-existent root would simply echo the root back to the requester:

```

curl "http://localhost:5052/eth/v1/beacon/states/0xaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa/root"

{"data":{"root":"0xaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa"}}

```

Now it will return a `404`:

```

curl "http://localhost:5052/eth/v1/beacon/blocks/0xaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa/root"

{"code":404,"message":"NOT_FOUND: beacon block with root 0xaaaa…aaaa","stacktraces":[]}

```

In addition to this is the block root `0x0000000000000000000000000000000000000000000000000000000000000000` previously would return the genesis block. It will now return a `404`:

```

curl "http://localhost:5052/eth/v1/beacon/blocks/0x0000000000000000000000000000000000000000000000000000000000000000"

{"code":404,"message":"NOT_FOUND: beacon block with root 0x0000…0000","stacktraces":[]}

```

## Additional Info

- `execution_optimistic` is always set, and will return `false` pre-Bellatrix. I am also open to the idea of doing something like `#[serde(skip_serializing_if = "Option::is_none")]`.

- The value of `execution_optimistic` is set to `false` where possible. Any computation that is reliant on the `head` will simply use the `ExecutionStatus` of the head (unless the head block is pre-Bellatrix).

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

Closes https://github.com/sigp/lighthouse/issues/3189.

## Proposed Changes

- Always supply the justified block hash as the `safe_block_hash` when calling `forkchoiceUpdated` on the execution engine.

- Refactor the `get_payload` routine to use the new `ForkchoiceUpdateParameters` struct rather than just the `finalized_block_hash`. I think this is a nice simplification and that the old way of computing the `finalized_block_hash` was unnecessary, but if anyone sees reason to keep that approach LMK.

## Issue Addressed

N/A

## Proposed Changes

Make simulator merge compatible. Adds a `--post_merge` flag to the eth1 simulator that enables a ttd and simulates the merge transition. Uses the `MockServer` in the execution layer test utils to simulate a dummy execution node.

Adds the merge transition simulation to CI.

## Issue Addressed

Resolves#3159

## Proposed Changes

Sends transactions to the EE before requesting for a payload in the `execution_integration_tests`. Made some changes to the integration tests in order to be able to sign and publish transactions to the EE:

1. `genesis.json` for both geth and nethermind was modified to include pre-funded accounts that we know private keys for

2. Using the unauthenticated port again in order to make `eth_sendTransaction` and calls from the `personal` namespace to import keys

Also added a `fcu` call with `PayloadAttributes` before calling `getPayload` in order to give EEs sufficient time to pack transactions into the payload.

## Overview

This rather extensive PR achieves two primary goals:

1. Uses the finalized/justified checkpoints of fork choice (FC), rather than that of the head state.

2. Refactors fork choice, block production and block processing to `async` functions.

Additionally, it achieves:

- Concurrent forkchoice updates to the EL and cache pruning after a new head is selected.

- Concurrent "block packing" (attestations, etc) and execution payload retrieval during block production.

- Concurrent per-block-processing and execution payload verification during block processing.

- The `Arc`-ification of `SignedBeaconBlock` during block processing (it's never mutated, so why not?):

- I had to do this to deal with sending blocks into spawned tasks.

- Previously we were cloning the beacon block at least 2 times during each block processing, these clones are either removed or turned into cheaper `Arc` clones.

- We were also `Box`-ing and un-`Box`-ing beacon blocks as they moved throughout the networking crate. This is not a big deal, but it's nice to avoid shifting things between the stack and heap.

- Avoids cloning *all the blocks* in *every chain segment* during sync.

- It also has the potential to clean up our code where we need to pass an *owned* block around so we can send it back in the case of an error (I didn't do much of this, my PR is already big enough 😅)

- The `BeaconChain::HeadSafetyStatus` struct was removed. It was an old relic from prior merge specs.

For motivation for this change, see https://github.com/sigp/lighthouse/pull/3244#issuecomment-1160963273

## Changes to `canonical_head` and `fork_choice`

Previously, the `BeaconChain` had two separate fields:

```

canonical_head: RwLock<Snapshot>,

fork_choice: RwLock<BeaconForkChoice>

```

Now, we have grouped these values under a single struct:

```

canonical_head: CanonicalHead {

cached_head: RwLock<Arc<Snapshot>>,

fork_choice: RwLock<BeaconForkChoice>

}

```

Apart from ergonomics, the only *actual* change here is wrapping the canonical head snapshot in an `Arc`. This means that we no longer need to hold the `cached_head` (`canonical_head`, in old terms) lock when we want to pull some values from it. This was done to avoid deadlock risks by preventing functions from acquiring (and holding) the `cached_head` and `fork_choice` locks simultaneously.

## Breaking Changes

### The `state` (root) field in the `finalized_checkpoint` SSE event

Consider the scenario where epoch `n` is just finalized, but `start_slot(n)` is skipped. There are two state roots we might in the `finalized_checkpoint` SSE event:

1. The state root of the finalized block, which is `get_block(finalized_checkpoint.root).state_root`.

4. The state root at slot of `start_slot(n)`, which would be the state from (1), but "skipped forward" through any skip slots.

Previously, Lighthouse would choose (2). However, we can see that when [Teku generates that event](de2b2801c8/data/beaconrestapi/src/main/java/tech/pegasys/teku/beaconrestapi/handlers/v1/events/EventSubscriptionManager.java (L171-L182)) it uses [`getStateRootFromBlockRoot`](de2b2801c8/data/provider/src/main/java/tech/pegasys/teku/api/ChainDataProvider.java (L336-L341)) which uses (1).

I have switched Lighthouse from (2) to (1). I think it's a somewhat arbitrary choice between the two, where (1) is easier to compute and is consistent with Teku.

## Notes for Reviewers

I've renamed `BeaconChain::fork_choice` to `BeaconChain::recompute_head`. Doing this helped ensure I broke all previous uses of fork choice and I also find it more descriptive. It describes an action and can't be confused with trying to get a reference to the `ForkChoice` struct.

I've changed the ordering of SSE events when a block is received. It used to be `[block, finalized, head]` and now it's `[block, head, finalized]`. It was easier this way and I don't think we were making any promises about SSE event ordering so it's not "breaking".

I've made it so fork choice will run when it's first constructed. I did this because I wanted to have a cached version of the last call to `get_head`. Ensuring `get_head` has been run *at least once* means that the cached values doesn't need to wrapped in an `Option`. This was fairly simple, it just involved passing a `slot` to the constructor so it knows *when* it's being run. When loading a fork choice from the store and a slot clock isn't handy I've just used the `slot` that was saved in the `fork_choice_store`. That seems like it would be a faithful representation of the slot when we saved it.

I added the `genesis_time: u64` to the `BeaconChain`. It's small, constant and nice to have around.

Since we're using FC for the fin/just checkpoints, we no longer get the `0x00..00` roots at genesis. You can see I had to remove a work-around in `ef-tests` here: b56be3bc2. I can't find any reason why this would be an issue, if anything I think it'll be better since the genesis-alias has caught us out a few times (0x00..00 isn't actually a real root). Edit: I did find a case where the `network` expected the 0x00..00 alias and patched it here: 3f26ac3e2.

You'll notice a lot of changes in tests. Generally, tests should be functionally equivalent. Here are the things creating the most diff-noise in tests:

- Changing tests to be `tokio::async` tests.

- Adding `.await` to fork choice, block processing and block production functions.

- Refactor of the `canonical_head` "API" provided by the `BeaconChain`. E.g., `chain.canonical_head.cached_head()` instead of `chain.canonical_head.read()`.

- Wrapping `SignedBeaconBlock` in an `Arc`.

- In the `beacon_chain/tests/block_verification`, we can't use the `lazy_static` `CHAIN_SEGMENT` variable anymore since it's generated with an async function. We just generate it in each test, not so efficient but hopefully insignificant.

I had to disable `rayon` concurrent tests in the `fork_choice` tests. This is because the use of `rayon` and `block_on` was causing a panic.

Co-authored-by: Mac L <mjladson@pm.me>

## Issue Addressed

This PR is a subset of the changes in #3134. Unstable will still not function correctly with the new builder spec once this is merged, #3134 should be used on testnets

## Proposed Changes

- Removes redundancy in "builders" (servers implementing the builder spec)

- Renames `payload-builder` flag to `builder`

- Moves from old builder RPC API to new HTTP API, but does not implement the validator registration API (implemented in https://github.com/sigp/lighthouse/pull/3194)

Co-authored-by: sean <seananderson33@gmail.com>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Lays the groundwork for builder API changes by implementing the beacon-API's new `register_validator` endpoint

## Proposed Changes

- Add a routine in the VC that runs on startup (re-try until success), once per epoch or whenever `suggested_fee_recipient` is updated, signing `ValidatorRegistrationData` and sending it to the BN.

- TODO: `gas_limit` config options https://github.com/ethereum/builder-specs/issues/17

- BN only sends VC registration data to builders on demand, but VC registration data *does update* the BN's prepare proposer cache and send an updated fcU to a local EE. This is necessary for fee recipient consistency between the blinded and full block flow in the event of fallback. Having the BN only send registration data to builders on demand gives feedback directly to the VC about relay status. Also, since the BN has no ability to sign these messages anyways (so couldn't refresh them if it wanted), and validator registration is independent of the BN head, I think this approach makes sense.

- Adds upcoming consensus spec changes for this PR https://github.com/ethereum/consensus-specs/pull/2884

- I initially applied the bit mask based on a configured application domain.. but I ended up just hard coding it here instead because that's how it's spec'd in the builder repo.

- Should application mask appear in the api?

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Resolves#3069

## Proposed Changes

Unify the `eth1-endpoints` and `execution-endpoints` flags in a backwards compatible way as described in https://github.com/sigp/lighthouse/issues/3069#issuecomment-1134219221

Users have 2 options:

1. Use multiple non auth execution endpoints for deposit processing pre-merge

2. Use a single jwt authenticated execution endpoint for both execution layer and deposit processing post merge

Related https://github.com/sigp/lighthouse/issues/3118

To enable jwt authenticated deposit processing, this PR removes the calls to `net_version` as the `net` namespace is not exposed in the auth server in execution clients.

Moving away from using `networkId` is a good step in my opinion as it doesn't provide us with any added guarantees over `chainId`. See https://github.com/ethereum/consensus-specs/issues/2163 and https://github.com/sigp/lighthouse/issues/2115

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Proposed Changes

Add a new HTTP endpoint `POST /lighthouse/analysis/block_rewards` which takes a vec of `BeaconBlock`s as input and outputs the `BlockReward`s for them.

Augment the `BlockReward` struct with the attestation data for attestations in the block, which simplifies access to this information from blockprint. Using attestation data I've been able to make blockprint up to 95% accurate across Prysm/Lighthouse/Teku/Nimbus. I hope to go even higher using a bunch of synthetic blocks produced for Prysm/Nimbus/Lodestar, which are underrepresented in the current training data.

## Issue Addressed

Following up from https://github.com/sigp/lighthouse/pull/3223#issuecomment-1158718102, it has been observed that the validator client uses vastly more memory in some compilation configurations than others. Compiling with Cross and then putting the binary into an Ubuntu 22.04 image seems to use 3x more memory than compiling with Cargo directly on Debian bullseye.

## Proposed Changes

Enable malloc metrics for the validator client. This will hopefully allow us to see the difference between the two compilation configs and compare heap fragmentation. This PR doesn't enable malloc tuning for the VC because it was found to perform significantly worse. The `--disable-malloc-tuning` flag is repurposed to just disable the metrics.

## Issue Addressed

na

## Proposed Changes

Updates libp2p to https://github.com/libp2p/rust-libp2p/pull/2662

## Additional Info

From comments on the relevant PRs listed, we should pay attention at peer management consistency, but I don't think anything weird will happen.

This is running in prater tok and sin