## Summary

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.

2. Significantly faster weak subjectivity sync as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.

3. Future proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444) as execution nodes will no longer be required to store logs permanently so we won't always have all historical logs available to us.

## More Details

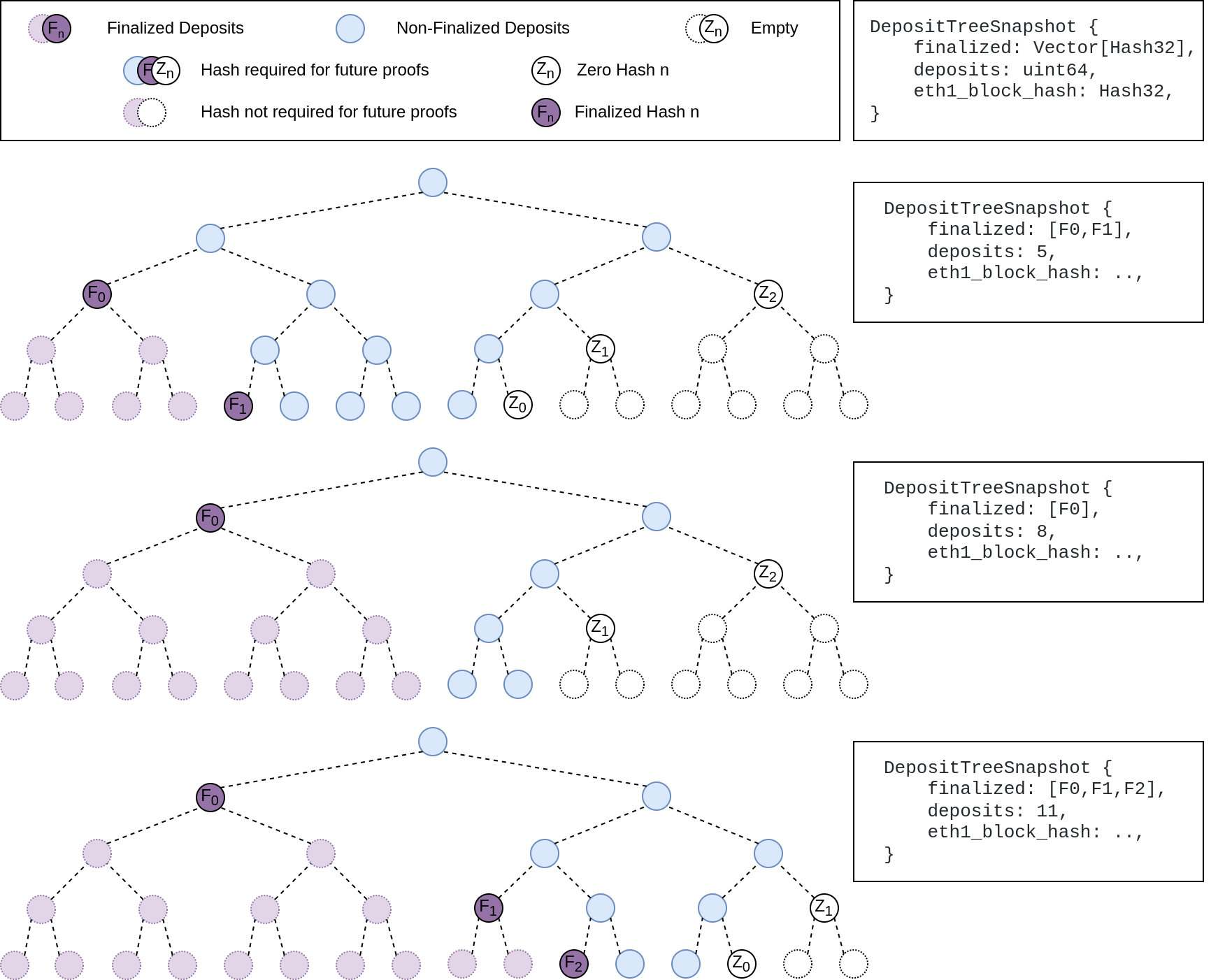

Image to illustrate how the deposit contract merkle tree evolves and finalizes along with the resulting `DepositTreeSnapshot`

## Other Considerations

I've changed the structure of the `SszDepositCache` so once you load & save your database from this version of lighthouse, you will no longer be able to load it from older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

## Issue Addressed

Updates discv5

Pending on

- [x] #3547

- [x] Alex upgrades his deps

## Proposed Changes

updates discv5 and the enr crate. The only relevant change would be some clear indications of ipv4 usage in lighthouse

## Additional Info

Functionally, this should be equivalent to the prev version.

As draft pending a discv5 release

* add capella gossip boiler plate

* get everything compiling

Co-authored-by: realbigsean <sean@sigmaprime.io

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

* small cleanup

* small cleanup

* cargo fix + some test cleanup

* improve block production

* add fixme for potential panic

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

## Issue Addressed

NA

## Proposed Changes

Bump version to `v3.2.0`

## Additional Info

- ~~Blocked on #3597~~

- ~~Blocked on #3645~~

- ~~Blocked on #3653~~

- ~~Requires additional testing~~

## Issue Addressed

N/A

## Proposed Changes

With https://github.com/sigp/lighthouse/pull/3214 we made it such that you can either have 1 auth endpoint or multiple non auth endpoints. Now that we are post merge on all networks (testnets and mainnet), we cannot progress a chain without a dedicated auth execution layer connection so there is no point in having a non-auth eth1-endpoint for syncing deposit cache.

This code removes all fallback related code in the eth1 service. We still keep the single non-auth endpoint since it's useful for testing.

## Additional Info

This removes all eth1 fallback related metrics that were relevant for the monitoring service, so we might need to change the api upstream.

## Issue Addressed

NA

## Proposed Changes

Fixes an issue introduced in #3574 where I erroneously assumed that a `crossbeam_channel` multiple receiver queue was a *broadcast* queue. This is incorrect, each message will be received by *only one* receiver. The effect of this mistake is these logs:

```

Sep 20 06:56:17.001 INFO Synced slot: 4736079, block: 0xaa8a…180d, epoch: 148002, finalized_epoch: 148000, finalized_root: 0x2775…47f2, exec_hash: 0x2ca5…ffde (verified), peers: 6, service: slot_notifier

Sep 20 06:56:23.237 ERRO Unable to validate attestation error: CommitteeCacheWait(RecvError), peer_id: 16Uiu2HAm2Jnnj8868tb7hCta1rmkXUf5YjqUH1YPj35DCwNyeEzs, type: "aggregated", slot: Slot(4736047), beacon_block_root: 0x88d318534b1010e0ebd79aed60b6b6da1d70357d72b271c01adf55c2b46206c1

```

## Additional Info

NA

## Issue Addressed

https://github.com/ethereum/beacon-APIs/pull/222

## Proposed Changes

Update Lighthouse's randao verification API to match the `beacon-APIs` spec. We implemented the API before spec stabilisation, and it changed slightly in the course of review.

Rather than a flag `verify_randao` taking a boolean value, the new API uses a `skip_randao_verification` flag which takes no argument. The new spec also requires the randao reveal to be present and equal to the point-at-infinity when `skip_randao_verification` is set.

I've also updated the `POST /lighthouse/analysis/block_rewards` API to take blinded blocks as input, as the execution payload is irrelevant and we may want to assess blocks produced by builders.

## Additional Info

This is technically a breaking change, but seeing as I suspect I'm the only one using these parameters/APIs, I think we're OK to include this in a patch release.

## Issue Addressed

#3285

## Proposed Changes

Adds support for specifying histogram with buckets and adds new metric buckets for metrics mentioned in issue.

## Additional Info

Need some help for the buckets.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

Closes#3514

## Proposed Changes

- Change default monitoring endpoint frequency to 120 seconds to fit with 30k requests/month limit.

- Allow configuration of the monitoring endpoint frequency using `--monitoring-endpoint-frequency N` where `N` is a value in seconds.

## Issue Addressed

[Have --checkpoint-sync-url timeout](https://github.com/sigp/lighthouse/issues/3478)

## Proposed Changes

I added a parameter for `get_bytes_opt_accept_header<U: IntoUrl>` which accept a timeout duration, and modified the body of `get_beacon_blocks_ssz` and `get_debug_beacon_states_ssz` to pass corresponding timeout durations.

## Issue Addressed

We currently subscribe to attestation subnets as soon as the subscription arrives (one epoch in advance), this makes it so that subscriptions for future slots are scheduled instead of done immediately.

## Proposed Changes

- Schedule subscriptions to subnets for future slots.

- Finish removing hashmap_delay, in favor of [delay_map](https://github.com/AgeManning/delay_map). This was the only remaining service to do this.

- Subscriptions for past slots are rejected, before we would subscribe for one slot.

- Add a new test for subscriptions that are not consecutive.

## Additional Info

This is also an effort in making the code easier to understand

## Issue Addressed

NA

## Proposed Changes

As we've seen on Prater, there seems to be a correlation between these messages

```

WARN Not enough time for a discovery search subnet_id: ExactSubnet { subnet_id: SubnetId(19), slot: Slot(3742336) }, service: attestation_service

```

... and nodes falling 20-30 slots behind the head for short periods. These nodes are running ~20k Prater validators.

After running some metrics, I can see that the `network_recv` channel is processing ~250k `AttestationSubscribe` messages per minute. It occurred to me that perhaps the `AttestationSubscribe` messages are "washing out" the `SendRequest` and `SendResponse` messages. In this PR I separate the `AttestationSubscribe` and `SyncCommitteeSubscribe` messages into their own queue so the `tokio::select!` in the `NetworkService` can still process the other messages in the `network_recv` channel without necessarily having to clear all the subscription messages first.

~~I've also added filter to the HTTP API to prevent duplicate subscriptions going to the network service.~~

## Additional Info

- Currently being tested on Prater

## Issue Addressed

NA

## Proposed Changes

Bump versions to v3.0.0

## Additional Info

- ~~Blocked on #3439~~

- ~~Blocked on #3459~~

- ~~Blocked on #3463~~

- ~~Blocked on #3462~~

- ~~Requires further testing~~

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed

N/A

## Proposed Changes

Fix clippy lints for latest rust version 1.63. I have allowed the [derive_partial_eq_without_eq](https://rust-lang.github.io/rust-clippy/master/index.html#derive_partial_eq_without_eq) lint as satisfying this lint would result in more code that we might not want and I feel it's not required.

Happy to fix this lint across lighthouse if required though.

## Issue Addressed

N/A

## Proposed Changes

If the build tree is not a git repository, the unit test will fail. This PR fixes the issue.

## Additional Info

N/A

## Issue Addressed

Resolves#3388Resolves#2638

## Proposed Changes

- Return the `BellatrixPreset` on `/eth/v1/config/spec` by default.

- Allow users to opt out of this by providing `--http-spec-fork=altair` (unless there's a Bellatrix fork epoch set).

- Add the Altair constants from #2638 and make serving the constants non-optional (the `http-disable-legacy-spec` flag is deprecated).

- Modify the VC to only read the `Config` and not to log extra fields. This prevents it from having to muck around parsing the `ConfigAndPreset` fields it doesn't need.

## Additional Info

This change is backwards-compatible for the VC and the BN, but is marked as a breaking change for the removal of `--http-disable-legacy-spec`.

I tried making `Config` a `superstruct` too, but getting the automatic decoding to work was a huge pain and was going to require a lot of hacks, so I gave up in favour of keeping the default-based approach we have now.

## Issue Addressed

NA

## Proposed Changes

Update bootnodes for Prater. There are new IP addresses for the Sigma Prime nodes. Teku and Nimbus nodes were also added.

## Additional Info

Related: 24760cd4b4

## Issue Addressed

NA

## Proposed Changes

Modifies `lcli skip-slots` and `lcli transition-blocks` allow them to source blocks/states from a beaconAPI and also gives them some more features to assist with benchmarking.

## Additional Info

Breaks the current `lcli skip-slots` and `lcli transition-blocks` APIs by changing some flag names. It should be simple enough to figure out the changes via `--help`.

Currently blocked on #3263.

## Issue Addressed

https://github.com/status-im/nimbus-eth2/issues/3930

## Proposed Changes

We can trivially support beacon nodes which do not provide the `is_optimistic` field by wrapping the field in an `Option`.

## Issue Addressed

Fixes an issue identified by @remyroy whereby we were logging a recommendation to use `--eth1-endpoints` on merge-ready setups (when the execution layer was out of sync).

## Proposed Changes

I took the opportunity to clean up the other eth1-related logs, replacing "eth1" by "deposit contract" or "execution" as appropriate.

I've downgraded the severity of the `CRIT` log to `ERRO` and removed most of the recommendation text. The reason being that users lacking an execution endpoint will be informed by the new `WARN Not merge ready` log pre-Bellatrix, or the regular errors from block verification post-Bellatrix.

## Issue Addressed

https://github.com/sigp/lighthouse/issues/3091

Extends https://github.com/sigp/lighthouse/pull/3062, adding pre-bellatrix block support on blinded endpoints and allowing the normal proposal flow (local payload construction) on blinded endpoints. This resulted in better fallback logic because the VC will not have to switch endpoints on failure in the BN <> Builder API, the BN can just fallback immediately and without repeating block processing that it shouldn't need to. We can also keep VC fallback from the VC<>BN API's blinded endpoint to full endpoint.

## Proposed Changes

- Pre-bellatrix blocks on blinded endpoints

- Add a new `PayloadCache` to the execution layer

- Better fallback-from-builder logic

## Todos

- [x] Remove VC transition logic

- [x] Add logic to only enable builder flow after Merge transition finalization

- [x] Tests

- [x] Fix metrics

- [x] Rustdocs

Co-authored-by: Mac L <mjladson@pm.me>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

As specified in the [Beacon Chain API specs](https://github.com/ethereum/beacon-APIs/blob/master/apis/node/syncing.yaml#L32-L35) we should return `is_optimistic` as part of the response to a query for the `eth/v1/node/syncing` endpoint.

## Proposed Changes

Compute the optimistic status of the head and add it to the `SyncingData` response.

## Issue Addressed

#3031

## Proposed Changes

Updates the following API endpoints to conform with https://github.com/ethereum/beacon-APIs/pull/190 and https://github.com/ethereum/beacon-APIs/pull/196

- [x] `beacon/states/{state_id}/root`

- [x] `beacon/states/{state_id}/fork`

- [x] `beacon/states/{state_id}/finality_checkpoints`

- [x] `beacon/states/{state_id}/validators`

- [x] `beacon/states/{state_id}/validators/{validator_id}`

- [x] `beacon/states/{state_id}/validator_balances`

- [x] `beacon/states/{state_id}/committees`

- [x] `beacon/states/{state_id}/sync_committees`

- [x] `beacon/headers`

- [x] `beacon/headers/{block_id}`

- [x] `beacon/blocks/{block_id}`

- [x] `beacon/blocks/{block_id}/root`

- [x] `beacon/blocks/{block_id}/attestations`

- [x] `debug/beacon/states/{state_id}`

- [x] `debug/beacon/heads`

- [x] `validator/duties/attester/{epoch}`

- [x] `validator/duties/proposer/{epoch}`

- [x] `validator/duties/sync/{epoch}`

Updates the following Server-Sent Events:

- [x] `events?topics=head`

- [x] `events?topics=block`

- [x] `events?topics=finalized_checkpoint`

- [x] `events?topics=chain_reorg`

## Backwards Incompatible

There is a very minor breaking change with the way the API now handles requests to `beacon/blocks/{block_id}/root` and `beacon/states/{state_id}/root` when `block_id` or `state_id` is the `Root` variant of `BlockId` and `StateId` respectively.

Previously a request to a non-existent root would simply echo the root back to the requester:

```

curl "http://localhost:5052/eth/v1/beacon/states/0xaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa/root"

{"data":{"root":"0xaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa"}}

```

Now it will return a `404`:

```

curl "http://localhost:5052/eth/v1/beacon/blocks/0xaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa/root"

{"code":404,"message":"NOT_FOUND: beacon block with root 0xaaaa…aaaa","stacktraces":[]}

```

In addition to this is the block root `0x0000000000000000000000000000000000000000000000000000000000000000` previously would return the genesis block. It will now return a `404`:

```

curl "http://localhost:5052/eth/v1/beacon/blocks/0x0000000000000000000000000000000000000000000000000000000000000000"

{"code":404,"message":"NOT_FOUND: beacon block with root 0x0000…0000","stacktraces":[]}

```

## Additional Info

- `execution_optimistic` is always set, and will return `false` pre-Bellatrix. I am also open to the idea of doing something like `#[serde(skip_serializing_if = "Option::is_none")]`.

- The value of `execution_optimistic` is set to `false` where possible. Any computation that is reliant on the `head` will simply use the `ExecutionStatus` of the head (unless the head block is pre-Bellatrix).

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

- Resolves#3338

## Proposed Changes

This PR adds a new `--network goerli` flag that reuses the [Prater network configs](https://github.com/sigp/lighthouse/tree/stable/common/eth2_network_config/built_in_network_configs/prater).

As you'll see in #3338, there are several approaches to the problem of the Goerli/Prater alias. This approach achieves:

1. No duplication of the genesis state between Goerli and Prater.

- Upside: the genesis state for Prater is ~17mb, duplication would increase the size of the binary by that much.

2. When the user supplies `--network goerli`, they will get a datadir in `~/.lighthouse/goerli`.

- Upside: our docs stay correct when they declare a datadir is located at `~/.lighthouse/{network}`

- Downside: switching from `--network prater` to `--network goerli` will require some manual migration.

3. When using `--network goerli`, the [`config/spec`](https://ethereum.github.io/beacon-APIs/#/Config/getSpec) endpoint will return a [`CONFIG_NAME`](02a2b71d64/configs/mainnet.yaml (L11)) of "prater".

- Upside: VC running `--network prater` will still think it's on the same network as one using `--network goerli`.

- Downside: potentially confusing.

#3348 achieves the same goal as this PR with a different approach and set of trade-offs.

## Additional Info

### Notes for reviewers:

In e4896c2682 you'll see that I remove the `$name_str` by just using `stringify!($name_ident)` instead. This is a simplification that should have have been there in the first place.

Then, in 90b5e22fca I reclaim that second parameter with a new purpose; to specify the directory from which to load configs.

## Issue Addressed

#3302

## Proposed Changes

Move the `reqwest::Client` from being initialized per-validator, to being initialized per distinct Web3Signer.

This is done by placing the `Client` into a `HashMap` keyed by the definition of the Web3Signer as specified by the `ValidatorDefintion`. This will allow multiple Web3Signers to be used with a single VC and also maintains backwards compatibility.

## Additional Info

This was done to reduce the memory used by the VC when connecting to a Web3Signer.

I set up a local testnet using [a custom script](https://github.com/macladson/lighthouse/tree/web3signer-local-test/scripts/local_testnet_web3signer) and ran a VC with 200 validator keys:

VC with Web3Signer:

- `unstable`: ~200MB

- With fix: ~50MB

VC with Local Signer:

- `unstable`: ~35MB

- With fix: ~35MB

> I'm seeing some fragmentation with the VC using the Web3Signer, but not when using a local signer (this is most likely due to making lots of http requests and dealing with lots of JSON objects). I tested the above using `MALLOC_ARENA_MAX=1` to try to reduce the fragmentation. Without it, the values are around +50MB for both `unstable` and the fix.

## Issue Addressed

Resolves#3276.

## Proposed Changes

Add a timeout for the sync committee contributions at 1/4 the slot length such that we may be able to try backup beacon nodes in the case of contribution post failure.

## Additional Info

1/4 slot length seemed standard for the timeouts, but may want to decrease this to 1/2.

I did not find any timeout related / sync committee related tests, so there are no tests. Happy to write some with a bit of guidance.