Checkpointz now supports deposit snapshots, but [it only supports returning them in JSON](https://github.com/ethpandaops/checkpointz/issues/74), so I've modified Lighthouse to request them in JSON by default.

There are also `get_opt` and `get_opt_with_timeout` methods, which seem to expect JSON responses but were not adding `Accept: application/json` to the request headers, so I fixed that as well.

Also, the beacon API puts quantities in quotes, so I fixed that in the snapshot JSON serialization.
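To illustrate the quoting convention, here is a minimal std-only Rust sketch. It assumes a hypothetical `SnapshotSummary` struct with two example fields and hand-rolled JSON; it is not the actual `DepositTreeSnapshot` serialization, which would more typically be handled with serde attributes.

```rust
/// Hypothetical subset of a deposit snapshot, used only to illustrate quoting.
pub struct SnapshotSummary {
    pub deposit_count: u64,
    pub execution_block_height: u64,
}

/// Encode a u64 quantity the way the beacon API expects: as a quoted decimal string.
pub fn quote_u64(value: u64) -> String {
    format!("\"{}\"", value)
}

/// Parse a quoted quantity such as `"42"` back into a u64.
/// Returns `None` if the quotes are missing or the digits are invalid.
pub fn unquote_u64(s: &str) -> Option<u64> {
    let inner = s.strip_prefix('"')?.strip_suffix('"')?;
    inner.parse().ok()
}

impl SnapshotSummary {
    /// Serialize to a compact JSON object with both quantities quoted.
    pub fn to_json(&self) -> String {
        format!(
            "{{\"deposit_count\":{},\"execution_block_height\":{}}}",
            quote_u64(self.deposit_count),
            quote_u64(self.execution_block_height)
        )
    }
}
```

With this convention, `deposit_count: 5` is emitted as `"deposit_count":"5"`, and a bare `42` is rejected by `unquote_u64`.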

## Issue Addressed

#3510

## Proposed Changes

- Replace `mime` with `MediaTypeList`

- Remove the `parse_accept` fn, as `MediaTypeList` provides this built in

- Get the supported media type with the highest q-factor in a single pass over the list, without sorting
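A minimal std-only sketch of the single-pass selection, under stated assumptions: it hand-parses the `Accept` header instead of using `MediaTypeList`, the `SUPPORTED` list and `best_supported` name are hypothetical, and wildcard handling is reduced to treating `*/*` as the first supported type.

```rust
/// Media types this hypothetical server can produce, in order of preference.
const SUPPORTED: [&str; 2] = ["application/json", "application/octet-stream"];

/// Parse the q-factor of one `Accept` entry, defaulting to 1.0 when absent.
fn q_factor(params: &[&str]) -> f32 {
    params
        .iter()
        .filter_map(|p| p.trim().strip_prefix("q="))
        .next()
        .and_then(|q| q.parse::<f32>().ok())
        .unwrap_or(1.0)
}

/// Return the supported media type with the highest q-factor, scanning the
/// header once and keeping a running best instead of sorting.
/// Ties keep the first entry seen; entries with q=0 are treated as refused.
pub fn best_supported(accept: &str) -> Option<&'static str> {
    let mut best: Option<(&'static str, f32)> = None;
    for entry in accept.split(',') {
        let mut parts = entry.split(';');
        let media = parts.next().unwrap_or("").trim();
        let params: Vec<&str> = parts.collect();
        let q = q_factor(&params);
        if q <= 0.0 {
            continue;
        }
        // `*/*` maps to our preferred type; otherwise require an exact match.
        let candidate = if media == "*/*" {
            Some(SUPPORTED[0])
        } else {
            SUPPORTED.iter().copied().find(|s| *s == media)
        };
        if let Some(c) = candidate {
            if best.map_or(true, |(_, bq)| q > bq) {
                best = Some((c, q));
            }
        }
    }
    best.map(|(c, _)| c)
}
```

Keeping only a running maximum makes the selection O(n) in the number of header entries, and unsupported types are simply skipped rather than sorted and then filtered.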

## Additional Info

I have addressed the suggested changes from the previous [PR](https://github.com/sigp/lighthouse/pull/3520#discussion_r959048633).

## Issue Addressed

Closes https://github.com/sigp/lighthouse/issues/4291, part of #3613.

## Proposed Changes

- Implement the `el_offline` field on `/eth/v1/node/syncing`. We set `el_offline=true` if:

  - The EL's internal status is `Offline` or `AuthFailed`, _or_

  - The most recent call to `newPayload` resulted in an error (more on this in a moment).

- Use the `el_offline` field in the VC to mark nodes with offline ELs as _unsynced_. These nodes will still be used, but only after synced nodes.

- Overhaul the usage of `RequireSynced` so that `::No` is used almost everywhere. The `--allow-unsynced` flag was broken and had the opposite of its intended effect, so it has been deprecated.

- Add tests for the EL being offline on the upcheck call, and being offline due to the newPayload check.
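The `el_offline` rule and the VC-side ordering can be summarised in a short std-only sketch. `EngineState`, `BeaconNodeHealth` and both functions are hypothetical stand-ins rather than Lighthouse's actual types; the point is only the boolean logic and the "unsynced nodes go last, but are kept" ordering.

```rust
/// Hypothetical EL status as tracked by the beacon node.
#[derive(Clone, Copy, Debug, PartialEq, Eq)]
pub enum EngineState {
    Synced,
    Syncing,
    Offline,
    AuthFailed,
}

/// `el_offline` is true if the EL is offline or auth-failed, or if the most
/// recent `newPayload` call returned an error.
pub fn el_offline(state: EngineState, last_new_payload_errored: bool) -> bool {
    matches!(state, EngineState::Offline | EngineState::AuthFailed) || last_new_payload_errored
}

/// Hypothetical view of a beacon node from the VC's perspective.
#[derive(Clone, Debug)]
pub struct BeaconNodeHealth {
    pub name: &'static str,
    pub is_syncing: bool,
    pub el_offline: bool,
}

/// Order candidate nodes so that synced nodes (BN synced and EL online) come
/// first; nodes with an offline EL are kept, just tried later.
/// `sort_by_key` is stable, so configured order is preserved within each group.
pub fn order_nodes(mut nodes: Vec<BeaconNodeHealth>) -> Vec<&'static str> {
    nodes.sort_by_key(|n| n.is_syncing || n.el_offline);
    nodes.into_iter().map(|n| n.name).collect()
}
```

Because `false` sorts before `true`, healthy nodes are tried first while unhealthy ones remain available as a last resort.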

## Why track `newPayload` errors?

Tracking the EL's online/offline status is too coarse-grained to be useful in practice, because:

- If the EL is timing out on some calls, it's unlikely to time out on the `upcheck` call, which is _just_ `eth_syncing`. Every failed call is followed by an upcheck [here](693886b941/beacon_node/execution_layer/src/engines.rs (L372-L380)), which has the effect of masking the failure and keeping the status _online_.

- The `newPayload` call is the most likely to time out. It's the call in which ELs tend to do most of their work (often 1-2 seconds), with `forkchoiceUpdated` usually returning much faster (<50ms).

- If `newPayload` is failing consistently (e.g. timing out) then this is a good indication that either the node's EL is in trouble, or the network as a whole is. In the first case validator clients _should_ prefer other BNs if they have one available. In the second case, all of their BNs will likely report `el_offline` and they'll just have to proceed with trying to use them.

## Additional Changes

- Add a utility method `ForkName::latest`, which is convenient for writing tests and probably useful elsewhere too.

- Delete some stale comments from when we used to support multiple execution nodes.

## Issue Addressed

#4233

## Proposed Changes

Remove the `best_justified_checkpoint` from the `PersistedForkChoiceStore` type as it is now unused.

Additionally, remove the `Option`s wrapping the `justified_checkpoint` and `finalized_checkpoint` fields on `ProtoNode`, which were only present to facilitate a previous migration.

Include the necessary code to facilitate the migration to a new DB schema.

## Issue Addressed

#4146

## Proposed Changes

Removes the `ExecutionOptimisticForkVersionedResponse` type and the associated Beacon API endpoint which is now deprecated. Also removes the test associated with the endpoint.

> This is currently a WIP and all features are subject to alteration or removal at any time.

## Overview

The successor to #2873.

Contains the backbone of `beacon.watch` including syncing code, the initial API, and several core database tables.

See `watch/README.md` for more information, requirements and usage.

## Issue Addressed

Addresses #4117

## Proposed Changes

See https://github.com/ethereum/keymanager-APIs/pull/58 for proposed API specification.

## TODO

- [x] ~~Add submission to BN~~

  - removed, see discussion in [keymanager API](https://github.com/ethereum/keymanager-APIs/pull/58)

- [x] ~~Add flag to allow voluntary exit via the API~~

  - no longer needed now that the VC doesn't submit exits directly

- [x] ~~Additional verification / checks, e.g. if validator on same network as BN~~

  - to be done on client side

- [x] ~~Potentially wait for the message to propagate and return some exit information in the response~~

  - not required

- [x] Update http tests

- [x] ~~Update lighthouse book~~

  - not required if this endpoint makes it to the standard keymanager API

Co-authored-by: Paul Hauner <paul@paulhauner.com>

Co-authored-by: Jimmy Chen <jimmy@sigmaprime.io>

## Issue Addressed

#3708

## Proposed Changes

- Add `is_finalized_block` method to `BeaconChain` in `beacon_node/beacon_chain/src/beacon_chain.rs`.

- Add `is_finalized_state` method to `BeaconChain` in `beacon_node/beacon_chain/src/beacon_chain.rs`.

- Add `fork_and_execution_optimistic_and_finalized` in `beacon_node/http_api/src/state_id.rs`.

- Add `ExecutionOptimisticFinalizedForkVersionedResponse` type in `consensus/types/src/fork_versioned_response.rs`.

- Add `execution_optimistic_finalized_fork_versioned_response` function in `beacon_node/http_api/src/version.rs`.

- Add `ExecutionOptimisticFinalizedResponse` type in `common/eth2/src/types.rs`.

- Add `add_execution_optimistic_finalized` method in `common/eth2/src/types.rs`.

- Update API response methods to include the `finalized` field.

- Remove `execution_optimistic_fork_versioned_response`
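For intuition about what a check like `is_finalized_block` has to establish, here is a std-only sketch under stated assumptions: a block is treated as finalized if its slot is no later than the first slot of the finalized epoch *and* it is the canonical block at its slot. The `canonical_root_at_slot` closure, the `Root` alias and the fixed 32-slot epoch are simplifications, not the `BeaconChain` API.

```rust
/// Slots per epoch (mainnet value), fixed here for simplicity.
pub const SLOTS_PER_EPOCH: u64 = 32;

/// Simplified 32-byte block root.
pub type Root = [u8; 32];

/// First slot of an epoch.
pub fn epoch_start_slot(epoch: u64) -> u64 {
    epoch * SLOTS_PER_EPOCH
}

/// Sketch of a finalization check: the block must not be newer than the
/// finalized checkpoint's start slot, and it must be the block the canonical
/// chain holds at its slot (making it an ancestor of the finalized checkpoint).
pub fn is_finalized_block<F>(
    block_root: Root,
    block_slot: u64,
    finalized_epoch: u64,
    canonical_root_at_slot: F,
) -> bool
where
    F: Fn(u64) -> Option<Root>,
{
    let finalized_slot = epoch_start_slot(finalized_epoch);
    block_slot <= finalized_slot && canonical_root_at_slot(block_slot) == Some(block_root)
}
```

Both conditions matter: a canonical block after the finalized slot is not yet finalized, and a non-canonical block at an old slot never will be.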

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed


https://github.com/sigp/lighthouse/issues/3669

## Proposed Changes


- A new API to fetch fork choice data, as specified [here](https://github.com/ethereum/beacon-APIs/pull/232)

- A new integration test to test the new API

## Additional Info


- The `extra_data` field specified in the beacon-API spec is not implemented; please let me know if I should implement it.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

In #4027 I forgot to add the `parent_block_number` to the payload attributes SSE.

## Proposed Changes

Compute the parent block number while computing the pre-payload attributes. Pass it on to the SSE stream.

## Additional Info

Not essential for v3.5.1, as I suspect most builders don't need the `parent_block_number`. However, I would like to use it for my dummy no-op builder.

## Issue Addressed

Closes #3896, closes #3998, closes #3700

## Proposed Changes

- Optimise the calculation of withdrawals for payload attributes by avoiding state clones, avoiding unnecessary state advances and reading from the snapshot cache if possible.

- Use the execution layer's payload attributes cache to avoid re-calculating payload attributes. I actually implemented a new LRU cache just for withdrawals but it had the exact same key and most of the same data as the existing payload attributes cache, so I deleted it.

- Add a new SSE event that fires when payloadAttributes are calculated. This is useful for block builders, a la https://github.com/ethereum/beacon-APIs/issues/244.

- Add a new CLI flag `--always-prepare-payload` which forces payload attributes to be sent with every fcU regardless of connected proposers. This is intended for use by builders/relays.

For maximum effect, the flags I've been using to run Lighthouse in "payload builder mode" are:

```
--always-prepare-payload \
--prepare-payload-lookahead 12000 \
--suggested-fee-recipient 0x0000000000000000000000000000000000000000
```

The fee recipient is required so Lighthouse has something to pack in the payload attributes (it can be ignored by the builder). The lookahead causes fcU to be sent at the start of every slot rather than at 8s. As usual, fcU will also be sent after each change of head block. I think this combination is sufficient for builders to build on all viable heads. Often there will be two fcU (and two payload attributes) sent for the same slot: one sent at the start of the slot with the head from `n - 1` as the parent, and one sent after the block arrives with `n` as the parent.
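The timing effect of the lookahead flag can be checked with a little arithmetic. This sketch assumes 12-second slots and that attributes for slot `n + 1` are prepared at `start(n + 1) - lookahead`, so a 4000 ms lookahead corresponds to the 8 s mark mentioned above and 12000 ms to the start of slot `n`. Both function names are hypothetical.

```rust
use std::time::Duration;

/// Offset into slot `n` at which payload attributes for slot `n + 1` would be
/// prepared, given a lookahead. Saturates at zero if the lookahead exceeds
/// one slot.
pub fn prepare_offset_in_slot(slot_duration: Duration, lookahead: Duration) -> Duration {
    slot_duration.saturating_sub(lookahead)
}

/// Whether payload attributes should accompany a forkchoiceUpdated call:
/// either a connected proposer is known for the next slot, or the node is
/// forced to always prepare (the "builder mode" behaviour described above).
pub fn should_send_payload_attributes(always_prepare_payload: bool, proposer_known: bool) -> bool {
    always_prepare_payload || proposer_known
}
```

Under these assumptions, a 12000 ms lookahead yields an offset of zero, i.e. attributes are prepared as soon as slot `n` begins.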

Example usage of the new event stream:

```bash
curl -N "http://localhost:5052/eth/v1/events?topics=payload_attributes"
```

## Additional Info

- [x] Tests added by updating the proposer re-org tests. This has the benefit of testing the proposer re-org code paths with withdrawals too, confirming that the new changes don't interact poorly.

- [ ] Benchmarking with `blockdreamer` on devnet-7 showed promising results but I'm yet to do a comparison to `unstable`.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

Cleans up all the remnants of 4844 in Capella. This ensures that when 4844 is reviewed, nothing is missed because it was already included here.

## Proposed Changes

drop a bomb on every 4844 thing

## Additional Info

Merge process I did (locally) is as follows:

- squash merge to produce one commit

- in a new branch off `unstable` containing the squashed commit, create a `git revert HEAD` commit

- merge that new branch onto 4844 with `--strategy ours`

- compare local 4844 to remote 4844 and make sure the diff is empty

- enjoy

Co-authored-by: Paul Hauner <paul@paulhauner.com>

* Import BLS to execution changes before Capella

* Test for BLS to execution change HTTP API

* Pack BLS to execution changes in LIFO order

* Remove unused var

* Clippy
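Packing in LIFO order means the most recently received changes are included first. A minimal sketch, assuming the pool is a slice in arrival order and the Capella per-block cap `MAX_BLS_TO_EXECUTION_CHANGES = 16`; `BlsChange` is a hypothetical stand-in for `SignedBlsToExecutionChange`.

```rust
/// Capella per-block limit on BLS-to-execution changes.
pub const MAX_BLS_TO_EXECUTION_CHANGES: usize = 16;

/// Hypothetical stand-in for a signed BLS-to-execution change.
#[derive(Clone, Debug, PartialEq, Eq)]
pub struct BlsChange {
    pub validator_index: u64,
}

/// Select changes for a block, newest first (LIFO), up to `limit`.
/// `pool` is assumed to be in arrival order (oldest at index 0).
pub fn pack_lifo(pool: &[BlsChange], limit: usize) -> Vec<BlsChange> {
    pool.iter().rev().take(limit).cloned().collect()
}
```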

## Summary

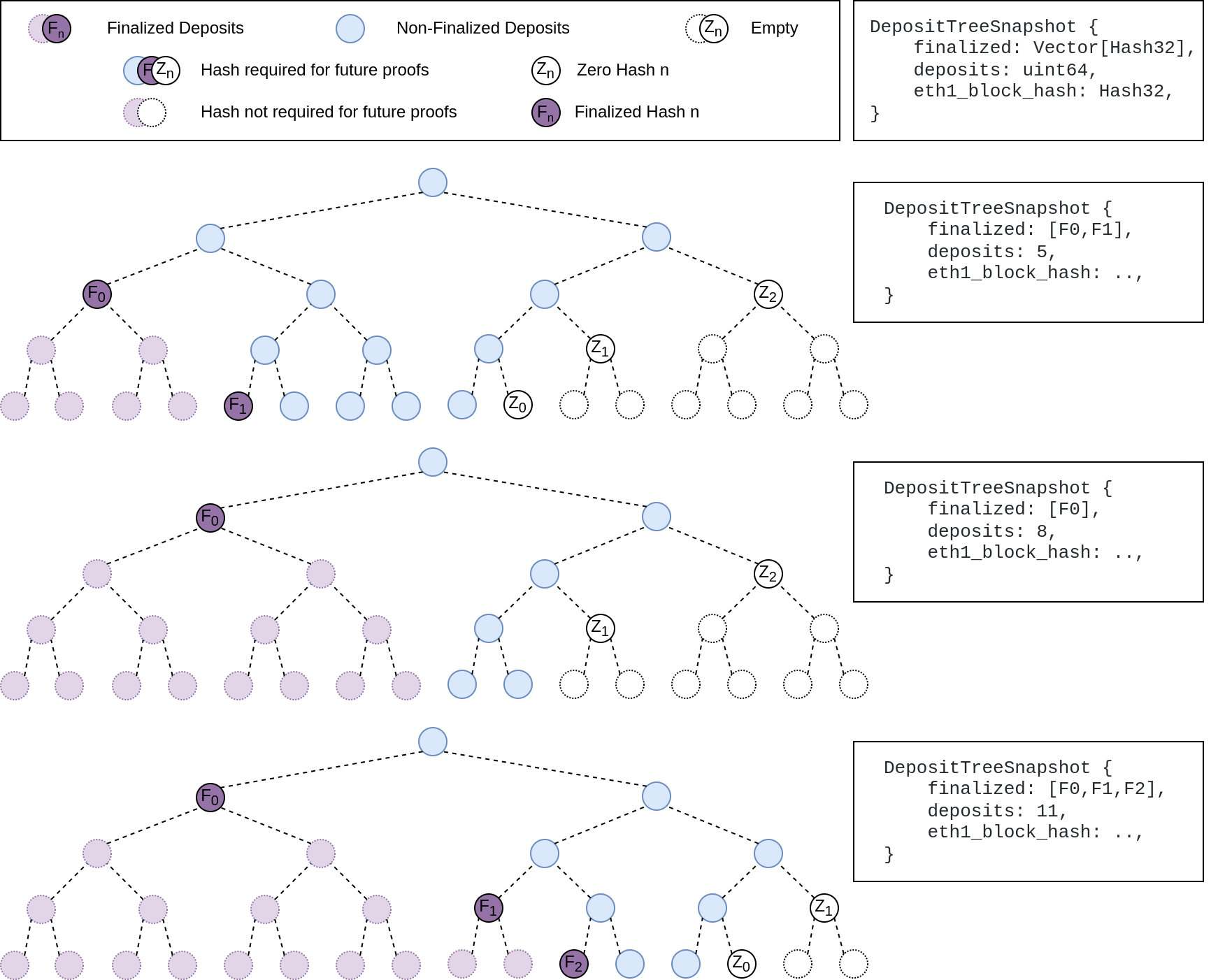

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.

2. Significantly faster weak subjectivity sync, as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires at most `floor(log2(N)) + 1` hashes (one per set bit of `N`). The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.

3. Future-proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444): execution nodes will no longer be required to store logs permanently, so we won't always have all historical logs available to us.
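Why so few hashes suffice: the first `N` finalized leaves can always be covered by complete, left-aligned power-of-two subtrees, one per set bit of `N`, and only each subtree's root needs to be kept. The std-only sketch below computes those leaf ranges and deliberately omits hashing; `finalized_subtrees` is a hypothetical name, not Lighthouse's deposit tree code.

```rust
/// Decompose the first `n` finalized leaves into complete, left-aligned
/// power-of-two subtrees. Each returned `(start, size)` range would be
/// represented by a single stored hash (its subtree root).
pub fn finalized_subtrees(n: u64) -> Vec<(u64, u64)> {
    let mut ranges = Vec::new();
    let mut start: u64 = 0;
    // Walk the bits of `n` from most to least significant.
    for bit in (0..64).rev() {
        let size = 1u64 << bit;
        if n & size != 0 {
            ranges.push((start, size));
            start += size;
        }
    }
    ranges
}
```

For example, 13 finalized deposits (`0b1101`) need three stored roots covering leaves `0..8`, `8..12` and `12..13`.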

## More Details

The image above illustrates how the deposit contract Merkle tree evolves and finalizes, along with the resulting `DepositTreeSnapshot`.

## Other Considerations

I've changed the structure of the `SszDepositCache`, so once you load and save your database with this version of Lighthouse, you will no longer be able to load it with older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

* add capella gossip boiler plate

* get everything compiling

Co-authored-by: realbigsean <sean@sigmaprime.io>

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

* small cleanup

* small cleanup

* cargo fix + some test cleanup

* improve block production

* add fixme for potential panic

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

## Issue Addressed

https://github.com/ethereum/beacon-APIs/pull/222

## Proposed Changes

Update Lighthouse's randao verification API to match the `beacon-APIs` spec. We implemented the API before spec stabilisation, and it changed slightly in the course of review.

Rather than a flag `verify_randao` taking a boolean value, the new API uses a `skip_randao_verification` flag which takes no argument. The new spec also requires the randao reveal to be present and equal to the point-at-infinity when `skip_randao_verification` is set.
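A sketch of the new parameter semantics, assuming the standard compressed encoding of the BLS G2 point at infinity (`0xc0` followed by 95 zero bytes). The helper names and the simple query-string scan are hypothetical and do not reflect Lighthouse's actual HTTP handler.

```rust
/// Compressed encoding of the G2 point at infinity: 0xc0 then 95 zero bytes.
pub fn infinity_signature() -> [u8; 96] {
    let mut bytes = [0u8; 96];
    bytes[0] = 0xc0;
    bytes
}

/// True if the query string contains the `skip_randao_verification` flag.
/// The flag takes no argument, so only the key's presence is checked.
pub fn has_skip_flag(query: &str) -> bool {
    query
        .split('&')
        .any(|pair| pair.split('=').next() == Some("skip_randao_verification"))
}

/// When the flag is set, the randao reveal must be present and equal to the
/// point at infinity; otherwise it would be verified normally (not shown).
pub fn check_randao_reveal(skip: bool, randao_reveal: &[u8; 96]) -> Result<(), String> {
    if skip && *randao_reveal != infinity_signature() {
        return Err("randao_reveal must be the point at infinity when skipping verification".to_string());
    }
    Ok(())
}
```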

I've also updated the `POST /lighthouse/analysis/block_rewards` API to take blinded blocks as input, as the execution payload is irrelevant and we may want to assess blocks produced by builders.

## Additional Info

This is technically a breaking change, but seeing as I suspect I'm the only one using these parameters/APIs, I think we're OK to include this in a patch release.

## Issue Addressed

[Have --checkpoint-sync-url timeout](https://github.com/sigp/lighthouse/issues/3478)

## Proposed Changes

I added a timeout duration parameter to `get_bytes_opt_accept_header<U: IntoUrl>`, and modified the bodies of `get_beacon_blocks_ssz` and `get_debug_beacon_states_ssz` to pass the corresponding timeout durations.

## Issue Addressed

NA

## Proposed Changes

As we've seen on Prater, there seems to be a correlation between these messages

```
WARN Not enough time for a discovery search subnet_id: ExactSubnet { subnet_id: SubnetId(19), slot: Slot(3742336) }, service: attestation_service
```

... and nodes falling 20-30 slots behind the head for short periods. These nodes are running ~20k Prater validators.

After gathering some metrics, I can see that the `network_recv` channel is processing ~250k `AttestationSubscribe` messages per minute. It occurred to me that perhaps the `AttestationSubscribe` messages are "washing out" the `SendRequest` and `SendResponse` messages. In this PR I separate the `AttestationSubscribe` and `SyncCommitteeSubscribe` messages into their own queue, so the `tokio::select!` in the `NetworkService` can still process the other messages in the `network_recv` channel without first having to clear all the subscription messages.
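A toy model of why separating the queues helps, using std `VecDeque`s in place of tokio channels: with a single FIFO, a `SendRequest` queued behind a burst of subscriptions waits for all of them, while with a separate subscription queue and alternating polling (a rough stand-in for `tokio::select!`, which by default picks among ready branches pseudo-randomly) it is handled almost immediately. `Msg` and both functions are hypothetical.

```rust
use std::collections::VecDeque;

/// Hypothetical simplification of network service messages.
#[derive(Debug, Clone, PartialEq, Eq)]
pub enum Msg {
    Subscribe(u64),
    SendRequest,
}

/// Single FIFO queue: number of messages processed up to and including
/// the first `SendRequest`.
pub fn steps_until_request_single(queue: &mut VecDeque<Msg>) -> Option<usize> {
    let mut steps = 0;
    while let Some(msg) = queue.pop_front() {
        steps += 1;
        if msg == Msg::SendRequest {
            return Some(steps);
        }
    }
    None
}

/// Two queues polled alternately: other messages no longer wait for the
/// whole subscription backlog to drain.
pub fn steps_until_request_split(
    subscriptions: &mut VecDeque<Msg>,
    others: &mut VecDeque<Msg>,
) -> Option<usize> {
    let mut steps = 0;
    let mut poll_others_first = false;
    loop {
        let msg = if poll_others_first {
            others.pop_front().or_else(|| subscriptions.pop_front())
        } else {
            subscriptions.pop_front().or_else(|| others.pop_front())
        };
        poll_others_first = !poll_others_first;
        match msg {
            Some(m) => {
                steps += 1;
                if m == Msg::SendRequest {
                    return Some(steps);
                }
            }
            None => return None,
        }
    }
}
```

In this model, a request behind 1000 subscriptions is handled at step 1001 with one queue, but at step 2 with split queues.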

~~I've also added filter to the HTTP API to prevent duplicate subscriptions going to the network service.~~

## Additional Info

- Currently being tested on Prater

## Issue Addressed

Resolves #3388, resolves #2638

## Proposed Changes

- Return the `BellatrixPreset` on `/eth/v1/config/spec` by default.

- Allow users to opt out of this by providing `--http-spec-fork=altair` (unless there's a Bellatrix fork epoch set).

- Add the Altair constants from #2638 and make serving the constants non-optional (the `http-disable-legacy-spec` flag is deprecated).

- Modify the VC to only read the `Config` and not to log extra fields. This prevents it from having to muck around parsing the `ConfigAndPreset` fields it doesn't need.

## Additional Info

This change is backwards-compatible for the VC and the BN, but is marked as a breaking change for the removal of `--http-disable-legacy-spec`.

I tried making `Config` a `superstruct` too, but getting the automatic decoding to work was a huge pain and was going to require a lot of hacks, so I gave up in favour of keeping the default-based approach we have now.

## Issue Addressed

NA

## Proposed Changes

Modifies `lcli skip-slots` and `lcli transition-blocks` to allow them to source blocks/states from a beacon API, and gives them some more features to assist with benchmarking.

## Additional Info

Breaks the current `lcli skip-slots` and `lcli transition-blocks` APIs by changing some flag names. It should be simple enough to figure out the changes via `--help`.

Currently blocked on #3263.

## Issue Addressed

https://github.com/status-im/nimbus-eth2/issues/3930

## Proposed Changes

We can trivially support beacon nodes which do not provide the `is_optimistic` field by wrapping the field in an `Option`.

## Issue Addressed

https://github.com/sigp/lighthouse/issues/3091

Extends https://github.com/sigp/lighthouse/pull/3062, adding pre-Bellatrix block support on blinded endpoints and allowing the normal proposal flow (local payload construction) on blinded endpoints. This results in better fallback logic: the VC does not have to switch endpoints on a failure in the BN <> Builder API, because the BN can fall back immediately, without repeating block processing it shouldn't need to redo. We can also keep the VC fallback from the VC <> BN API's blinded endpoint to the full endpoint.

## Proposed Changes

- Pre-bellatrix blocks on blinded endpoints

- Add a new `PayloadCache` to the execution layer

- Better fallback-from-builder logic
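The fallback-from-builder flow can be sketched as: try the builder when it is enabled, and on any builder error build a local payload within the same request, so the VC does not need to switch endpoints. Closures stand in for the real builder and execution-layer calls, and `Payload`/`get_payload` are hypothetical names.

```rust
/// Where a payload came from, to make the fallback visible.
#[derive(Debug, Clone, PartialEq, Eq)]
pub enum Payload {
    FromBuilder(u64),
    Local(u64),
}

/// Try the builder (if enabled); on any builder error, fall back to a locally
/// built payload without returning to the caller in between.
pub fn get_payload<B, L>(use_builder: bool, builder: B, local: L) -> Result<Payload, String>
where
    B: FnOnce() -> Result<u64, String>,
    L: FnOnce() -> Result<u64, String>,
{
    if use_builder {
        match builder() {
            Ok(value) => return Ok(Payload::FromBuilder(value)),
            Err(e) => eprintln!("builder failed, falling back to local payload: {}", e),
        }
    }
    local().map(Payload::Local)
}
```

The `use_builder` switch is where a condition such as "only after the Merge transition is finalized" would plug in.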

## Todos

- [x] Remove VC transition logic

- [x] Add logic to only enable builder flow after Merge transition finalization

- [x] Tests

- [x] Fix metrics

- [x] Rustdocs

Co-authored-by: Mac L <mjladson@pm.me>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

As specified in the [Beacon Chain API specs](https://github.com/ethereum/beacon-APIs/blob/master/apis/node/syncing.yaml#L32-L35) we should return `is_optimistic` as part of the response to a query for the `eth/v1/node/syncing` endpoint.

## Proposed Changes

Compute the optimistic status of the head and add it to the `SyncingData` response.

## Issue Addressed

#3031

## Proposed Changes

Updates the following API endpoints to conform with https://github.com/ethereum/beacon-APIs/pull/190 and https://github.com/ethereum/beacon-APIs/pull/196

- [x] `beacon/states/{state_id}/root`

- [x] `beacon/states/{state_id}/fork`

- [x] `beacon/states/{state_id}/finality_checkpoints`

- [x] `beacon/states/{state_id}/validators`

- [x] `beacon/states/{state_id}/validators/{validator_id}`

- [x] `beacon/states/{state_id}/validator_balances`

- [x] `beacon/states/{state_id}/committees`

- [x] `beacon/states/{state_id}/sync_committees`

- [x] `beacon/headers`

- [x] `beacon/headers/{block_id}`

- [x] `beacon/blocks/{block_id}`

- [x] `beacon/blocks/{block_id}/root`

- [x] `beacon/blocks/{block_id}/attestations`

- [x] `debug/beacon/states/{state_id}`

- [x] `debug/beacon/heads`

- [x] `validator/duties/attester/{epoch}`

- [x] `validator/duties/proposer/{epoch}`

- [x] `validator/duties/sync/{epoch}`

Updates the following Server-Sent Events:

- [x] `events?topics=head`

- [x] `events?topics=block`

- [x] `events?topics=finalized_checkpoint`

- [x] `events?topics=chain_reorg`

## Backwards Incompatible

There is a very minor breaking change with the way the API now handles requests to `beacon/blocks/{block_id}/root` and `beacon/states/{state_id}/root` when `block_id` or `state_id` is the `Root` variant of `BlockId` and `StateId` respectively.

Previously a request to a non-existent root would simply echo the root back to the requester:

```
curl "http://localhost:5052/eth/v1/beacon/states/0xaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa/root"
{"data":{"root":"0xaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa"}}
```

Now it will return a `404`:

```
curl "http://localhost:5052/eth/v1/beacon/blocks/0xaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaaa/root"
{"code":404,"message":"NOT_FOUND: beacon block with root 0xaaaa…aaaa","stacktraces":[]}
```

In addition, the block root `0x0000000000000000000000000000000000000000000000000000000000000000` previously returned the genesis block. It will now return a `404`:

```
curl "http://localhost:5052/eth/v1/beacon/blocks/0x0000000000000000000000000000000000000000000000000000000000000000"
{"code":404,"message":"NOT_FOUND: beacon block with root 0x0000…0000","stacktraces":[]}
```

## Additional Info

- `execution_optimistic` is always set, and will return `false` pre-Bellatrix. I am also open to the idea of doing something like `#[serde(skip_serializing_if = "Option::is_none")]`.

- The value of `execution_optimistic` is set to `false` where possible. Any computation that is reliant on the `head` will simply use the `ExecutionStatus` of the head (unless the head block is pre-Bellatrix).

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

Resolves #3276.

## Proposed Changes

Add a timeout of 1/4 of the slot length when posting sync committee contributions, so that we can try backup beacon nodes if posting a contribution fails.
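The timeout arithmetic, sketched with a hypothetical helper that derives the timeout from the slot duration (assuming 12-second mainnet slots in the example), so that it scales on networks with different slot times:

```rust
use std::time::Duration;

/// Divisor applied to the slot duration for posting sync committee
/// contributions before giving up on this beacon node and trying a backup.
pub const SYNC_CONTRIBUTION_TIMEOUT_QUOTIENT: u32 = 4;

/// Timeout for posting a contribution, as a fraction of the slot duration.
pub fn sync_contribution_timeout(slot_duration: Duration) -> Duration {
    slot_duration / SYNC_CONTRIBUTION_TIMEOUT_QUOTIENT
}
```

With 12-second slots this gives a 3-second timeout.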

## Additional Info

1/4 of the slot length seemed standard for these timeouts, but we may want to change this to 1/2.

I did not find any timeout related / sync committee related tests, so there are no tests. Happy to write some with a bit of guidance.

## Issue Addressed

* #3173

## Proposed Changes

Moved all `fee_recipient_file`-related logic inside the `ValidatorStore`, as it makes more sense to have it all together there. I tested this with the validators I have on `mainnet-shadow-fork-5` and everything appeared to work well. The only technicality is that I can't get the method to return `401` when the authorization header is not specified (it returns `400` instead). Fixing this is probably quite difficult given that none of `warp`'s rejections have code `401`. I don't really think this matters too much, though, as long as it fails.

## Issue Addressed

This PR is a subset of the changes in #3134. Unstable will still not function correctly with the new builder spec once this is merged; #3134 should be used on testnets.

## Proposed Changes

- Removes redundancy in "builders" (servers implementing the builder spec)

- Renames `payload-builder` flag to `builder`

- Moves from old builder RPC API to new HTTP API, but does not implement the validator registration API (implemented in https://github.com/sigp/lighthouse/pull/3194)

Co-authored-by: sean <seananderson33@gmail.com>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Issue Addressed

Lays the groundwork for builder API changes by implementing the beacon-API's new `register_validator` endpoint

## Proposed Changes

- Add a routine in the VC that runs on startup (retrying until success), once per epoch, or whenever `suggested_fee_recipient` is updated, signing `ValidatorRegistrationData` and sending it to the BN.

- TODO: `gas_limit` config options https://github.com/ethereum/builder-specs/issues/17

- BN only sends VC registration data to builders on demand, but VC registration data *does* update the BN's prepare proposer cache and trigger an updated fcU to a local EE. This is necessary for fee recipient consistency between the blinded and full block flows in the event of fallback. Having the BN only send registration data to builders on demand gives feedback directly to the VC about relay status. Also, since the BN has no ability to sign these messages anyway (so it couldn't refresh them if it wanted to), and validator registration is independent of the BN head, I think this approach makes sense.

- Adds upcoming consensus spec changes for this PR https://github.com/ethereum/consensus-specs/pull/2884

- I initially applied the bit mask based on a configured application domain, but I ended up just hard-coding it here instead, because that's how it's spec'd in the builder repo.

- Should the application mask appear in the API?
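The VC-side schedule from the first bullet (retry at startup until success, then once per epoch or when the fee recipient changes) can be sketched as a pure decision function. `RegistrationState` and its methods are hypothetical, and the 20-byte array stands in for an execution address.

```rust
/// Hypothetical bookkeeping for validator registration in the VC.
#[derive(Debug, Default)]
pub struct RegistrationState {
    /// Epoch of the last successful registration, if any.
    pub last_success_epoch: Option<u64>,
    /// Fee recipient used in the last successful registration.
    pub last_fee_recipient: Option<[u8; 20]>,
}

impl RegistrationState {
    /// Decide whether to (re)send a signed registration.
    pub fn should_register(&self, current_epoch: u64, fee_recipient: [u8; 20]) -> bool {
        match self.last_success_epoch {
            // Never succeeded (e.g. at startup): keep retrying.
            None => true,
            // Otherwise: once per new epoch, or immediately on a fee recipient change.
            Some(last) => current_epoch > last || self.last_fee_recipient != Some(fee_recipient),
        }
    }

    /// Record a successful registration.
    pub fn record_success(&mut self, epoch: u64, fee_recipient: [u8; 20]) {
        self.last_success_epoch = Some(epoch);
        self.last_fee_recipient = Some(fee_recipient);
    }
}
```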

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Proposed Changes

Add a new HTTP endpoint `POST /lighthouse/analysis/block_rewards` which takes a vec of `BeaconBlock`s as input and outputs the `BlockReward`s for them.

Augment the `BlockReward` struct with the attestation data for attestations in the block, which simplifies access to this information from blockprint. Using attestation data I've been able to make blockprint up to 95% accurate across Prysm/Lighthouse/Teku/Nimbus. I hope to go even higher using a bunch of synthetic blocks produced for Prysm/Nimbus/Lodestar, which are underrepresented in the current training data.

## Issue Addressed

Web3Signer validators do not support client authentication. This means the `--tls-known-clients-file` option on Web3Signer can't be used with Lighthouse.

## Proposed Changes

Add two new fields to Web3Signer validators, `client_identity_path` and `client_identity_password`, which specify the path and password for a PKCS12 file containing a certificate and private key. If `client_identity_path` is present, use the certificate for SSL client authentication.

## Additional Info

I am successfully validating on Prater using Web3Signer with client authentication.

## Issue Addressed


#3114

## Proposed Changes

1. Introduce the `mime` crate

2. Parse the `Accept` header field with `mime`
