## Issue Addressed

Addresses #4117

## Proposed Changes

See https://github.com/ethereum/keymanager-APIs/pull/58 for proposed API specification.

## TODO

- [x] ~~Add submission to BN~~

- removed, see discussion in [keymanager API](https://github.com/ethereum/keymanager-APIs/pull/58)

- [x] ~~Add flag to allow voluntary exit via the API~~

- no longer needed now the VC doesn't submit exit directly

- [x] ~~Additional verification / checks, e.g. if validator on same network as BN~~

- to be done on client side

- [x] ~~Potentially wait for the message to propagate and return some exit information in the response~~

- not required

- [x] Update http tests

- [x] ~~Update lighthouse book~~

- not required if this endpoint makes it to the standard keymanager API

Co-authored-by: Paul Hauner <paul@paulhauner.com>

Co-authored-by: Jimmy Chen <jimmy@sigmaprime.io>

## Title

Optimise `update_validators` by decrypting key cache only when necessary

## Issue Addressed

Resolves [#3968: Slow performance of validator client PATCH API with hundreds of keys](https://github.com/sigp/lighthouse/issues/3968)

## Proposed Changes

1. Add a check to determine if there is at least one local definition before decrypting the key cache.

2. Assign an empty `KeyCache` when all definitions are of the `Web3Signer` type.

3. Perform cache-related operations (e.g., saving the modified key cache) only if there are local definitions.
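The three steps above can be sketched roughly as follows. This is a minimal illustration and not the actual Lighthouse code: `SigningDefinition`, the simplified `KeyCache`, `decrypt_key_cache` and `load_key_cache` are hypothetical stand-ins, and the `decrypt_calls` counter exists only to make the skipped decryption observable.

```rust
/// Hypothetical stand-in for how a validator definition signs.
#[derive(Debug, Clone, Copy, PartialEq)]
enum SigningDefinition {
    LocalKeystore,
    Web3Signer,
}

/// Hypothetical, heavily simplified key cache.
#[derive(Debug, Default, PartialEq)]
struct KeyCache {
    entries: Vec<String>,
}

/// Simulates the expensive (scrypt-backed) decryption step.
/// The counter records how often it runs.
fn decrypt_key_cache(decrypt_calls: &mut u32) -> KeyCache {
    *decrypt_calls += 1;
    KeyCache {
        entries: vec!["decrypted-entry".to_string()],
    }
}

/// Decrypt the cache only if at least one definition is local;
/// otherwise hand back an empty cache without touching scrypt.
fn load_key_cache(defs: &[SigningDefinition], decrypt_calls: &mut u32) -> KeyCache {
    let has_local = defs
        .iter()
        .any(|d| matches!(d, SigningDefinition::LocalKeystore));
    if has_local {
        decrypt_key_cache(decrypt_calls)
    } else {
        KeyCache::default()
    }
}
```

With only `Web3Signer` definitions the counter stays at zero, which is the case this PR targets.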

## Additional Info

This PR addresses the excessive CPU usage and slow performance experienced when using the `PATCH lighthouse/validators/{pubkey}` request with a large number of keys. The issue was caused by the key cache being decrypted and re-encrypted every time the request was made. This operation calls `scrypt`, which is very slow and requires a lot of memory when there are many concurrent requests.

These changes have no impact on the overall functionality but can lead to significant performance improvements when working with remote signers. Importantly, the key cache is never used when there are only `Web3Signer` definitions, avoiding the expensive operation of decrypting the key cache in such cases.

Co-authored-by: Maksim Shcherbo <max.shcherbo@consensys.net>

* rename 4844 to deneb

* move excess data gas field

* get EF tests working

* fix ef tests lint

* fix the blob identifier ef test

* fix accessed files ef test script

* get beacon chain tests passing

## Issue Addressed

NA

## Proposed Changes

In #4024 we added metrics to expose the latency measurements from a VC to each BN. Whilst playing with these new metrics on our infra I realised it would be great to have a single metric to make sure that the primary BN for each VC has a reasonable latency. With the current "metrics for all BNs" it's hard to tell which is the primary.

## Additional Info

NA

## Issue Addressed

NA

## Proposed Changes

- Adds a `WARN` statement for Capella, just like the previous forks.

- Adds a hint message to all those WARNs to suggest the user update the BN or VC.

## Additional Info

NA

## Issue Addressed

Closes #3963 (hopefully)

## Proposed Changes

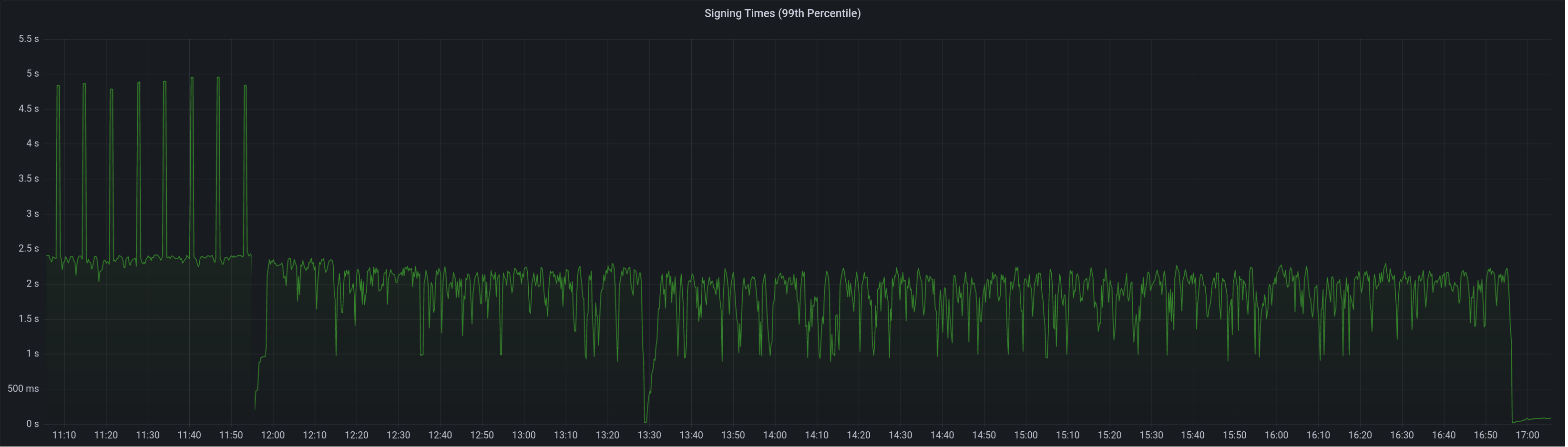

Compute attestation selection proofs gradually each slot rather than in a single `join_all` at the start of each epoch. On a machine with 5k validators this replaces 5k tasks signing 5k proofs with 1 task that signs 5k/32 ~= 160 proofs each slot.

Based on testing with Goerli validators this seems to reduce the average time to produce a signature by preventing Tokio and the OS from falling over each other trying to run hundreds of threads. My testing so far has been with local keystores, which run on a dynamic pool of up to 512 OS threads because they use [`spawn_blocking`](https://docs.rs/tokio/1.11.0/tokio/task/fn.spawn_blocking.html) (and we haven't changed the default).

An earlier version of this PR hyper-optimised the time-per-signature metric to the detriment of the entire system's performance (see the reverted commits). The current PR is conservative in that it avoids touching the attestation service at all. I think there's more optimising to do here, but we can come back for that in a future PR rather than expanding the scope of this one.

The new algorithm for attestation selection proofs is:

- We sign a small batch of selection proofs each slot, for slots up to 8 slots in the future. On average we'll sign one slot's worth of proofs per slot, with an 8 slot lookahead.

- The batch is signed halfway through the slot when there is unlikely to be contention for signature production (blocks are <4s, attestations are ~4-6 seconds, aggregates are 8s+).
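The batching rule above can be sketched as below. This is an illustrative sketch under stated assumptions and not the code in this PR: `Duty`, `duties_to_sign` and `average_proofs_per_slot` are hypothetical names, and tracking signed duties in a `HashSet` is just one simple way to avoid signing the same proof twice.

```rust
use std::collections::HashSet;

const SLOTS_PER_EPOCH: u64 = 32;
/// Lookahead described above: sign for slots up to 8 slots ahead.
const LOOKAHEAD_SLOTS: u64 = 8;

/// Hypothetical attestation duty: one validator attesting in one slot.
#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
struct Duty {
    validator_index: u64,
    slot: u64,
}

/// Select the duties whose selection proofs should be signed in
/// `current_slot`: those inside the lookahead window that have not
/// been signed yet.
fn duties_to_sign(
    current_slot: u64,
    duties: &[Duty],
    already_signed: &HashSet<Duty>,
) -> Vec<Duty> {
    duties
        .iter()
        .copied()
        .filter(|d| d.slot >= current_slot && d.slot <= current_slot + LOOKAHEAD_SLOTS)
        .filter(|d| !already_signed.contains(d))
        .collect()
}

/// Average batch size per slot: each validator attests once per epoch.
fn average_proofs_per_slot(validator_count: u64) -> u64 {
    validator_count / SLOTS_PER_EPOCH
}
```

Because the window advances by one slot each slot, in steady state only the duties for the newly visible slot are unsigned, which gives the "one slot's worth of proofs per slot" average (5000 / 32 = 156 with integer division for the 5k-validator example).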

## Performance Data

_See first comment for updated graphs_.

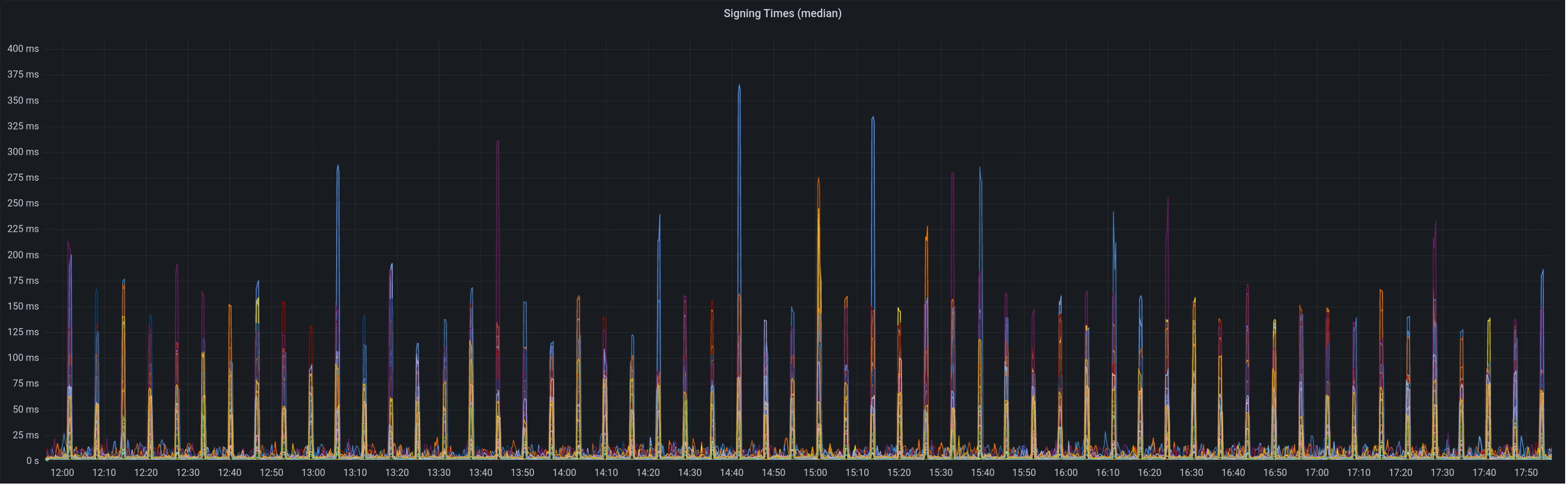

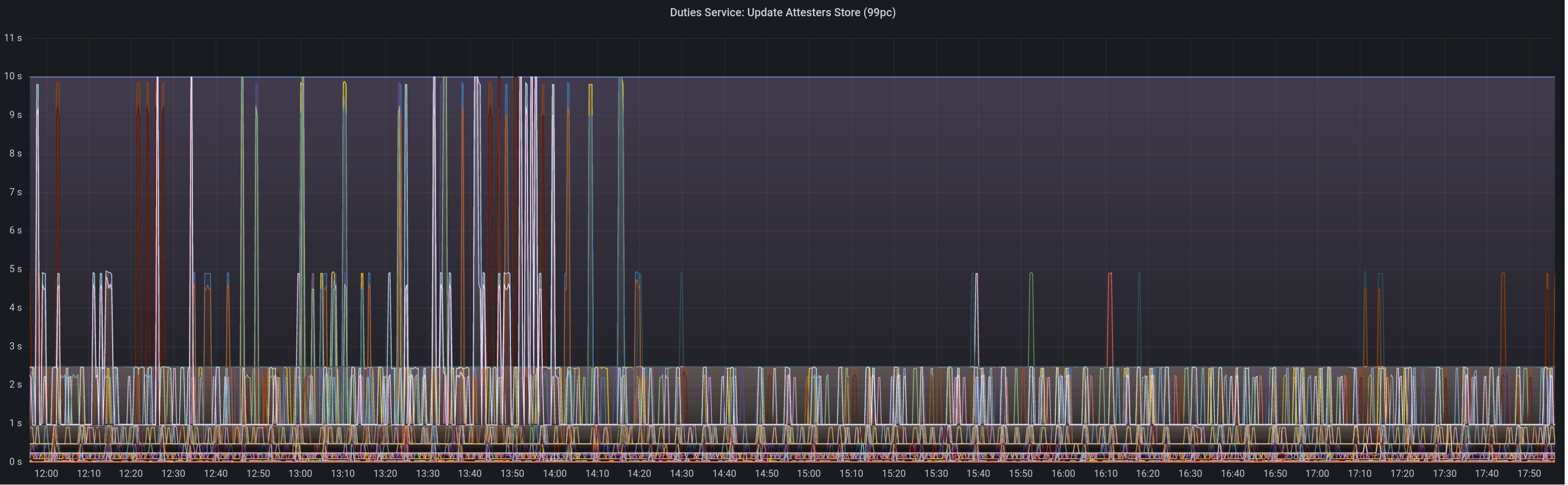

Graph of median signing times before this PR:

Graph of update attesters metric (includes selection proof signing) before this PR:

Median signing time after this PR (prototype from 12:00, updated version from 13:30):

99th percentile on signing times (bounded attestation signing from 16:55, now removed):

Attester map update timing after this PR:

Selection proof signings per second change:

## Link to late blocks

I believe this is related to the slow block signings because logs from Stakely in #3963 show these two logs almost 5 seconds apart:

> Feb 23 18:56:23.978 INFO Received unsigned block, slot: 5862880, service: block, module: validator_client::block_service:393

> Feb 23 18:56:28.552 INFO Publishing signed block, slot: 5862880, service: block, module: validator_client::block_service:416

The only thing that happens between those two logs is the signing of the block:

0fb58a680d/validator_client/src/block_service.rs (L410-L414)

Helpfully, Stakely noticed this issue without any Lighthouse BNs in the mix, which pointed to a clear issue in the VC.

## TODO

- [x] Further testing on testnet infrastructure.

- [x] Make the attestation signing parallelism configurable.

## Issue Addressed

NA

## Proposed Changes

Adds a service which periodically polls (11s into each mainnet slot) the `node/version` endpoint on each BN and roughly measures the round-trip latency. The latency is exposed as a `DEBG` log and a Prometheus metric.

The `--latency-measurement-service` has been added to the VC, with the following options:

- `--latency-measurement-service true`: enable the service (default).

- `--latency-measurement-service`: (without a value) has the same effect.

- `--latency-measurement-service false`: disable the service.
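The flag semantics listed above can be modelled as a small function. This is only a sketch of the described behaviour, not the VC's actual CLI parsing: the function name and the `Option<Option<&str>>` encoding are hypothetical, and rejecting values other than `true`/`false` is an assumption.

```rust
/// Models the flag's three shapes:
/// - `None`: flag absent (the service is enabled by default)
/// - `Some(None)`: flag present without a value
/// - `Some(Some(v))`: flag present with an explicit value
fn latency_service_enabled(flag: Option<Option<&str>>) -> Result<bool, String> {
    match flag {
        None => Ok(true),
        Some(None) => Ok(true),
        Some(Some("true")) => Ok(true),
        Some(Some("false")) => Ok(false),
        // Assumption: any other value is treated as an error.
        Some(Some(other)) => Err(format!("invalid value: {}", other)),
    }
}
```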

## Additional Info

Whilst looking at our staking setup, I think the BN+VC latency is contributing to late blocks. Now that we have to wait for the builders to respond it's nice to try and do everything we can to reduce that latency. Having visibility is the first step.

## Issue Addressed

Cleans up all the remnants of 4844 in capella. This makes sure that when 4844 is reviewed, nothing is missed because it was already included here.

## Proposed Changes

drop a bomb on every 4844 thing

## Additional Info

Merge process I did (locally) is as follows:

- squash merge to produce one commit

- in new branch off unstable with the squashed commit create a `git revert HEAD` commit

- merge that new branch onto 4844 with `--strategy ours`

- compare local 4844 to remote 4844 and make sure the diff is empty

- enjoy

Co-authored-by: Paul Hauner <paul@paulhauner.com>

This fixes issues with certain metrics scrapers, which might error if the content-type is not correctly set.

## Issue Addressed

Fixes https://github.com/sigp/lighthouse/issues/3437

## Proposed Changes

Simply set header: `Content-Type: text/plain` on metrics server response. Seems like the errored branch does this correctly already.

## Additional Info

This is also needed to enable InfluxDB metric scraping, which works very nicely with Geth.

## Issue Addressed

Resolves #2521

## Proposed Changes

Add a metric that indicates the next attestation duty slot for all managed validators in the validator client.

## Issue Addressed

#3853

## Proposed Changes

Added `INFO` level logs for requesting and receiving the unsigned block.

## Additional Info

Logging for successfully publishing the signed block is already there. There also appears to be a log for when "We realize we are going to produce a block" in `start_update_service`: `info!(log, "Block production service started");`. Is there anywhere else you'd like to see logging around this event?

Co-authored-by: GeemoCandama <104614073+GeemoCandama@users.noreply.github.com>

## Issue Addressed

#3780

## Proposed Changes

Add error reporting that notifies the node operator that the `voting_keystore_path` in their `validator_definitions.yml` file may be incorrect.

## Additional Info

There is more info in issue #3780

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Proposed Changes

With proposer boosting implemented (#2822) we have an opportunity to re-org out late blocks.

This PR adds three flags to the BN to control this behaviour:

* `--disable-proposer-reorgs`: turn aggressive re-orging off (it's on by default).

* `--proposer-reorg-threshold N`: attempt to orphan blocks with less than N% of the committee vote. If this parameter isn't set then N defaults to 20% when the feature is enabled.

* `--proposer-reorg-epochs-since-finalization N`: only attempt to re-org late blocks when the number of epochs since finalization is less than or equal to N. The default is 2 epochs, meaning re-orgs will only be attempted when the chain is finalizing optimally.

For safety Lighthouse will only attempt a re-org under very specific conditions:

1. The block being proposed is 1 slot after the canonical head, and the canonical head is 1 slot after its parent. i.e. at slot `n + 1` rather than building on the block from slot `n` we build on the block from slot `n - 1`.

2. The current canonical head received less than N% of the committee vote. N should be set depending on the proposer boost fraction itself, the fraction of the network that is believed to be applying it, and the size of the largest entity that could be hoarding votes.

3. The current canonical head arrived after the attestation deadline from our perspective. This condition was only added to support suppression of forkchoiceUpdated messages, but makes intuitive sense.

4. The block is being proposed in the first 2 seconds of the slot. This gives it time to propagate and receive the proposer boost.
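The four conditions and the flag defaults can be combined into a single predicate, sketched below. This is a simplified illustration and not Lighthouse's fork choice code: `ReorgConfig`, `ProposalContext` and `should_attempt_reorg` are hypothetical names, and committee weight is reduced to a plain percentage.

```rust
/// Hypothetical config mirroring the three flags described above.
#[derive(Debug, Clone, Copy)]
struct ReorgConfig {
    enabled: bool,
    threshold_percent: u64,
    max_epochs_since_finalization: u64,
}

impl Default for ReorgConfig {
    fn default() -> Self {
        // Defaults as stated above: enabled, N = 20%, 2 epochs.
        Self {
            enabled: true,
            threshold_percent: 20,
            max_epochs_since_finalization: 2,
        }
    }
}

/// Hypothetical snapshot of what the proposer knows at proposal time.
#[derive(Debug, Clone, Copy)]
struct ProposalContext {
    proposal_slot: u64,
    head_slot: u64,
    head_parent_slot: u64,
    head_vote_percent: u64,
    head_arrived_late: bool,
    millis_into_slot: u64,
    epochs_since_finalization: u64,
}

fn should_attempt_reorg(cfg: &ReorgConfig, ctx: &ProposalContext) -> bool {
    cfg.enabled
        && ctx.epochs_since_finalization <= cfg.max_epochs_since_finalization
        // 1. Single-slot re-org only: proposal at n + 1, head at n, parent at n - 1.
        && ctx.proposal_slot == ctx.head_slot + 1
        && ctx.head_slot == ctx.head_parent_slot + 1
        // 2. The head is weak: fewer than N% of committee votes.
        && ctx.head_vote_percent < cfg.threshold_percent
        // 3. The head arrived after the attestation deadline.
        && ctx.head_arrived_late
        // 4. We are proposing within the first 2 seconds of the slot.
        && ctx.millis_into_slot < 2_000
}
```

Every condition must hold; failing any one of them means the proposer builds on the canonical head as usual.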

## Additional Info

For the initial idea and background, see: https://github.com/ethereum/consensus-specs/pull/2353#issuecomment-950238004

There is also a specification for this feature here: https://github.com/ethereum/consensus-specs/pull/3034

Co-authored-by: Michael Sproul <micsproul@gmail.com>

Co-authored-by: pawan <pawandhananjay@gmail.com>

## Issue Addressed

#3766

## Proposed Changes

Adds an endpoint to get the graffiti that will be used for the next block proposal for each validator.

## Usage

```bash
curl -H "Authorization: Bearer api-token" http://localhost:9095/lighthouse/ui/graffiti | jq
```

```json
{
  "data": {
    "0x81283b7a20e1ca460ebd9bbd77005d557370cabb1f9a44f530c4c4c66230f675f8df8b4c2818851aa7d77a80ca5a4a5e": "mr f was here",
    "0xa3a32b0f8b4ddb83f1a0a853d81dd725dfe577d4f4c3db8ece52ce2b026eca84815c1a7e8e92a4de3d755733bf7e4a9b": "mr v was here",
    "0x872c61b4a7f8510ec809e5b023f5fdda2105d024c470ddbbeca4bc74e8280af0d178d749853e8f6a841083ac1b4db98f": null
  }
}
```

## Additional Info

This will only return graffiti that the validator client knows about, which comes from these three sources:

1. Graffiti File

2. validator_definitions.yml

3. The `--graffiti` flag on the VC

If the graffiti is set on the BN, it will not be returned. This may warrant an additional endpoint on the BN side which can be used in the event the endpoint returns `null`.

This PR adds some health endpoints for the beacon node and the validator client.

Specifically it adds the endpoint:

`/lighthouse/ui/health`

These are not entirely stable yet, but they provide a base for modification for our UI.

These may also have issues on various platforms and may need modification.

## Issue Addressed

Closes #3612

## Proposed Changes

- Iterates through BNs until it finds a non-optimistic head.

A slight change in error behavior:

- Previously: `spawn_contribution_tasks` did not return an error when the block head was optimistic. It returned `Ok(())` and logged a warning.

- Now: `spawn_contribution_tasks` returns an error if it cannot find a non-optimistic block head. The caller of `spawn_contribution_tasks` then logs the error as a critical error.
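The new behaviour can be sketched as a loop over per-BN responses. This is an illustration only: `HeadInfo` and `first_non_optimistic_head` are hypothetical names, and each BN's response is modelled as a ready-made `Result` rather than an actual HTTP call.

```rust
/// Hypothetical summary of a BN's head block response.
#[derive(Debug, Clone, PartialEq)]
struct HeadInfo {
    block_root: String,
    execution_optimistic: bool,
}

/// Walk the BN responses in order and return the first head that is
/// not optimistic. Failed requests and optimistic heads are skipped.
/// If nothing qualifies, return an error for the caller to log.
fn first_non_optimistic_head<I>(responses: I) -> Result<HeadInfo, String>
where
    I: IntoIterator<Item = Result<HeadInfo, String>>,
{
    for response in responses {
        match response {
            Ok(head) if !head.execution_optimistic => return Ok(head),
            _ => continue,
        }
    }
    Err("no beacon node returned a non-optimistic head".to_string())
}
```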

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

New lints for rust 1.65

## Proposed Changes

The notable change is the identification of parameters that are only used in recursion.

## Additional Info

na

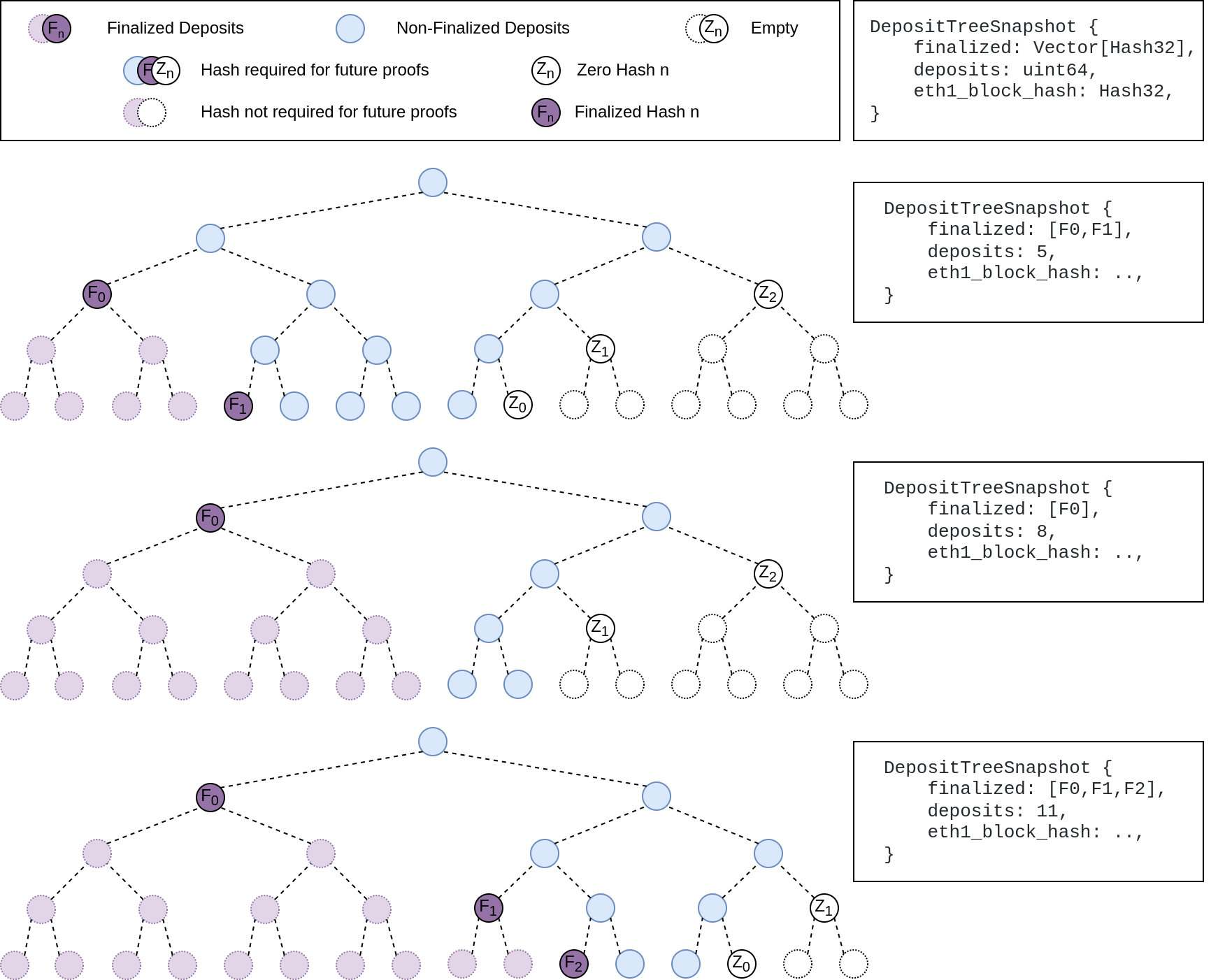

## Summary

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.

2. Significantly faster weak subjectivity sync as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.

3. Future proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444) as execution nodes will no longer be required to store logs permanently so we won't always have all historical logs available to us.
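To give a feel for the logarithmic size mentioned in point 2, the sketch below counts the hashes needed for `N` finalized deposits. It assumes the finalized part of the tree is stored as the roots of its maximal full subtrees, one per set bit of `N`; that representation and both function names are assumptions for illustration, not taken from this PR. Under that assumption the count is at most `floor(log2(N)) + 1`, i.e. on the order of `log2(N)`.

```rust
/// Number of subtree roots needed to represent `deposit_count`
/// finalized leaves, assuming one root per maximal full subtree.
/// This equals the number of set bits in `deposit_count`.
fn finalized_hash_count(deposit_count: u64) -> u32 {
    deposit_count.count_ones()
}

/// Upper bound on that count: the bit length of `deposit_count`,
/// which is floor(log2(N)) + 1 for N > 0 and 0 for N = 0.
fn finalized_hash_bound(deposit_count: u64) -> u32 {
    64 - deposit_count.leading_zeros()
}
```

For example, 1024 finalized deposits form a single full subtree and need one hash, while 1023 need ten.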

## More Details

Image to illustrate how the deposit contract Merkle tree evolves and finalizes, along with the resulting `DepositTreeSnapshot`.

## Other Considerations

I've changed the structure of the `SszDepositCache`, so once you load and save your database with this version of Lighthouse, you will no longer be able to load it with older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

* add capella gossip boiler plate

* get everything compiling

Co-authored-by: realbigsean <sean@sigmaprime.io>

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

* small cleanup

* cargo fix + some test cleanup

* improve block production

* add fixme for potential panic

Co-authored-by: Mark Mackey <mark@sigmaprime.io>