## Issue Addressed

Addresses [#4401](https://github.com/sigp/lighthouse/issues/4401)

## Proposed Changes

Shift some constants into ```ChainSpec``` and remove the constant values from code space.

## Additional Info

I mostly used ```MainnetEthSpec::default_spec()``` for getting ```ChainSpec```. I wonder Did I make a mistake about that.

Co-authored-by: armaganyildirak <armaganyildirak@gmail.com>

Co-authored-by: Paul Hauner <paul@paulhauner.com>

Co-authored-by: Age Manning <Age@AgeManning.com>

Co-authored-by: Diva M <divma@protonmail.com>

## Issue Addressed

#4118

## Proposed Changes

This PR introduces a "progressive balances" cache on the `BeaconState`, which keeps track of the accumulated target attestation balance for the current & previous epochs. The cached values are utilised by fork choice to calculate unrealized justification and finalization (instead of converting epoch participation arrays to balances for each block we receive).

This optimization will be rolled out gradually to allow for more testing. A new `--progressive-balances disabled|checked|strict|fast` flag is introduced to support this:

- `checked`: enabled with checks against participation cache, and falls back to the existing epoch processing calculation if there is a total target attester balance mismatch. There is no performance gain from this as the participation cache still needs to be computed. **This is the default mode for now.**

- `strict`: enabled with checks against participation cache, returns error if there is a mismatch. **Used for testing only**.

- `fast`: enabled with no comparative checks and without computing the participation cache. This mode gives us the performance gains from the optimization. This is still experimental and not currently recommended for production usage, but will become the default mode in a future release.

- `disabled`: disable the usage of progressive cache, and use the existing method for FFG progression calculation. This mode may be useful if we find a bug and want to stop the frequent error logs.

### Tasks

- [x] Initial cache implementation in `BeaconState`

- [x] Perform checks in fork choice to compare the progressive balances cache against results from `ParticipationCache`

- [x] Add CLI flag, and disable the optimization by default

- [x] Testing on Goerli & Benchmarking

- [x] Move caching logic from state processing to the `ProgressiveBalancesCache` (see [this comment](https://github.com/sigp/lighthouse/pull/4362#discussion_r1230877001))

- [x] Add attesting balance metrics

Co-authored-by: Jimmy Chen <jimmy@sigmaprime.io>

## Issue Addressed

Resolves#3238

## Proposed Changes

Please list or describe the changes introduced by this PR.

## Additional Info

Please provide any additional information. For example, future considerations

or information useful for reviewers.

## Issue Addressed

Cleans up all the remnants of 4844 in capella. This makes sure when 4844 is reviewed there is nothing we are missing because it got included here

## Proposed Changes

drop a bomb on every 4844 thing

## Additional Info

Merge process I did (locally) is as follows:

- squash merge to produce one commit

- in new branch off unstable with the squashed commit create a `git revert HEAD` commit

- merge that new branch onto 4844 with `--strategy ours`

- compare local 4844 to remote 4844 and make sure the diff is empty

- enjoy

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

Recent discussions with other client devs about optimistic sync have revealed a conceptual issue with the optimisation implemented in #3738. In designing that feature I failed to consider that the execution node checks the `blockHash` of the execution payload before responding with `SYNCING`, and that omitting this check entirely results in a degradation of the full node's validation. A node omitting the `blockHash` checks could be tricked by a supermajority of validators into following an invalid chain, something which is ordinarily impossible.

## Proposed Changes

I've added verification of the `payload.block_hash` in Lighthouse. In case of failure we log a warning and fall back to verifying the payload with the execution client.

I've used our existing dependency on `ethers_core` for RLP support, and a new dependency on Parity's `triehash` crate for the Merkle patricia trie. Although the `triehash` crate is currently unmaintained it seems like our best option at the moment (it is also used by Reth, and requires vastly less boilerplate than Parity's generic `trie-root` library).

Block hash verification is pretty quick, about 500us per block on my machine (mainnet).

The optimistic finalized sync feature can be disabled using `--disable-optimistic-finalized-sync` which forces full verification with the EL.

## Additional Info

This PR also introduces a new dependency on our [`metastruct`](https://github.com/sigp/metastruct) library, which was perfectly suited to the RLP serialization method. There will likely be changes as `metastruct` grows, but I think this is a good way to start dogfooding it.

I took inspiration from some Parity and Reth code while writing this, and have preserved the relevant license headers on the files containing code that was copied and modified.

## Issue Addressed

This PR addresses partially #3651

## Proposed Changes

This PR adds the following methods:

* a new method to trait `TreeHash`, `hash_tree_leaves` which returns all the Merkle leaves of the ssz object.

* a new method to `BeaconState`: `compute_merkle_proof` which generates a specific merkle proof for given depth and index by using the `hash_tree_leaves` as leaves function.

## Additional Info

Now here is some rationale on why I decided to go down this route: adding a new function to commonly used trait is a pain but was necessary to make sure we have all merkle leaves for every object, that is why I just added `hash_tree_leaves` in the trait and not `compute_merkle_proof` as well. although it would make sense it gives us code duplication/harder review time and we just need it from one specific object in one specific usecase so not worth the effort YET. In my humble opinion.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

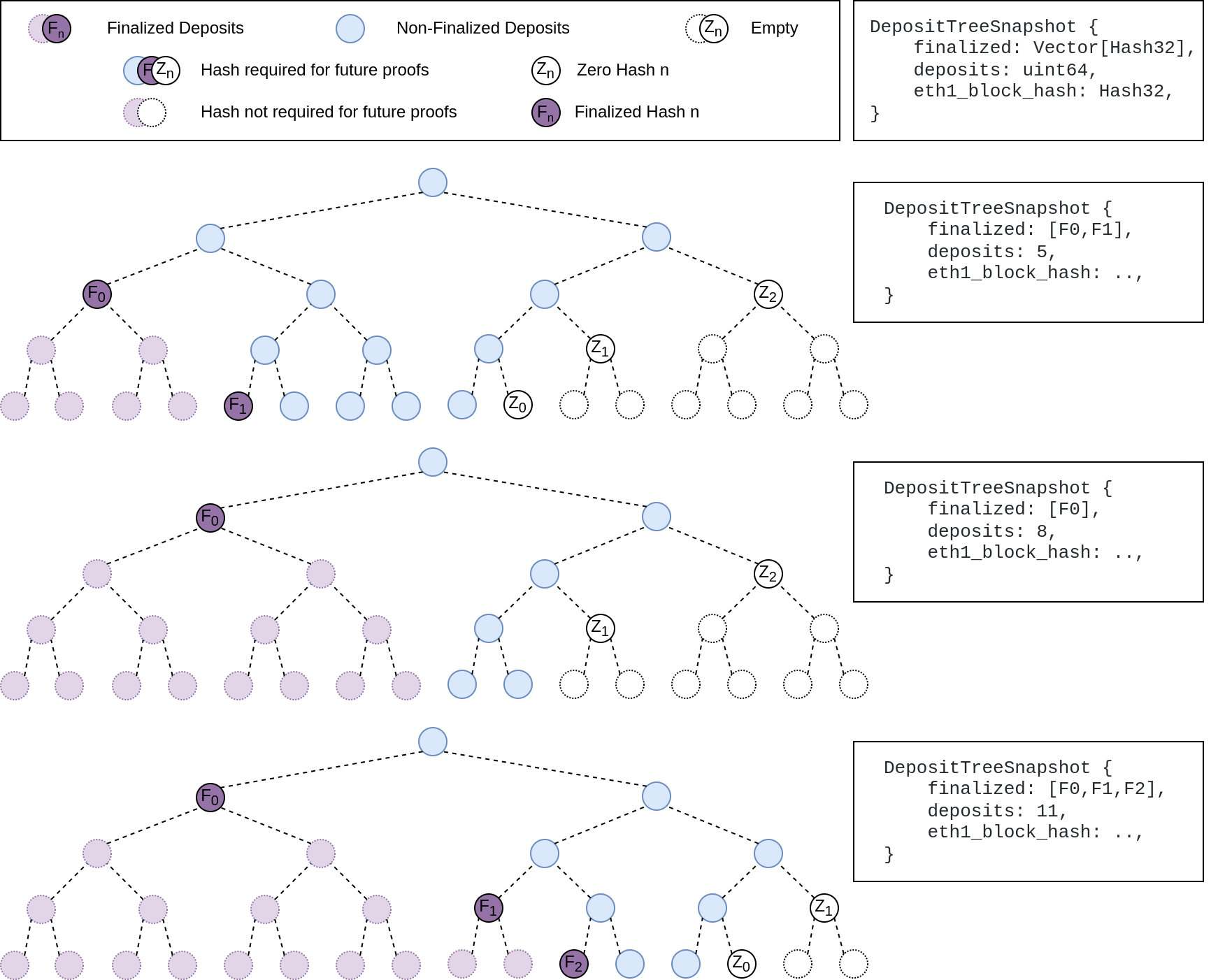

## Summary

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.

2. Significantly faster weak subjectivity sync as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.

3. Future proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444) as execution nodes will no longer be required to store logs permanently so we won't always have all historical logs available to us.

## More Details

Image to illustrate how the deposit contract merkle tree evolves and finalizes along with the resulting `DepositTreeSnapshot`

## Other Considerations

I've changed the structure of the `SszDepositCache` so once you load & save your database from this version of lighthouse, you will no longer be able to load it from older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

## Issue Addressed

Resolves#3388Resolves#2638

## Proposed Changes

- Return the `BellatrixPreset` on `/eth/v1/config/spec` by default.

- Allow users to opt out of this by providing `--http-spec-fork=altair` (unless there's a Bellatrix fork epoch set).

- Add the Altair constants from #2638 and make serving the constants non-optional (the `http-disable-legacy-spec` flag is deprecated).

- Modify the VC to only read the `Config` and not to log extra fields. This prevents it from having to muck around parsing the `ConfigAndPreset` fields it doesn't need.

## Additional Info

This change is backwards-compatible for the VC and the BN, but is marked as a breaking change for the removal of `--http-disable-legacy-spec`.

I tried making `Config` a `superstruct` too, but getting the automatic decoding to work was a huge pain and was going to require a lot of hacks, so I gave up in favour of keeping the default-based approach we have now.

## Overview

This rather extensive PR achieves two primary goals:

1. Uses the finalized/justified checkpoints of fork choice (FC), rather than that of the head state.

2. Refactors fork choice, block production and block processing to `async` functions.

Additionally, it achieves:

- Concurrent forkchoice updates to the EL and cache pruning after a new head is selected.

- Concurrent "block packing" (attestations, etc) and execution payload retrieval during block production.

- Concurrent per-block-processing and execution payload verification during block processing.

- The `Arc`-ification of `SignedBeaconBlock` during block processing (it's never mutated, so why not?):

- I had to do this to deal with sending blocks into spawned tasks.

- Previously we were cloning the beacon block at least 2 times during each block processing, these clones are either removed or turned into cheaper `Arc` clones.

- We were also `Box`-ing and un-`Box`-ing beacon blocks as they moved throughout the networking crate. This is not a big deal, but it's nice to avoid shifting things between the stack and heap.

- Avoids cloning *all the blocks* in *every chain segment* during sync.

- It also has the potential to clean up our code where we need to pass an *owned* block around so we can send it back in the case of an error (I didn't do much of this, my PR is already big enough 😅)

- The `BeaconChain::HeadSafetyStatus` struct was removed. It was an old relic from prior merge specs.

For motivation for this change, see https://github.com/sigp/lighthouse/pull/3244#issuecomment-1160963273

## Changes to `canonical_head` and `fork_choice`

Previously, the `BeaconChain` had two separate fields:

```

canonical_head: RwLock<Snapshot>,

fork_choice: RwLock<BeaconForkChoice>

```

Now, we have grouped these values under a single struct:

```

canonical_head: CanonicalHead {

cached_head: RwLock<Arc<Snapshot>>,

fork_choice: RwLock<BeaconForkChoice>

}

```

Apart from ergonomics, the only *actual* change here is wrapping the canonical head snapshot in an `Arc`. This means that we no longer need to hold the `cached_head` (`canonical_head`, in old terms) lock when we want to pull some values from it. This was done to avoid deadlock risks by preventing functions from acquiring (and holding) the `cached_head` and `fork_choice` locks simultaneously.

## Breaking Changes

### The `state` (root) field in the `finalized_checkpoint` SSE event

Consider the scenario where epoch `n` is just finalized, but `start_slot(n)` is skipped. There are two state roots we might in the `finalized_checkpoint` SSE event:

1. The state root of the finalized block, which is `get_block(finalized_checkpoint.root).state_root`.

4. The state root at slot of `start_slot(n)`, which would be the state from (1), but "skipped forward" through any skip slots.

Previously, Lighthouse would choose (2). However, we can see that when [Teku generates that event](de2b2801c8/data/beaconrestapi/src/main/java/tech/pegasys/teku/beaconrestapi/handlers/v1/events/EventSubscriptionManager.java (L171-L182)) it uses [`getStateRootFromBlockRoot`](de2b2801c8/data/provider/src/main/java/tech/pegasys/teku/api/ChainDataProvider.java (L336-L341)) which uses (1).

I have switched Lighthouse from (2) to (1). I think it's a somewhat arbitrary choice between the two, where (1) is easier to compute and is consistent with Teku.

## Notes for Reviewers

I've renamed `BeaconChain::fork_choice` to `BeaconChain::recompute_head`. Doing this helped ensure I broke all previous uses of fork choice and I also find it more descriptive. It describes an action and can't be confused with trying to get a reference to the `ForkChoice` struct.

I've changed the ordering of SSE events when a block is received. It used to be `[block, finalized, head]` and now it's `[block, head, finalized]`. It was easier this way and I don't think we were making any promises about SSE event ordering so it's not "breaking".

I've made it so fork choice will run when it's first constructed. I did this because I wanted to have a cached version of the last call to `get_head`. Ensuring `get_head` has been run *at least once* means that the cached values doesn't need to wrapped in an `Option`. This was fairly simple, it just involved passing a `slot` to the constructor so it knows *when* it's being run. When loading a fork choice from the store and a slot clock isn't handy I've just used the `slot` that was saved in the `fork_choice_store`. That seems like it would be a faithful representation of the slot when we saved it.

I added the `genesis_time: u64` to the `BeaconChain`. It's small, constant and nice to have around.

Since we're using FC for the fin/just checkpoints, we no longer get the `0x00..00` roots at genesis. You can see I had to remove a work-around in `ef-tests` here: b56be3bc2. I can't find any reason why this would be an issue, if anything I think it'll be better since the genesis-alias has caught us out a few times (0x00..00 isn't actually a real root). Edit: I did find a case where the `network` expected the 0x00..00 alias and patched it here: 3f26ac3e2.

You'll notice a lot of changes in tests. Generally, tests should be functionally equivalent. Here are the things creating the most diff-noise in tests:

- Changing tests to be `tokio::async` tests.

- Adding `.await` to fork choice, block processing and block production functions.

- Refactor of the `canonical_head` "API" provided by the `BeaconChain`. E.g., `chain.canonical_head.cached_head()` instead of `chain.canonical_head.read()`.

- Wrapping `SignedBeaconBlock` in an `Arc`.

- In the `beacon_chain/tests/block_verification`, we can't use the `lazy_static` `CHAIN_SEGMENT` variable anymore since it's generated with an async function. We just generate it in each test, not so efficient but hopefully insignificant.

I had to disable `rayon` concurrent tests in the `fork_choice` tests. This is because the use of `rayon` and `block_on` was causing a panic.

Co-authored-by: Mac L <mjladson@pm.me>

## Issue Addressed

This PR is a subset of the changes in #3134. Unstable will still not function correctly with the new builder spec once this is merged, #3134 should be used on testnets

## Proposed Changes

- Removes redundancy in "builders" (servers implementing the builder spec)

- Renames `payload-builder` flag to `builder`

- Moves from old builder RPC API to new HTTP API, but does not implement the validator registration API (implemented in https://github.com/sigp/lighthouse/pull/3194)

Co-authored-by: sean <seananderson33@gmail.com>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Proposed Changes

Reduce post-merge disk usage by not storing finalized execution payloads in Lighthouse's database.

⚠️ **This is achieved in a backwards-incompatible way for networks that have already merged** ⚠️. Kiln users and shadow fork enjoyers will be unable to downgrade after running the code from this PR. The upgrade migration may take several minutes to run, and can't be aborted after it begins.

The main changes are:

- New column in the database called `ExecPayload`, keyed by beacon block root.

- The `BeaconBlock` column now stores blinded blocks only.

- Lots of places that previously used full blocks now use blinded blocks, e.g. analytics APIs, block replay in the DB, etc.

- On finalization:

- `prune_abanonded_forks` deletes non-canonical payloads whilst deleting non-canonical blocks.

- `migrate_db` deletes finalized canonical payloads whilst deleting finalized states.

- Conversions between blinded and full blocks are implemented in a compositional way, duplicating some work from Sean's PR #3134.

- The execution layer has a new `get_payload_by_block_hash` method that reconstructs a payload using the EE's `eth_getBlockByHash` call.

- I've tested manually that it works on Kiln, using Geth and Nethermind.

- This isn't necessarily the most efficient method, and new engine APIs are being discussed to improve this: https://github.com/ethereum/execution-apis/pull/146.

- We're depending on the `ethers` master branch, due to lots of recent changes. We're also using a workaround for https://github.com/gakonst/ethers-rs/issues/1134.

- Payload reconstruction is used in the HTTP API via `BeaconChain::get_block`, which is now `async`. Due to the `async` fn, the `blocking_json` wrapper has been removed.

- Payload reconstruction is used in network RPC to serve blocks-by-{root,range} responses. Here the `async` adjustment is messier, although I think I've managed to come up with a reasonable compromise: the handlers take the `SendOnDrop` by value so that they can drop it on _task completion_ (after the `fn` returns). Still, this is introducing disk reads onto core executor threads, which may have a negative performance impact (thoughts appreciated).

## Additional Info

- [x] For performance it would be great to remove the cloning of full blocks when converting them to blinded blocks to write to disk. I'm going to experiment with a `put_block` API that takes the block by value, breaks it into a blinded block and a payload, stores the blinded block, and then re-assembles the full block for the caller.

- [x] We should measure the latency of blocks-by-root and blocks-by-range responses.

- [x] We should add integration tests that stress the payload reconstruction (basic tests done, issue for more extensive tests: https://github.com/sigp/lighthouse/issues/3159)

- [x] We should (manually) test the schema v9 migration from several prior versions, particularly as blocks have changed on disk and some migrations rely on being able to load blocks.

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Proposed Changes

I did some gardening 🌳 in our dependency tree:

- Remove duplicate versions of `warp` (git vs patch)

- Remove duplicate versions of lots of small deps: `cpufeatures`, `ethabi`, `ethereum-types`, `bitvec`, `nix`, `libsecp256k1`.

- Update MDBX (should resolve#3028). I tested and Lighthouse compiles on Windows 11 now.

- Restore `psutil` back to upstream

- Make some progress updating everything to rand 0.8. There are a few crates stuck on 0.7.

Hopefully this puts us on a better footing for future `cargo audit` issues, and improves compile times slightly.

## Additional Info

Some crates are held back by issues with `zeroize`. libp2p-noise depends on [`chacha20poly1305`](https://crates.io/crates/chacha20poly1305) which depends on zeroize < v1.5, and we can only have one version of zeroize because it's post 1.0 (see https://github.com/rust-lang/cargo/issues/6584). The latest version of `zeroize` is v1.5.4, which is used by the new versions of many other crates (e.g. `num-bigint-dig`). Once a new version of chacha20poly1305 is released we can update libp2p-noise and upgrade everything to the latest `zeroize` version.

I've also opened a PR to `blst` related to zeroize: https://github.com/supranational/blst/pull/111

## Issue Addressed

MEV boost compatibility

## Proposed Changes

See #2987

## Additional Info

This is blocked on the stabilization of a couple specs, [here](https://github.com/ethereum/beacon-APIs/pull/194) and [here](https://github.com/flashbots/mev-boost/pull/20).

Additional TODO's and outstanding questions

- [ ] MEV boost JWT Auth

- [ ] Will `builder_proposeBlindedBlock` return the revealed payload for the BN to propogate

- [ ] Should we remove `private-tx-proposals` flag and communicate BN <> VC with blinded blocks by default once these endpoints enter the beacon-API's repo? This simplifies merge transition logic.

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: realbigsean <sean@sigmaprime.io>

## Proposed Changes

Lots of lint updates related to `flat_map`, `unwrap_or_else` and string patterns. I did a little more creative refactoring in the op pool, but otherwise followed Clippy's suggestions.

## Additional Info

We need this PR to unblock CI.

## Issue Addressed

Alternative to #2935

## Proposed Changes

Replace the `Vec<u8>` inside `Bitfield` with a `SmallVec<[u8; 32>`. This eliminates heap allocations for attestation bitfields until we reach 500K validators, at which point we can consider increasing `SMALLVEC_LEN` to 40 or 48.

While running Lighthouse under `heaptrack` I found that SSZ encoding and decoding of bitfields corresponded to 22% of all allocations by count. I've confirmed that with this change applied those allocations disappear entirely.

## Additional Info

We can win another 8 bytes of space by using `smallvec`'s [`union` feature](https://docs.rs/smallvec/1.8.0/smallvec/#union), although I might leave that for a future PR because I don't know how experimental that feature is and whether it uses some spicy `unsafe` blocks.

## Issue Addressed

#2900

## Proposed Changes

Change type of extra_fields in ConfigAndPreset so it can contain non string values (inside serde_json::Value)

## Proposed Changes

Update `superstruct` to bring in @realbigsean's fixes necessary for MEV-compatible private beacon block types (a la #2795).

The refactoring is due to another change in superstruct that allows partial getters to be auto-generated.

## Issue Addressed

Resolves: https://github.com/sigp/lighthouse/issues/2741

Includes: https://github.com/sigp/lighthouse/pull/2853 so that we can get ssz static tests passing here on v1.1.6. If we want to merge that first, we can make this diff slightly smaller

## Proposed Changes

- Changes the `justified_epoch` and `finalized_epoch` in the `ProtoArrayNode` each to an `Option<Checkpoint>`. The `Option` is necessary only for the migration, so not ideal. But does allow us to add a default logic to `None` on these fields during the database migration.

- Adds a database migration from a legacy fork choice struct to the new one, search for all necessary block roots in fork choice by iterating through blocks in the db.

- updates related to https://github.com/ethereum/consensus-specs/pull/2727

- We will have to update the persisted forkchoice to make sure the justified checkpoint stored is correct according to the updated fork choice logic. This boils down to setting the forkchoice store's justified checkpoint to the justified checkpoint of the block that advanced the finalized checkpoint to the current one.

- AFAICT there's no migration steps necessary for the update to allow applying attestations from prior blocks, but would appreciate confirmation on that

- I updated the consensus spec tests to v1.1.6 here, but they will fail until we also implement the proposer score boost updates. I confirmed that the previously failing scenario `new_finalized_slot_is_justified_checkpoint_ancestor` will now pass after the boost updates, but haven't confirmed _all_ tests will pass because I just quickly stubbed out the proposer boost test scenario formatting.

- This PR now also includes proposer boosting https://github.com/ethereum/consensus-specs/pull/2730

## Additional Info

I realized checking justified and finalized roots in fork choice makes it more likely that we trigger this bug: https://github.com/ethereum/consensus-specs/pull/2727

It's possible the combination of justified checkpoint and finalized checkpoint in the forkchoice store is different from in any block in fork choice. So when trying to startup our store's justified checkpoint seems invalid to the rest of fork choice (but it should be valid). When this happens we get an `InvalidBestNode` error and fail to start up. So I'm including that bugfix in this branch.

Todo:

- [x] Fix fork choice tests

- [x] Self review

- [x] Add fix for https://github.com/ethereum/consensus-specs/pull/2727

- [x] Rebase onto Kintusgi

- [x] Fix `num_active_validators` calculation as @michaelsproul pointed out

- [x] Clean up db migrations

Co-authored-by: realbigsean <seananderson33@gmail.com>

* Change base_fee_per_gas to Uint256

* Add custom (de)serialization to ExecutionPayload

* Fix errors

* Add a quoted_u256 module

* Remove unused function

* lint

* Add test

* Remove extra line

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

NA

## Proposed Changes

This is a wholesale rip-off of #2708, see that PR for more of a description.

I've made this PR since @realbigsean is offline and I can't merge his PR due to Github's frustrating `target-branch-check` bug. I also changed the branch to `unstable`, since I'm trying to minimize the diff between `merge-f2f`/`unstable`. I'll just rebase `merge-f2f` onto `unstable` after this PR merges.

When running `make lint` I noticed the following warning:

```

warning: patch for `fixed-hash` uses the features mechanism. default-features and features will not take effect because the patch dependency does not support this mechanism

```

So, I removed the `features` section from the patch.

## Additional Info

NA

Co-authored-by: realbigsean <seananderson33@gmail.com>