## Issue Addressed

Closes#1719

## Proposed Changes

Lift the internal `RwLock`s and `Mutex`es from the `Observed*` data structures to resolve the race conditions described in #1719.

Most of this work was done by @paulhauner on his `lift-locks` branch, I merely updated it for the current `master` and checked over it.

## Additional Info

I think it would be prudent to test this on a testnet or two before mainnet launch, just to be sure that the extra lock contention doesn't negatively impact performance.

## Issue Addressed

NA

## Proposed Changes

- Caches later blocks than is required by `ETH1_FOLLOW_DISTANCE`.

- Adds logging to `warn` if the eth1 cache is insufficiently primed.

- Use `max_by_key` instead of `max_by` in `BeaconChain::Eth1Chain` since it's simpler.

- Rename `voting_period_start_timestamp` to `voting_target_timestamp` for accuracy.

## Additional Info

The reason for eating into the `ETH1_FOLLOW_DISTANCE` and caching blocks that are closer to the head is due to possibility for `SECONDS_PER_ETH1_BLOCK` to be incorrect (as is the case for the Pyrmont testnet on Goerli).

If `SECONDS_PER_ETH1_BLOCK` is too short, we'll skip back too far from the head and skip over blocks that would be valid [`is_candidate_block`](https://github.com/ethereum/eth2.0-specs/blob/v1.0.0/specs/phase0/validator.md#eth1-data) blocks. This was the case on the Pyrmont testnet and resulted in Lighthouse choosing blocks that were about 30 minutes older than is ideal.

## Issue Addressed

*Should* address #1917

## Proposed Changes

Stops the `BackgroupMigrator` rx channel from backing up with big `BeaconState` messages.

Looking at some logs from my Medalla node, we can see a discrepancy between the head finalized epoch and the migrator finalized epoch:

```

Nov 17 16:50:21.606 DEBG Head beacon block slot: 129214, root: 0xbc7a…0b99, finalized_epoch: 4033, finalized_root: 0xf930…6562, justified_epoch: 4035, justified_root: 0x206b…9321, service: beacon

Nov 17 16:50:21.626 DEBG Batch processed service: sync, processed_blocks: 43, last_block_slot: 129214, chain: 8274002112260436595, first_block_slot: 129153, batch_epoch: 4036

Nov 17 16:50:21.626 DEBG Chain advanced processing_target: 4036, new_start: 4036, previous_start: 4034, chain: 8274002112260436595, service: sync

Nov 17 16:50:22.162 DEBG Completed batch received awaiting_batches: 5, blocks: 47, epoch: 4048, chain: 8274002112260436595, service: sync

Nov 17 16:50:22.162 DEBG Requesting batch start_slot: 129601, end_slot: 129664, downloaded: 0, processed: 0, state: Downloading(16Uiu2HAmG3C3t1McaseReECjAF694tjVVjkDoneZEbxNhWm1nZaT, 0 blocks, 1273), epoch: 4050, chain: 8274002112260436595, service: sync

Nov 17 16:50:22.654 DEBG Database compaction complete service: beacon

Nov 17 16:50:22.655 INFO Starting database pruning new_finalized_epoch: 2193, old_finalized_epoch: 2192, service: beacon

```

I believe this indicates that the migrator rx has a backed-up queue of `MigrationNotification` items which each contain a `BeaconState`.

## TODO

- [x] Remove finalized state requirement for op-pool

## Proposed Changes

In an attempt to fix OOM issues and database consistency issues observed by some users after the introduction of compaction in v0.3.4, this PR makes the following changes:

* Run compaction less often: roughly every 1024 epochs, including after long periods of non-finality. I think the division check proposed by Paul is pretty solid, and ensures we don't miss any events where we should be compacting. LevelDB lacks an easy way to check the size of the DB, which would be another good trigger.

* Make it possible to disable the compaction on finalization using `--auto-compact-db=false`

* Make it possible to trigger a manual, single-threaded foreground compaction on start-up using `--compact-db`

* Downgrade the pruning log to `DEBUG`, as it's particularly noisy during sync

I would like to ship these changes to affected users ASAP, and will document them further in the Advanced Database section of the book if they prove effective.

## Issue Addressed

Resolves#1809Resolves#1824Resolves#1818Resolves#1828 (hopefully)

## Proposed Changes

- add `validator_index` to the proposer duties endpoint

- add the ability to query for historical proposer duties

- `StateId` deserialization now fails with a 400 warp rejection

- add the `validator_balances` endpoint

- update the `aggregate_and_proofs` endpoint to accept an array

- updates the attester duties endpoint from a `GET` to a `POST`

- reduces the number of times we query for proposer duties from once per slot per validator to only once per slot

Co-authored-by: realbigsean <seananderson33@gmail.com>

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

Closes#1866

## Proposed Changes

* Compact the database on finalization. This removes the deleted states from disk completely. Because it happens in the background migrator, it doesn't block other database operations while it runs. On my Medalla node it took about 1 minute and shrank the database from 90GB to 9GB.

* Fix an inefficiency in the pruning algorithm where it would always use the genesis checkpoint as the `old_finalized_checkpoint` when running for the first time after start-up. This would result in loading lots of states one-at-a-time back to genesis, and storing a lot of block roots in memory. The new code stores the old finalized checkpoint on disk and only uses genesis if no checkpoint is already stored. This makes it both backwards compatible _and_ forwards compatible -- no schema change required!

* Introduce two new `INFO` logs to indicate when pruning has started and completed. Users seem to want to know this information without enabling debug logs!

## Issue Addressed

- Related to #1691

## Proposed Changes

Adds the following API endpoints:

- `GET lighthouse/eth1/syncing`: status about how synced we are with Eth1.

- `GET lighthouse/eth1/block_cache`: all locally cached eth1 blocks.

- `GET lighthouse/eth1/deposit_cache`: all locally cached eth1 deposits.

Additionally:

- Moves some types from the `beacon_node/eth1` to the `common/eth2` crate, so they can be used in the API without duplication.

- Allow `update_deposit_cache` and `update_block_cache` to take an optional head block number to avoid duplicate requests.

## Additional Info

TBC

## Issue Addressed

Closes#800Closes#1713

## Proposed Changes

Implement the temporary state storage algorithm described in #800. Specifically:

* Add `DBColumn::BeaconStateTemporary`, for storing 0-length temporary marker values.

* Store intermediate states immediately as they are created, marked temporary. Delete the temporary flag if the block is processed successfully.

* Add a garbage collection process to delete leftover temporary states on start-up.

* Bump the database schema version to 2 so that a DB with temporary states can't accidentally be used with older versions of the software. The auto-migration is a no-op, but puts in place some infra that we can use for future migrations (e.g. #1784)

## Additional Info

There are two known race conditions, one potentially causing permanent faults (hopefully rare), and the other insignificant.

### Race 1: Permanent state marked temporary

EDIT: this has been fixed by the addition of a lock around the relevant critical section

There are 2 threads that are trying to store 2 different blocks that share some intermediate states (e.g. they both skip some slots from the current head). Consider this sequence of events:

1. Thread 1 checks if state `s` already exists, and seeing that it doesn't, prepares an atomic commit of `(s, s_temporary_flag)`.

2. Thread 2 does the same, but also gets as far as committing the state txn, finishing the processing of its block, and _deleting_ the temporary flag.

3. Thread 1 is (finally) scheduled again, and marks `s` as temporary with its transaction.

4.

a) The process is killed, or thread 1's block fails verification and the temp flag is not deleted. This is a permanent failure! Any attempt to load state `s` will fail... hope it isn't on the main chain! Alternatively (4b) happens...

b) Thread 1 finishes, and re-deletes the temporary flag. In this case the failure is transient, state `s` will disappear temporarily, but will come back once thread 1 finishes running.

I _hope_ that steps 1-3 only happen very rarely, and 4a even more rarely. It's hard to know

This once again begs the question of why we're using LevelDB (#483), when it clearly doesn't care about atomicity! A ham-fisted fix would be to wrap the hot and cold DBs in locks, which would bring us closer to how other DBs handle read-write transactions. E.g. [LMDB only allows one R/W transaction at a time](https://docs.rs/lmdb/0.8.0/lmdb/struct.Environment.html#method.begin_rw_txn).

### Race 2: Temporary state returned from `get_state`

I don't think this race really matters, but in `load_hot_state`, if another thread stores a state between when we call `load_state_temporary_flag` and when we call `load_hot_state_summary`, then we could end up returning that state even though it's only a temporary state. I can't think of any case where this would be relevant, and I suspect if it did come up, it would be safe/recoverable (having data is safer than _not_ having data).

This could be fixed by using a LevelDB read snapshot, but that would require substantial changes to how we read all our values, so I don't think it's worth it right now.

## Issue Addressed

Closes#1769Closes#1708

## Proposed Changes

Tweaks the op pool pruning so that the attestation pool is pruned against the wall-clock epoch instead of the finalized state's epoch. This should reduce the unbounded growth that we've seen during periods without finality.

Also fixes up the voluntary exit pruning as raised in #1708.

## Issue Addressed

Closes#1548

## Proposed Changes

Optimizes attester slashing choice by choosing the ones that cover the most amount of validators slashed, with the highest effective balances

## Additional Info

Initial pass, need to write a test for it

## Issue Addressed

Resolves#1776

## Proposed Changes

The beacon chain builder was using the canonical head's state root for the `genesis_state_root` field.

## Additional Info

## Issue Addressed

Closes#1557

## Proposed Changes

Modify the pruning algorithm so that it mutates the head-tracker _before_ committing the database transaction to disk, and _only if_ all the heads to be removed are still present in the head-tracker (i.e. no concurrent mutations).

In the process of writing and testing this I also had to make a few other changes:

* Use internal mutability for all `BeaconChainHarness` functions (namely the RNG and the graffiti), in order to enable parallel calls (see testing section below).

* Disable logging in harness tests unless the `test_logger` feature is turned on

And chose to make some clean-ups:

* Delete the `NullMigrator`

* Remove type-based configuration for the migrator in favour of runtime config (simpler, less duplicated code)

* Use the non-blocking migrator unless the blocking migrator is required. In the store tests we need the blocking migrator because some tests make asserts about the state of the DB after the migration has run.

* Rename `validators_keypairs` -> `validator_keypairs` in the `BeaconChainHarness`

## Testing

To confirm that the fix worked, I wrote a test using [Hiatus](https://crates.io/crates/hiatus), which can be found here:

https://github.com/michaelsproul/lighthouse/tree/hiatus-issue-1557

That test can't be merged because it inserts random breakpoints everywhere, but if you check out that branch you can run the test with:

```

$ cd beacon_node/beacon_chain

$ cargo test --release --test parallel_tests --features test_logger

```

It should pass, and the log output should show:

```

WARN Pruning deferred because of a concurrent mutation, message: this is expected only very rarely!

```

## Additional Info

This is a backwards-compatible change with no impact on consensus.

## Issue Addressed

- Resolves#1706

## Proposed Changes

Updates dependencies across the workspace. Any crate that was not able to be brought to the latest version is listed in #1712.

## Additional Info

NA

* Initial rebase

* Remove old code

* Correct release tests

* Rebase commit

* Remove eth2-testnet dep on eth2libp2p

* Remove crates lost in rebase

* Remove unused dep

## Issue Addressed

NA

## Proposed Changes

Addresses an interesting DoS vector raised by @protolambda by verifying that the head and target are consistent when processing aggregate attestations. This check prevents us from loading very old target blocks and doing lots of work to skip them to the current slot.

## Additional Info

NA

This commit was modified by Paul H whilst rebasing master onto

v0.3.0-staging

Adding UPnP support will help grow the DHT by allowing NAT traversal for peers with UPnP supported routers.

Using IGD library: https://docs.rs/igd/0.10.0/igd/

Adding the the libp2p tcp port and discovery udp port. If this fails it simply logs the attempt and moves on

Co-authored-by: Age Manning <Age@AgeManning.com>

This commit was edited by Paul H when rebasing from master to

v0.3.0-staging.

Solution 2 proposed here: https://github.com/sigp/lighthouse/issues/1435#issuecomment-692317639

- Adds an optional `--wss-checkpoint` flag that takes a string `root:epoch`

- Verify that the given checkpoint exists in the chain, or that the the chain syncs through this checkpoint. If not, shutdown and prompt the user to purge state before restarting.

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

Closes#673

## Proposed Changes

Store a schema version in the database so that future releases can check they're running against a compatible database version. This would also enable automatic migration on breaking database changes, but that's left as future work.

The database config is also stored in the database so that the `slots_per_restore_point` value can be checked for consistency, which closes#673

- Resolves#1550

- Resolves#824

- Resolves#825

- Resolves#1131

- Resolves#1411

- Resolves#1256

- Resolve#1177

- Includes the `ShufflingId` struct initially defined in #1492. That PR is now closed and the changes are included here, with significant bug fixes.

- Implement the https://github.com/ethereum/eth2.0-APIs in a new `http_api` crate using `warp`. This replaces the `rest_api` crate.

- Add a new `common/eth2` crate which provides a wrapper around `reqwest`, providing the HTTP client that is used by the validator client and for testing. This replaces the `common/remote_beacon_node` crate.

- Create a `http_metrics` crate which is a dedicated server for Prometheus metrics (they are no longer served on the same port as the REST API). We now have flags for `--metrics`, `--metrics-address`, etc.

- Allow the `subnet_id` to be an optional parameter for `VerifiedUnaggregatedAttestation::verify`. This means it does not need to be provided unnecessarily by the validator client.

- Move `fn map_attestation_committee` in `mod beacon_chain::attestation_verification` to a new `fn with_committee_cache` on the `BeaconChain` so the same cache can be used for obtaining validator duties.

- Add some other helpers to `BeaconChain` to assist with common API duties (e.g., `block_root_at_slot`, `head_beacon_block_root`).

- Change the `NaiveAggregationPool` so it can index attestations by `hash_tree_root(attestation.data)`. This is a requirement of the API.

- Add functions to `BeaconChainHarness` to allow it to create slashings and exits.

- Allow for `eth1::Eth1NetworkId` to go to/from a `String`.

- Add functions to the `OperationPool` to allow getting all objects in the pool.

- Add function to `BeaconState` to check if a committee cache is initialized.

- Fix bug where `seconds_per_eth1_block` was not transferring over from `YamlConfig` to `ChainSpec`.

- Add the `deposit_contract_address` to `YamlConfig` and `ChainSpec`. We needed to be able to return it in an API response.

- Change some uses of serde `serialize_with` and `deserialize_with` to a single use of `with` (code quality).

- Impl `Display` and `FromStr` for several BLS fields.

- Check for clock discrepancy when VC polls BN for sync state (with +/- 1 slot tolerance). This is not intended to be comprehensive, it was just easy to do.

- See #1434 for a per-endpoint overview.

- Seeking clarity here: https://github.com/ethereum/eth2.0-APIs/issues/75

- [x] Add docs for prom port to close#1256

- [x] Follow up on this #1177

- [x] ~~Follow up with #1424~~ Will fix in future PR.

- [x] Follow up with #1411

- [x] ~~Follow up with #1260~~ Will fix in future PR.

- [x] Add quotes to all integers.

- [x] Remove `rest_types`

- [x] Address missing beacon block error. (#1629)

- [x] ~~Add tests for lighthouse/peers endpoints~~ Wontfix

- [x] ~~Follow up with validator status proposal~~ Tracked in #1434

- [x] Unify graffiti structs

- [x] ~~Start server when waiting for genesis?~~ Will fix in future PR.

- [x] TODO in http_api tests

- [x] Move lighthouse endpoints off /eth/v1

- [x] Update docs to link to standard

- ~~Blocked on #1586~~

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed

Closes#1680

## Proposed Changes

This PR fixes a race condition in beacon node start-up whereby the pubkey cache could be created by the beacon chain builder before the `PersistedBeaconChain` was stored to disk. When the node restarted, it would find the persisted chain missing, and attempt to start from scratch, creating a new pubkey cache in the process. This call to `ValidatorPubkeyCache::new` would fail if the file already existed (which it did). I changed the behaviour so that pubkey cache initialization now doesn't care whether there's a file already in existence (it's only a cache after all). Instead it will truncate and recreate the file in the race scenario described.

## Issue Addressed

NA

## Proposed Changes

There are four new conditions introduced in v0.12.3:

1. _[REJECT]_ The attestation's epoch matches its target -- i.e. `attestation.data.target.epoch ==

compute_epoch_at_slot(attestation.data.slot)`

1. _[REJECT]_ The attestation's target block is an ancestor of the block named in the LMD vote -- i.e.

`get_ancestor(store, attestation.data.beacon_block_root, compute_start_slot_at_epoch(attestation.data.target.epoch)) == attestation.data.target.root`

1. _[REJECT]_ The committee index is within the expected range -- i.e. `data.index < get_committee_count_per_slot(state, data.target.epoch)`.

1. _[REJECT]_ The number of aggregation bits matches the committee size -- i.e.

`len(attestation.aggregation_bits) == len(get_beacon_committee(state, data.slot, data.index))`.

This PR implements new logic to suit (1) and (2). Tests are added for (3) and (4), although they were already implicitly enforced.

## Additional Info

- There's a bit of edge-case with target root verification that I raised here: https://github.com/ethereum/eth2.0-specs/pull/2001#issuecomment-699246659

- I've had to add an `--ignore` to `cargo audit` to get CI to pass. See https://github.com/sigp/lighthouse/issues/1669

## Issue Addressed

- Resolves#1616

## Proposed Changes

If we look at the function which persists fork choice and the canonical head to disk:

1db8daae0c/beacon_node/beacon_chain/src/beacon_chain.rs (L234-L280)

There is a race-condition which might cause the canonical head and fork choice values to be out-of-sync.

I believe this is the cause of #1616. I managed to recreate the issue and produce a database that was unable to sync under the `master` branch but able to sync with this branch.

These new changes solve the issue by ignoring the persisted `canonical_head_block_root` value and instead getting fork choice to generate it. This ensures that the canonical head is in-sync with fork choice.

## Additional Info

This is hotfix method that leaves some crusty code hanging around. Once this PR is merged (to satisfy the v0.2.x users) we should later update and merge #1638 so we can have a clean fix for the v0.3.x versions.

## Issue Addressed

- Resolves#1616

## Proposed Changes

Fixes a bug where we are unable to read the finalized block from fork choice.

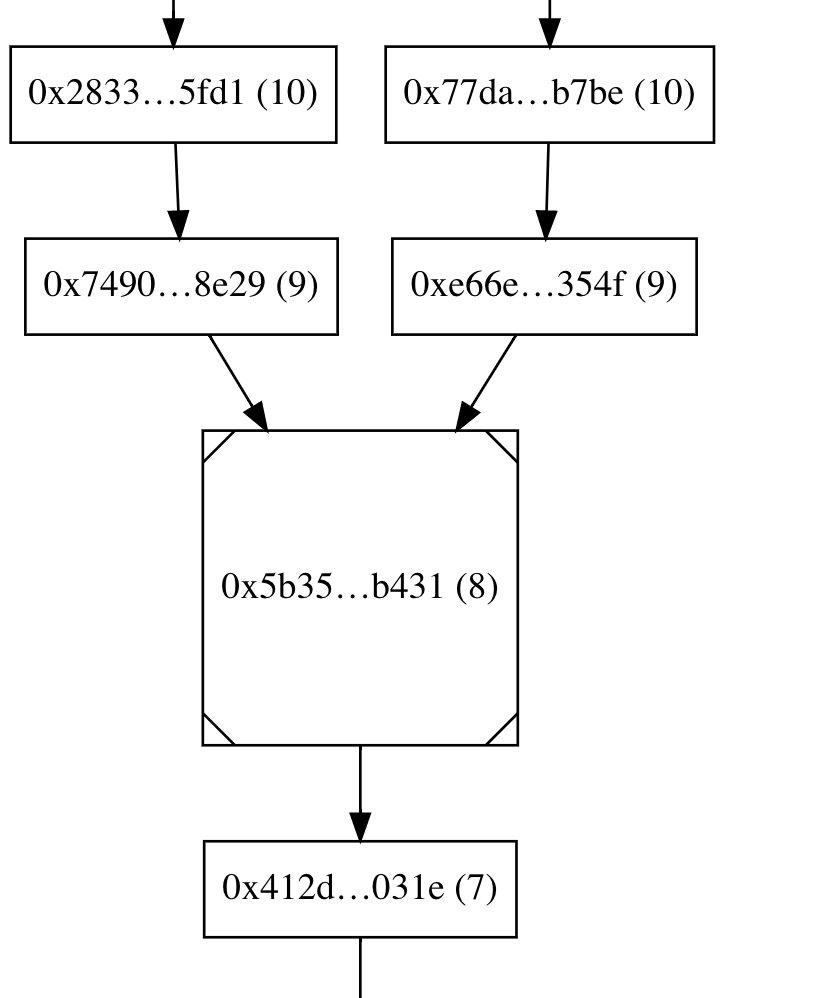

## Detail

I had made an assumption that the finalized block always has a parent root of `None`:

e5fc6bab48/consensus/fork_choice/src/fork_choice.rs (L749-L752)

This was a faulty assumption, we don't set parent *roots* to `None`. Instead we *sometimes* set parent *indices* to `None`, depending if this pruning condition is satisfied:

e5fc6bab48/consensus/proto_array/src/proto_array.rs (L229-L232)

The bug manifested itself like this:

1. We attempt to get the finalized block from fork choice

1. We try to check that the block is descendant of the finalized block (note: they're the same block).

1. We expect the parent root to be `None`, but it's actually the parent root of the finalized root.

1. We therefore end up checking if the parent of the finalized root is a descendant of itself. (note: it's an *ancestor* not a *descendant*).

1. We therefore declare that the finalized block is not a descendant of (or eq to) the finalized block. Bad.

## Additional Info

In reflection, I made a poor assumption in the quest to obtain a probably negligible performance gain. The performance gain wasn't worth the risk and we got burnt.

## Issue Addressed

Partly addresses #1547

## Proposed Changes

This fix addresses the missing attestations at slot 0 of an epoch (also sometimes slot 1 when slot 0 was skipped).

There are 2 cases:

1. BN receives the block for the attestation slot after 4 seconds (1/3rd of the slot).

2. No block is proposed for this slot.

In both cases, when we produce the attestation, we pass the head state to the

`produce_unaggregated_attestation_for_block` function here

9833eca024/beacon_node/beacon_chain/src/beacon_chain.rs (L845-L850)

Since we don't advance the state in this function, we set `attestation.data.source = state.current_justified_checkpoint` which is atleast 2 epochs lower than current_epoch(wall clock epoch).

This attestation is invalid and cannot be included in a block because of this assert from the spec:

```python

if data.target.epoch == get_current_epoch(state):

assert data.source == state.current_justified_checkpoint

state.current_epoch_attestations.append(pending_attestation)

```

https://github.com/ethereum/eth2.0-specs/blob/dev/specs/phase0/beacon-chain.md#attestations

This PR changes the `produce_unaggregated_attestation_for_block` function to ensure that it advances the state before producing the attestation at the new epoch.

Running this on my node, have missed 0 attestations across all 8 of my validators in a 100 epoch period 🎉

To compare, I was missing ~14 attestations across all 8 validators in the same 100 epoch period before the fix.

Will report missed attestations if any after running for another 100 epochs tomorrow.

Converts the graffiti binary data to string before printing to logs.

## Issue Addressed

#1566

## Proposed Changes

Rather than converting graffiti to a vector the binary data less the last character is passed to String::from_utf_lossy(). This then allows us to call the to_string() function directly to give us the string

## Additional Info

Rust skills are fairly weak

The PR:

* Adds the ability to generate a crucial test scenario that isn't possible with `BeaconChainHarness` (i.e. two blocks occupying the same slot; previously forks necessitated skipping slots):

* New testing API: Instead of repeatedly calling add_block(), you generate a sorted `Vec<Slot>` and leave it up to the framework to generate blocks at those slots.

* Jumping backwards to an earlier epoch is a hard error, so that tests necessarily generate blocks in a epoch-by-epoch manner.

* Configures the test logger so that output is printed on the console in case a test fails. The logger also plays well with `--nocapture`, contrary to the existing testing framework

* Rewrites existing fork pruning tests to use the new API

* Adds a tests that triggers finalization at a non epoch boundary slot

* Renamed `BeaconChainYoke` to `BeaconChainTestingRig` because the former has been too confusing

* Fixed multiple tests (e.g. `block_production_different_shuffling_long`, `delete_blocks_and_states`, `shuffling_compatible_simple_fork`) that relied on a weird (and accidental) feature of the old `BeaconChainHarness` that attestations aren't produced for epochs earlier than the current one, thus masking potential bugs in test cases.

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Issue Addressed

Closes#1488

## Proposed Changes

* Prevent the pruning algorithm from over-eagerly deleting states at skipped slots when they are shared with the canonical chain.

* Add `debug` logging to the pruning algorithm so we have so better chance of debugging future issues from logs.

* Modify the handling of the "finalized state" in the beacon chain, so that it's always the state at the first slot of the finalized epoch (previously it was the state at the finalized block). This gives database pruning a clearer and cleaner view of things, and will marginally impact the pruning of the op pool, observed proposers, etc (in ways that are safe as far as I can tell).

* Remove duplicated `RevertedFinalizedEpoch` check from `after_finalization`

* Delete useless and unused `max_finality_distance`

* Add tests that exercise pruning with shared states at skip slots

* Delete unnecessary `block_strategy` argument from `add_blocks` and friends in the test harness (will likely conflict with #1380 slightly, sorry @adaszko -- but we can fix that)

* Bonus: add a `BeaconChain::with_head` method. I didn't end up needing it, but it turned out quite nice, so I figured we could keep it?

## Additional Info

Any users who have experienced pruning errors on Medalla will need to resync after upgrading to a release including this change. This should end unbounded `chain_db` growth! 🎉

## Issue Addressed

NA

## Proposed Changes

Sets the default max skips to 700 so that it can cover the 693 slot skip from `80894 - 80201`.

## Additional Info

NA

## Issue Addressed

NA

## Proposed Changes

- Fixes a mistake I made in #1530 which resulted us in *not* rejecting attestations that we intended to reject.

- Adds skip-slot checks for blocks earlier in import process, so it rejects gossip and RPC blocks.

## Additional Info

NA

## Proposed Changes

To mitigate the impact of minority forks on RAM and disk usage, this change rejects blocks whose parent lies more than 320 slots (10 epochs, ~1 hour) in the past. The behaviour is configurable via `lighthouse bn --max-skip-slots N`, and can be turned off entirely using `--max-skip-slots none`.

Co-authored-by: Paul Hauner <paul@paulhauner.com>

## Issue Addressed

NA

## Proposed Changes

- Adds a new function to allow getting a state with a bad state root history for attestation verification. This reduces unnecessary tree hashing during attestation processing, which accounted for 23% of memory allocations (by bytes) in a recent `heaptrack` observation.

- Don't clone caches on intermediate epoch-boundary states during block processing.

- Reject blocks that are known to fork choice earlier during gossip processing, instead of waiting until after state has been loaded (this only happens in edge-case).

- Avoid multiple re-allocations by creating a "forced" exact size iterator.

## Additional Info

NA

## Issue Addressed

- Resolves#1451

## Proposed Changes

- Restricts the `contains_block` and `contains_block` so they only indicate a block is present if it descends from the finalized root. This helps to ensure that fork choice never points to a block that has been pruned from the database.

- Resolves#1451

- Before importing a block, double-check that its parent is known and a descendant of the finalized root.

- Split a big, monolithic block verification test into smaller tests.

## Additional Notes

I suspect there would be a craftier way to do the `is_descendant_of_finalized` check, but we're a bit tight on time now and we can optimize later if it starts showing in benches.

## TODO

- [x] Tests

## Issue Addressed

#1419

## Proposed Changes

Creates a `--graffiti` cli flag in the validator client. If the flag is set, it overrides graffiti in the beacon node.

## Additional Info

## Issue Addressed

NA

## Proposed Changes

- When producing a block, go and ensure every attestation in the naive aggregation pool is included in the operation pool. This should help us increase the number of useful attestations in a block.

- Lift the `RwLock`s inside `NaiveAggregationPool` up into a single high-level lock. There were race conditions in the existing setup and it was hard to reason about.

## Additional Info

NA

## Issue Addressed

#1028

A bit late, but I think if `BlockError` had a kind (the current `BlockError` minus everything on the variants that comes directly from the block) and the original block, more clones could be removed

## Issue Addressed

NA

## Proposed Changes

- Moves the git-based versioning we were doing into the `lighthouse_version` crate in `common`.

- Removes the `beacon_node/version` crate, replacing it with `lighthouse_version`.

- Bumps the version to `v0.2.0`.

## Additional Info

There are now two types of version string:

1. `const VERSION: &str = Lighthouse/v0.2.0-1419501f2+`

1. `version_with_platform() = Lighthouse/v0.2.0-1419501f2+/x86_64-linux`

(1) is handy cause it's a `const` and shorter. (2) has platform info so it's more useful. Note that the plus-sign (`+`) indicates the the git commit is dirty (it used to be `(modified)` but I had to shorten it to fit into graffiti).

These version strings are now included on:

- `lighthouse --version`

- `lcli --version`

- `curl localhost:5052/node/version`

- p2p messages when we communicate our version

You can update the version by changing this constant (version is not related to a `Cargo.toml`):

b9ad7102d5/common/lighthouse_version/src/lib.rs (L4-L15)

## Issue Addressed

NA

## Proposed Changes

- Refactor the `bls` crate to support multiple BLS "backends" (e.g., milagro, blst, etc).

- Removes some duplicate, unused code in `common/rest_types/src/validator.rs`.

- Removes the old "upgrade legacy keypairs" functionality (these were unencrypted keys that haven't been supported for a few testnets, no one should be using them anymore).

## Additional Info

Most of the files changed are just inconsequential changes to function names.

## TODO

- [x] Optimization levels

- [x] Infinity point: https://github.com/supranational/blst/issues/11

- [x] Ensure milagro *and* blst are tested via CI

- [x] What to do with unsafe code?

- [x] Test infinity point in signature sets

## Issue Addressed

This PR makes the `Eth1Chain::use_dummy_backend` field private. I believe this could be good to ensure the consistency of a Eth1Chain instance. 💡

## Issue Addressed

Closes#1319

## Proposed Changes

This issue:

1. Allows users to edit their Graffiti via the cli option `--graffiti`. If the graffiti is too long, lighthouse will not start and throw an error message. Otherwise, it will set the Graffiti to be the one provided by the user, right-padded with 0s.

2. Create a new `Graffiti` type and unify the code around it. With this type, everything is enforced at compile-time, and the code can be (I think...) panic-free! :)

## Additional info

Currently, only `&str` are supported, as this is the returned type by `.arg("graffiti")`.

Since this is user-input, I tried being as careful as I could. This is also why I created the `Graffiti` type, to make sure I could check as much as possible at compile time.