## Proposed Changes

With proposer boosting implemented (#2822) we have an opportunity to re-org out late blocks.

This PR adds three flags to the BN to control this behaviour:

* `--disable-proposer-reorgs`: turn aggressive re-orging off (it's on by default).

* `--proposer-reorg-threshold N`: attempt to orphan blocks with less than N% of the committee vote. If this parameter isn't set then N defaults to 20% when the feature is enabled.

* `--proposer-reorg-epochs-since-finalization N`: only attempt to re-org late blocks when the number of epochs since finalization is less than or equal to N. The default is 2 epochs, meaning re-orgs will only be attempted when the chain is finalizing optimally.

For safety Lighthouse will only attempt a re-org under very specific conditions:

1. The block being proposed is 1 slot after the canonical head, and the canonical head is 1 slot after its parent. i.e. at slot `n + 1` rather than building on the block from slot `n` we build on the block from slot `n - 1`.

2. The current canonical head received less than N% of the committee vote. N should be set depending on the proposer boost fraction itself, the fraction of the network that is believed to be applying it, and the size of the largest entity that could be hoarding votes.

3. The current canonical head arrived after the attestation deadline from our perspective. This condition was only added to support suppression of forkchoiceUpdated messages, but makes intuitive sense.

4. The block is being proposed in the first 2 seconds of the slot. This gives it time to propagate and receive the proposer boost.

## Additional Info

For the initial idea and background, see: https://github.com/ethereum/consensus-specs/pull/2353#issuecomment-950238004

There is also a specification for this feature here: https://github.com/ethereum/consensus-specs/pull/3034

Co-authored-by: Michael Sproul <micsproul@gmail.com>

Co-authored-by: pawan <pawandhananjay@gmail.com>

## Issue Addressed

NA

## Proposed Changes

This is a *potentially* contentious change, but I find it annoying that the validator monitor logs `WARN` and `ERRO` for imperfect attestations. Perfect attestation performance is unachievable (don't believe those photo-shopped beauty magazines!) since missed and poorly-packed blocks by other validators will reduce your performance.

When the validator monitor is on with 10s or more validators, I find the logs are washed out with ERROs that are not worth investigating. I suspect that users who really want to know if validators are missing attestations can do so by matching the content of the log, rather than the log level.

I'm open to feedback about this, especially from anyone who is relying on the current log levels.

## Additional Info

NA

## Breaking Changes Notes

The validator monitor will no longer emit `WARN` and `ERRO` logs for sub-optimal attestation performance. The logs will now be emitted at `INFO` level. This change was introduced to avoid cluttering the `WARN` and `ERRO` logs with alerts that are frequently triggered by the actions of other network participants (e.g., a missed block) and require no action from the user.

## Issue Addressed

Implementing the light_client_gossip topics but I'm not there yet.

Which issue # does this PR address?

Partially #3651

## Proposed Changes

Add light client gossip topics.

Please list or describe the changes introduced by this PR.

I'm going to Implement light_client_finality_update and light_client_optimistic_update gossip topics. Currently I've attempted the former and I'm seeking feedback.

## Additional Info

I've only implemented the light_client_finality_update topic because I wanted to make sure I was on the correct path. Also checking that the gossiped LightClientFinalityUpdate is the same as the locally constructed one is not implemented because caching the updates will make this much easier. Could someone give me some feedback on this please?

Please provide any additional information. For example, future considerations

or information useful for reviewers.

Co-authored-by: GeemoCandama <104614073+GeemoCandama@users.noreply.github.com>

## Issue Addressed

#3724

## Proposed Changes

Exposes certain `validator_monitor` as an endpoint on the HTTP API. Will only return metrics for validators which are actively being monitored.

### Usage

```bash

curl -X GET "http://localhost:5052/lighthouse/ui/validator_metrics" -H "accept: application/json" | jq

```

```json

{

"data": {

"validators": {

"12345": {

"attestation_hits": 10,

"attestation_misses": 0,

"attestation_hit_percentage": 100,

"attestation_head_hits": 10,

"attestation_head_misses": 0,

"attestation_head_hit_percentage": 100,

"attestation_target_hits": 5,

"attestation_target_misses": 5,

"attestation_target_hit_percentage": 50

}

}

}

}

```

## Additional Info

Based on #3756 which should be merged first.

* Add API endpoint to count statuses of all validators (#3756)

* Delete DB schema migrations for v11 and earlier (#3761)

Co-authored-by: Mac L <mjladson@pm.me>

Co-authored-by: Michael Sproul <michael@sigmaprime.io>

## Proposed Changes

Now that the Gnosis merge is scheduled, all users should have upgraded beyond Lighthouse v3.0.0. Accordingly we can delete schema migrations for versions prior to v3.0.0.

## Additional Info

I also deleted the state cache stuff I added in #3714 as it turned out to be useless for the light client proofs due to the one-slot offset.

## Issue Addressed

Closes https://github.com/sigp/lighthouse/issues/2327

## Proposed Changes

This is an extension of some ideas I implemented while working on `tree-states`:

- Cache the indexed attestations from blocks in the `ConsensusContext`. Previously we were re-computing them 3-4 times over.

- Clean up `import_block` by splitting each part into `import_block_XXX`.

- Move some stuff off hot paths, specifically:

- Relocate non-essential tasks that were running between receiving the payload verification status and priming the early attester cache. These tasks are moved after the cache priming:

- Attestation observation

- Validator monitor updates

- Slasher updates

- Updating the shuffling cache

- Fork choice attestation observation now happens at the end of block verification in parallel with payload verification (this seems to save 5-10ms).

- Payload verification now happens _before_ advancing the pre-state and writing it to disk! States were previously being written eagerly and adding ~20-30ms in front of verifying the execution payload. State catchup also sometimes takes ~500ms if we get a cache miss and need to rebuild the tree hash cache.

The remaining task that's taking substantial time (~20ms) is importing the block to fork choice. I _think_ this is because of pull-tips, and we should be able to optimise it out with a clever total active balance cache in the state (which would be computed in parallel with payload verification). I've decided to leave that for future work though. For now it can be observed via the new `beacon_block_processing_post_exec_pre_attestable_seconds` metric.

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

#3704

## Proposed Changes

Adds is_syncing_finalized: bool parameter for block verification functions. Sets the payload_verification_status to Optimistic if is_syncing_finalized is true. Uses SyncState in NetworkGlobals in BeaconProcessor to retrieve the syncing status.

## Additional Info

I could implement FinalizedSignatureVerifiedBlock if you think it would be nicer.

## Issue Addressed

Partially addresses #3651

## Proposed Changes

Adds server-side support for light_client_bootstrap_v1 topic

## Additional Info

This PR, creates each time a bootstrap without using cache, I do not know how necessary a cache is in this case as this topic is not supposed to be called frequently and IMHO we can just prevent abuse by using the limiter, but let me know what you think or if there is any caveat to this, or if it is necessary only for the sake of good practice.

Co-authored-by: Pawan Dhananjay <pawandhananjay@gmail.com>

## Issue Addressed

Part of https://github.com/sigp/lighthouse/issues/3651.

## Proposed Changes

Add a flag for enabling the light client server, which should be checked before gossip/RPC traffic is processed (e.g. https://github.com/sigp/lighthouse/pull/3693, https://github.com/sigp/lighthouse/pull/3711). The flag is available at runtime from `beacon_chain.config.enable_light_client_server`.

Additionally, a new method `BeaconChain::with_mutable_state_for_block` is added which I envisage being used for computing light client updates. Unfortunately its performance will be quite poor on average because it will only run quickly with access to the tree hash cache. Each slot the tree hash cache is only available for a brief window of time between the head block being processed and the state advance at 9s in the slot. When the state advance happens the cache is moved and mutated to get ready for the next slot, which makes it no longer useful for merkle proofs related to the head block. Rather than spend more time trying to optimise this I think we should continue prototyping with this code, and I'll make sure `tree-states` is ready to ship before we enable the light client server in prod (cf. https://github.com/sigp/lighthouse/pull/3206).

## Additional Info

I also fixed a bug in the implementation of `BeaconState::compute_merkle_proof` whereby the tree hash cache was moved with `.take()` but never put back with `.restore()`.

## Issue Addressed

#3702

Which issue # does this PR address?

#3702

## Proposed Changes

Added checkpoint-sync-url-timeout flag to cli. Added timeout field to ClientGenesis::CheckpointSyncUrl to utilize timeout set

## Additional Info

Please provide any additional information. For example, future considerations

or information useful for reviewers.

Co-authored-by: GeemoCandama <104614073+GeemoCandama@users.noreply.github.com>

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

Closes#3460

## Proposed Changes

`blocks` and `block_min_delay` are never updated in the epoch summary

Co-authored-by: Michael Sproul <micsproul@gmail.com>

## Issue Addressed

New lints for rust 1.65

## Proposed Changes

Notable change is the identification or parameters that are only used in recursion

## Additional Info

na

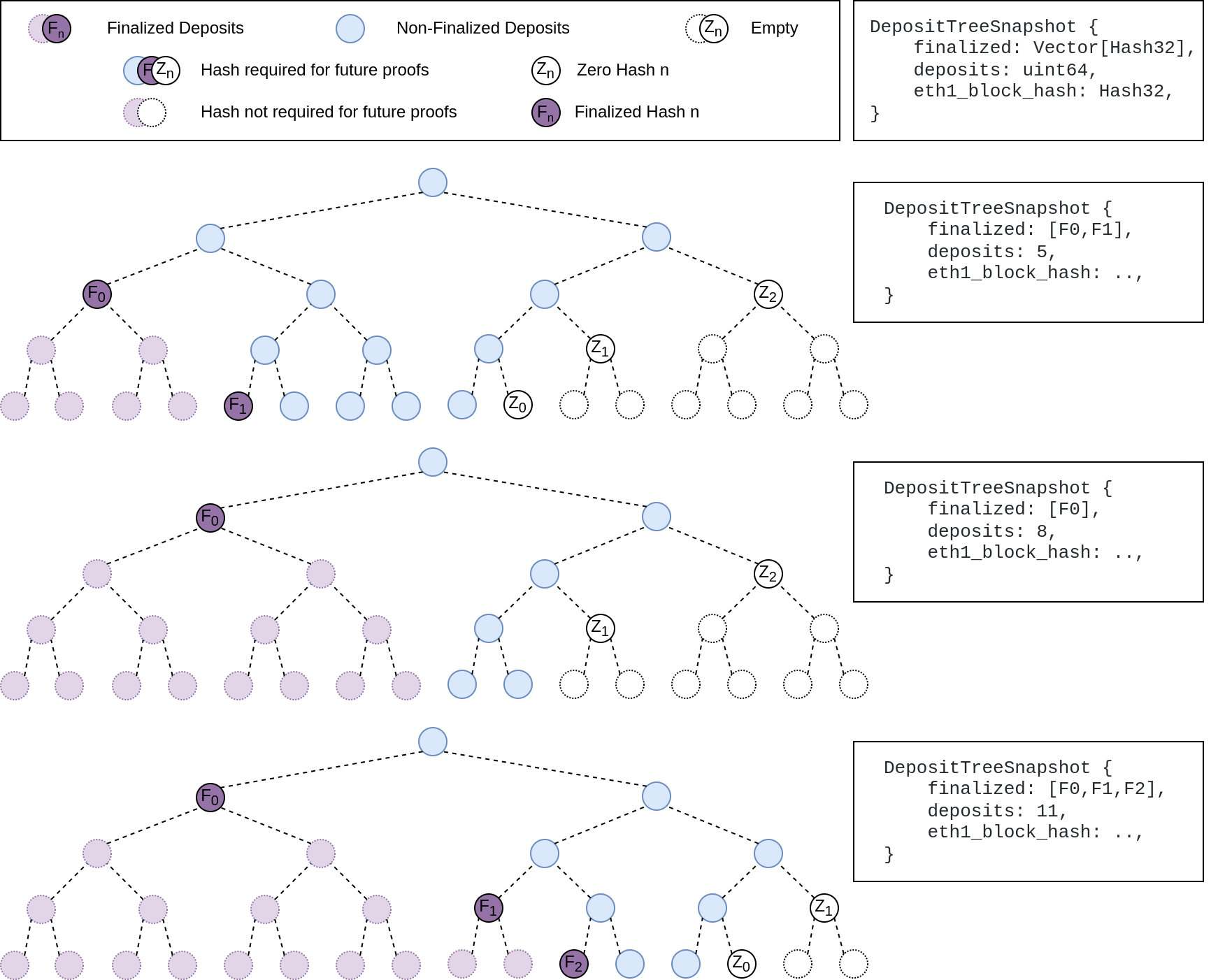

## Summary

The deposit cache now has the ability to finalize deposits. This will cause it to drop unneeded deposit logs and hashes in the deposit Merkle tree that are no longer required to construct deposit proofs. The cache is finalized whenever the latest finalized checkpoint has a new `Eth1Data` with all deposits imported.

This has three benefits:

1. Improves the speed of constructing Merkle proofs for deposits as we can just replay deposits since the last finalized checkpoint instead of all historical deposits when re-constructing the Merkle tree.

2. Significantly faster weak subjectivity sync as the deposit cache can be transferred to the newly syncing node in compressed form. The Merkle tree that stores `N` finalized deposits requires a maximum of `log2(N)` hashes. The newly syncing node then only needs to download deposits since the last finalized checkpoint to have a full tree.

3. Future proofing in preparation for [EIP-4444](https://eips.ethereum.org/EIPS/eip-4444) as execution nodes will no longer be required to store logs permanently so we won't always have all historical logs available to us.

## More Details

Image to illustrate how the deposit contract merkle tree evolves and finalizes along with the resulting `DepositTreeSnapshot`

## Other Considerations

I've changed the structure of the `SszDepositCache` so once you load & save your database from this version of lighthouse, you will no longer be able to load it from older versions.

Co-authored-by: ethDreamer <37123614+ethDreamer@users.noreply.github.com>

* add capella gossip boiler plate

* get everything compiling

Co-authored-by: realbigsean <sean@sigmaprime.io

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

* small cleanup

* small cleanup

* cargo fix + some test cleanup

* improve block production

* add fixme for potential panic

Co-authored-by: Mark Mackey <mark@sigmaprime.io>

## Issue Addressed

This reverts commit ca9dc8e094 (PR #3559) with some modifications.

## Proposed Changes

Unfortunately that PR introduced a performance regression in fork choice. The optimisation _intended_ to build the exit and pubkey caches on the head state _only if_ they were not already built. However, due to the head state always being cloned without these caches, we ended up building them every time the head changed, leading to a ~70ms+ penalty on mainnet.

fcfd02aeec/beacon_node/beacon_chain/src/canonical_head.rs (L633-L636)

I believe this is a severe enough regression to justify immediately releasing v3.2.1 with this change.

## Additional Info

I didn't fully revert #3559, because there were some unrelated deletions of dead code in that PR which I figured we may as well keep.

An alternative would be to clone the extra caches, but this likely still imposes some cost, so in the interest of applying a conservative fix quickly, I think reversion is the best approach. The optimisation from #3559 was not even optimising a particularly significant path, it was mostly for VCs running larger numbers of inactive keys. We can re-do it in the `tree-states` world where cache clones are cheap.

## Issue Addressed

Fix a bug in block production that results in blocks with 0 attestations during the first slot of an epoch.

The bug is marked by debug logs of the form:

> DEBG Discarding attestation because of missing ancestor, block_root: 0x3cc00d9c9e0883b2d0db8606278f2b8423d4902f9a1ee619258b5b60590e64f8, pivot_slot: 4042591

It occurs when trying to look up the shuffling decision root for an attestation from a slot which is prior to fork choice's finalized block. This happens frequently when proposing in the first slot of the epoch where we have:

- `current_epoch == n`

- `attestation.data.target.epoch == n - 1`

- attestation shuffling epoch `== n - 3` (decision block being the last block of `n - 3`)

- `state.finalized_checkpoint.epoch == n - 2` (first block of `n - 2` is finalized)

Hence the shuffling decision slot is out of range of the fork choice backwards iterator _by a single slot_.

Unfortunately this bug was hidden when we weren't pruning fork choice, and then reintroduced in v2.5.1 when we fixed the pruning (https://github.com/sigp/lighthouse/releases/tag/v2.5.1). There's no way to turn that off or disable the filtering in our current release, so we need a new release to fix this issue.

Fortunately, it also does not occur on every epoch boundary because of the gradual pruning of fork choice every 256 blocks (~8 epochs):

01e84b71f5/consensus/proto_array/src/proto_array_fork_choice.rs (L16)01e84b71f5/consensus/proto_array/src/proto_array.rs (L713-L716)

So the probability of proposing a 0-attestation block given a proposal assignment is approximately `1/32 * 1/8 = 0.39%`.

## Proposed Changes

- Load the block's shuffling ID from fork choice and verify it against the expected shuffling ID of the head state. This code was initially written before we had settled on a representation of shuffling IDs, so I think it's a nice simplification to make use of them here rather than more ad-hoc logic that fundamentally does the same thing.

## Additional Info

Thanks to @moshe-blox for noticing this issue and bringing it to our attention.

## Issue Addressed

Closes https://github.com/sigp/lighthouse/issues/2371

## Proposed Changes

Backport some changes from `tree-states` that remove duplicated calculations of the `proposer_index`.

With this change the proposer index should be calculated only once for each block, and then plumbed through to every place it is required.

## Additional Info

In future I hope to add more data to the consensus context that is cached on a per-epoch basis, like the effective balances of validators and the base rewards.

There are some other changes to remove indexing in tests that were also useful for `tree-states` (the `tree-states` types don't implement `Index`).

## Issue Addressed

While digging around in some logs I noticed that queries for validators by pubkey were taking 10ms+, which seemed too long. This was due to a loop through the entire validator registry for each lookup.

## Proposed Changes

Rather than using a loop through the register, this PR utilises the pubkey cache which is usually initialised at the head*. In case the cache isn't built, we fall back to the previous loop logic. In the vast majority of cases I expect the cache will be built, as the validator client queries at the `head` where all caches should be built.

## Additional Info

*I had to modify the cache build that runs after fork choice to build the pubkey cache. I think it had been optimised out, perhaps accidentally. I think it's preferable to have the exit cache and the pubkey cache built on the head state, as they are required for verifying deposits and exits respectively, and we may as well build them off the hot path of block processing. Previously they'd get built the first time a deposit or exit needed to be verified.

I've deleted the unused `map_state` function which was obsoleted by `map_state_and_execution_optimistic`.

## Issue Addressed

N/A

## Proposed Changes

With https://github.com/sigp/lighthouse/pull/3214 we made it such that you can either have 1 auth endpoint or multiple non auth endpoints. Now that we are post merge on all networks (testnets and mainnet), we cannot progress a chain without a dedicated auth execution layer connection so there is no point in having a non-auth eth1-endpoint for syncing deposit cache.

This code removes all fallback related code in the eth1 service. We still keep the single non-auth endpoint since it's useful for testing.

## Additional Info

This removes all eth1 fallback related metrics that were relevant for the monitoring service, so we might need to change the api upstream.

## Issue Addressed

fixes lints from the last rust release

## Proposed Changes

Fix the lints, most of the lints by `clippy::question-mark` are false positives in the form of https://github.com/rust-lang/rust-clippy/issues/9518 so it's allowed for now

## Additional Info

## Issue Addressed

NA

## Proposed Changes

This PR attempts to fix the following spurious CI failure:

```

---- store_tests::garbage_collect_temp_states_from_failed_block stdout ----

thread 'store_tests::garbage_collect_temp_states_from_failed_block' panicked at 'disk store should initialize: DBError { message: "Error { message: \"IO error: lock /tmp/.tmp6DcBQ9/cold_db/LOCK: already held by process\" }" }', beacon_node/beacon_chain/tests/store_tests.rs:59:10

```

I believe that some async task is taking a clone of the store and holding it in some other thread for a short time. This creates a race-condition when we try to open a new instance of the store.

## Additional Info

NA

## Issue Addressed

NA

## Proposed Changes

This PR removes duplicated block root computation.

Computing the `SignedBeaconBlock::canonical_root` has become more expensive since the merge as we need to compute the merke root of each transaction inside an `ExecutionPayload`.

Computing the root for [a mainnet block](https://beaconcha.in/slot/4704236) is taking ~10ms on my i7-8700K CPU @ 3.70GHz (no sha extensions). Given that our median seen-to-imported time for blocks is presently 300-400ms, removing a few duplicated block roots (~30ms) could represent an easy 10% improvement. When we consider that the seen-to-imported times include operations *after* the block has been placed in the early attester cache, we could expect the 30ms to be more significant WRT our seen-to-attestable times.

## Additional Info

NA